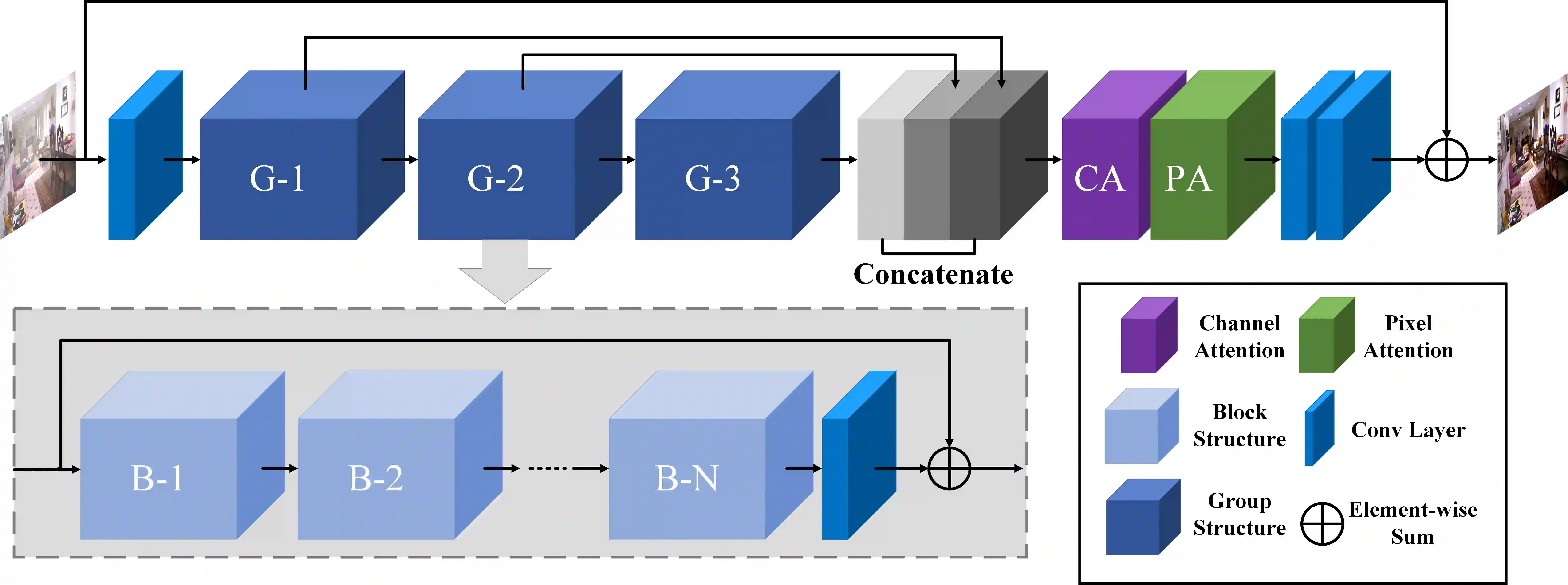

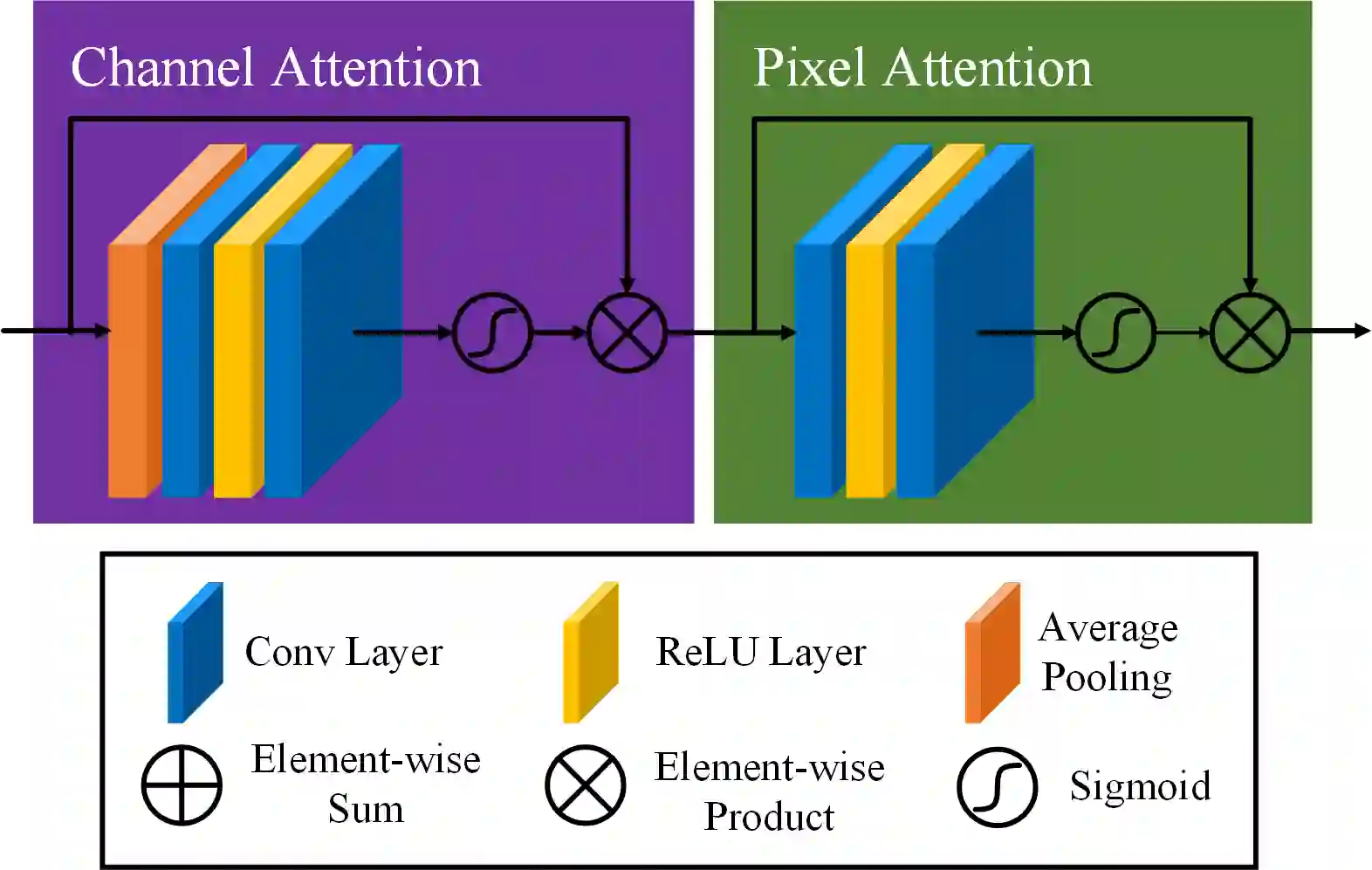

In this paper, we propose an end-to-end feature fusion attention network (FFA-Net) that directly restores the haze-free image. The FFA-Net architecture consists of three key components: 1) A novel Feature Attention (FA) module that combines a Channel Attention mechanism with a Pixel Attention mechanism. It is motivated by two observations: different channel-wise features carry very different weighted information, and haze is unevenly distributed across image pixels. FA treats different features and pixels unequally, which gives it additional flexibility in dealing with different types of information and expands the representational ability of CNNs. 2) A basic block structure consisting of Local Residual Learning and Feature Attention. Local Residual Learning lets less important information, such as thin-haze regions or low-frequency content, bypass the block through multiple local residual connections, so that the main network architecture can focus on more effective information. 3) An attention-based Feature Fusion structure across different levels (the FFA structure), whose feature weights are adaptively learned by the Feature Attention (FA) module, giving more weight to important features. This structure also retains the information of shallow layers and passes it into deep layers. The experimental results demonstrate that FFA-Net surpasses previous state-of-the-art single image dehazing methods by a very large margin, both quantitatively and qualitatively, raising the best published PSNR on the SOTS indoor test dataset from 30.23 dB to 35.77 dB. Code has been made available at GitHub.
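The three components can be made concrete with a minimal NumPy sketch. It rests on several assumptions that do not come from the abstract. 1×1 convolutions (plain channel-mixing matrices) stand in for the spatial convolutions of a real network, and the weights are random. Each "group" is reduced to a single basic block, and every function name (`channel_weights`, `pixel_weights`, `basic_block`, `fuse`, and the `make_*` helpers) is hypothetical.

Channel Attention produces one weight per channel from globally pooled features, and Pixel Attention produces one weight per spatial location. The fusion step learns a weight per group and channel from the concatenated group outputs and then takes a weighted sum. That fusion step is one plausible reading of "adaptively learned" feature weights, not the authors' exact layer configuration.

```python
import numpy as np

# Sketch of FFA-Net-style attention in NumPy. Assumptions (not from the paper):
# 1x1 convolutions as channel-mixing matrices, random weights, one block per group.

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def relu(z):
    return np.maximum(z, 0.0)

def conv1x1(x, w, b):
    """1x1 convolution on x of shape (C_in, H, W); w is (C_out, C_in), b is (C_out,)."""
    return np.einsum("oc,chw->ohw", w, x) + b[:, None, None]

def channel_weights(x, w1, b1, w2, b2):
    """Channel Attention: global average pool, squeeze, excite, one weight per channel."""
    pooled = x.mean(axis=(1, 2))                      # (C_in,)
    return sigmoid(w2 @ relu(w1 @ pooled + b1) + b2)  # (C_out,), values in (0, 1)

def pixel_weights(x, w1, b1, w2, b2):
    """Pixel Attention: one weight per spatial location, shared by all channels."""
    return sigmoid(conv1x1(relu(conv1x1(x, w1, b1)), w2, b2))  # (1, H, W)

def rand(rng, *shape):
    return rng.normal(0.0, 0.1, size=shape)

def make_ca(c_in, c_out, r, rng):
    """Hypothetical random CA weights with channel reduction ratio r."""
    return (rand(rng, c_in // r, c_in), np.zeros(c_in // r),
            rand(rng, c_out, c_in // r), np.zeros(c_out))

def make_pa(c, r, rng):
    """Hypothetical random PA weights; the last layer outputs a single channel."""
    return (rand(rng, c // r, c), np.zeros(c // r), rand(rng, 1, c // r), np.zeros(1))

def make_block(c, r, rng):
    return {"conv1": (rand(rng, c, c), np.zeros(c)),
            "conv2": (rand(rng, c, c), np.zeros(c)),
            "ca": make_ca(c, c, r, rng), "pa": make_pa(c, r, rng)}

def feature_attention(x, ca, pa):
    """FA module: rescale channels with CA, then rescale pixels with PA."""
    x = x * channel_weights(x, *ca)[:, None, None]
    return x * pixel_weights(x, *pa)

def basic_block(x, p):
    """Conv -> ReLU -> local residual -> conv -> FA -> local residual."""
    y = relu(conv1x1(x, *p["conv1"])) + x
    y = conv1x1(y, *p["conv2"])
    return feature_attention(y, p["ca"], p["pa"]) + x

def fuse(groups, ca, pa):
    """Weight G same-shape group outputs per group and channel, sum, then apply PA."""
    g, c = len(groups), groups[0].shape[0]
    w = channel_weights(np.concatenate(groups, axis=0), *ca).reshape(g, c)
    out = sum(w[i][:, None, None] * groups[i] for i in range(g))
    return out * pixel_weights(out, *pa)

# Usage: three groups of one block each on an 8-channel 16x16 feature map.
rng = np.random.default_rng(0)
C, R, G = 8, 4, 3
x = rng.normal(size=(C, 16, 16))
outs, h = [], x
for p in [make_block(C, R, rng) for _ in range(G)]:
    h = basic_block(h, p)
    outs.append(h)  # keep shallow and deep outputs for fusion
fused = fuse(outs, make_ca(G * C, G * C, R, rng), make_pa(C, R, rng))
print(fused.shape)  # (8, 16, 16)
```

Both attention maps pass through a sigmoid, so each weight lies in (0, 1) and acts as a soft gate on a channel or a pixel. The local residual additions (`+ x`) still let information that the gates suppress reach later blocks. Collecting every group's output in `outs` before fusion is what lets shallow-layer features contribute alongside deep ones.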
