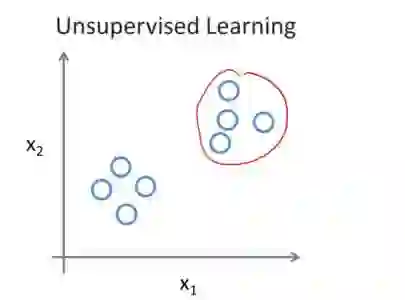

Recent deep learning models such as ChatGPT, which are trained with the back-propagation algorithm, have shown remarkable performance. However, researchers have noted a disparity between biological brain processes and back-propagation. The Forward-Forward algorithm, which trains deep learning models using only forward passes, emerged to address this disparity. Although the Forward-Forward algorithm cannot replace back-propagation because of limitations such as requiring special inputs and loss functions, it has the potential to be useful in special situations where back-propagation is difficult to apply. To work around these limitations and verify its usability, we propose an Unsupervised Forward-Forward algorithm. Using an unsupervised learning model allows training with ordinary loss functions and inputs, without those restrictions. This approach yields stable learning and enables versatile use across various datasets and tasks. From a usability perspective, given the characteristics of the Forward-Forward algorithm and the advantages of the proposed method, we anticipate practical application in scenarios such as federated learning, where deep learning layers need to be trained separately in physically distributed environments.
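To make the idea of training layers separately with an ordinary loss more concrete, the following is a minimal NumPy sketch of one possible reading. It is not the method proposed here. It assumes that each layer is a small autoencoder trained on a plain reconstruction (squared-error) loss over its own input, and that each layer's output is passed to the next layer as fixed data, so no gradient crosses layer boundaries. The names `LocalAutoencoderLayer` and `train_layerwise`, the layer sizes, and the learning rate are all hypothetical.

```python
import numpy as np


class LocalAutoencoderLayer:
    """Hypothetical layer trained only on its own reconstruction loss."""

    def __init__(self, n_in, n_hidden, rng, lr=0.02):
        self.W_enc = rng.normal(0.0, 0.1, size=(n_in, n_hidden))
        self.b_enc = np.zeros(n_hidden)
        self.W_dec = rng.normal(0.0, 0.1, size=(n_hidden, n_in))
        self.b_dec = np.zeros(n_in)
        self.lr = lr

    def encode(self, x):
        return np.tanh(x @ self.W_enc + self.b_enc)

    def train_step(self, x):
        """One gradient step on this layer's local loss; returns the loss."""
        h = self.encode(x)
        x_hat = h @ self.W_dec + self.b_dec
        err = x_hat - x
        # Squared error summed over features, averaged over the batch.
        loss = float(np.mean(np.sum(err ** 2, axis=1)))

        # Gradients stay inside this layer: nothing is sent to earlier layers.
        g_xhat = 2.0 * err / x.shape[0]
        g_W_dec = h.T @ g_xhat
        g_b_dec = g_xhat.sum(axis=0)
        g_pre = (g_xhat @ self.W_dec.T) * (1.0 - h ** 2)  # tanh derivative
        g_W_enc = x.T @ g_pre
        g_b_enc = g_pre.sum(axis=0)

        self.W_dec -= self.lr * g_W_dec
        self.b_dec -= self.lr * g_b_dec
        self.W_enc -= self.lr * g_W_enc
        self.b_enc -= self.lr * g_b_enc
        return loss


def train_layerwise(x, hidden_sizes, steps=200, seed=0):
    """Train layers one after another, each on its own local loss."""
    rng = np.random.default_rng(seed)
    layers, histories = [], []
    inputs = x
    for n_hidden in hidden_sizes:
        layer = LocalAutoencoderLayer(inputs.shape[1], n_hidden, rng)
        histories.append([layer.train_step(inputs) for _ in range(steps)])
        layers.append(layer)
        # The encoded output is handed on as plain data (no gradient link).
        inputs = layer.encode(inputs)
    return layers, histories, inputs


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    # Synthetic data: 8 features generated from 3 latent factors.
    data = rng.normal(size=(64, 3)) @ (rng.normal(size=(3, 8)) / np.sqrt(3))
    _, histories, features = train_layerwise(data, hidden_sizes=[6, 4])
    for i, history in enumerate(histories):
        print(f"layer {i}: loss {history[0]:.3f} -> {history[-1]:.3f}")
    print("final feature shape:", features.shape)
```

Because each layer needs only its own input and its own loss, the layers in this sketch could in principle sit on different machines and exchange only forward activations, which is the property the federated learning scenario relies on. The greedy, one-layer-at-a-time schedule is a simplification chosen for brevity.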
