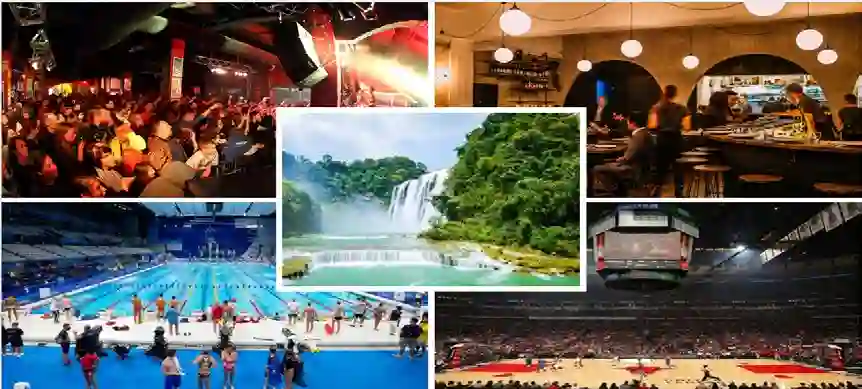

Speech enhancement plays an essential role in various applications, and integrating visual information has been shown to bring substantial advantages. However, most current research concentrates on facial and lip movements, which can be compromised or entirely unavailable when occlusions occur or the camera is far from the speaker. Contextual visual cues from the surrounding environment, by contrast, have been overlooked: for example, when we see a dog bark, our brain has the innate ability to discern and filter out the barking noise. To this end, we introduce a novel task, SAV-SE. To the best of our knowledge, this is the first proposal to use rich contextual information from synchronized video as auxiliary cues to indicate the type of noise, which in turn improves speech enhancement performance. Specifically, we propose the VC-S$^2$E method, which incorporates Conformer and Mamba modules for their complementary strengths. Extensive experiments on the public MUSIC, AVSpeech, and AudioSet datasets demonstrate the superiority of VC-S$^2$E over other competitive methods. We will make the source code publicly available. Project demo page: https://AVSEPage.github.io/
