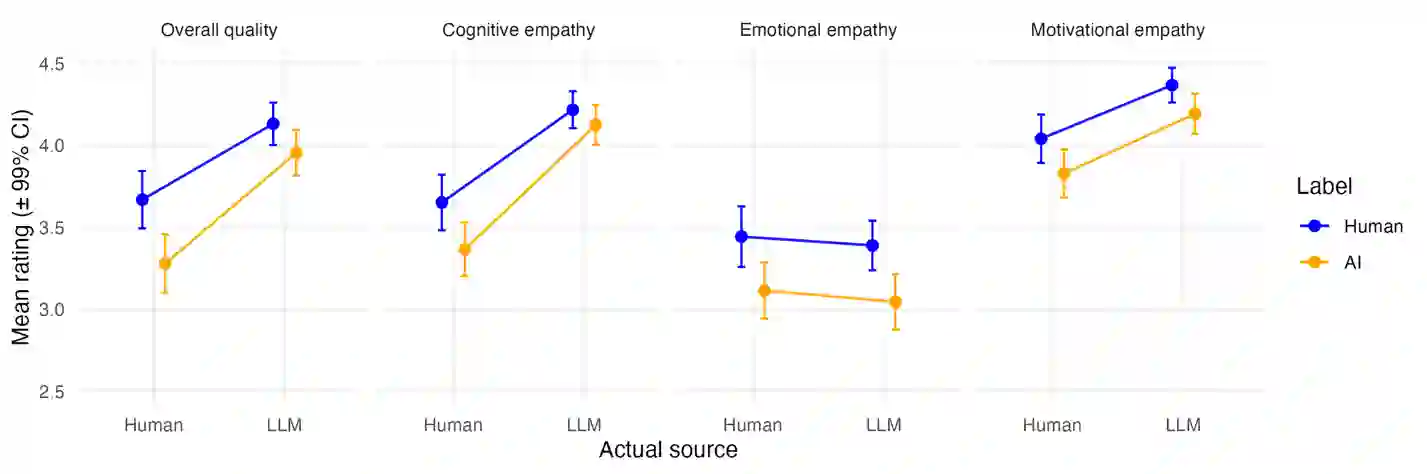

Artificial intelligence systems increasingly generate text intended to provide social and emotional support. Understanding how users perceive empathic qualities in such content is therefore critical. We examined differences in perceived empathy signals between human-written and large language model (LLM)-generated relationship advice, and the influence of authorship labels. Across two preregistered experiments (Study 1: n = 641; Study 2: n = 500), participants rated advice texts on overall quality and perceived cognitive, emotional, and motivational empathy. Multilevel models accounted for the nested rating structure. LLM-generated advice was consistently perceived as higher in overall quality, cognitive empathy, and motivational empathy. Evidence for a widely reported negativity bias toward AI-labelled content was limited. Emotional empathy showed no consistent source advantage. Individual differences in AI attitudes modestly influenced judgments but did not alter the overall pattern. These findings suggest that perceptions of empathic communication are primarily driven by linguistic features rather than authorship beliefs, with implications for the design of AI-mediated support systems.
