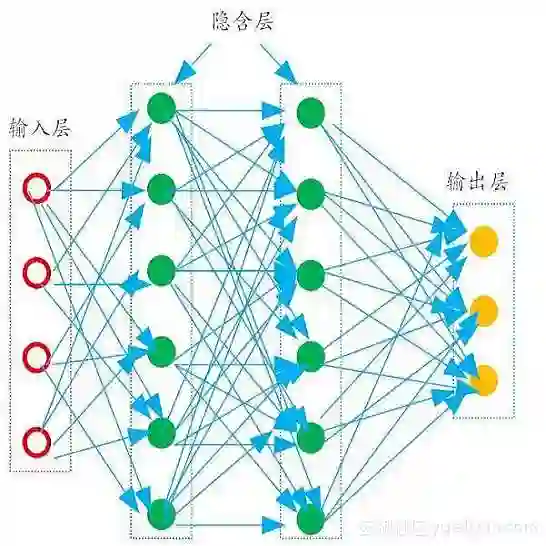

Current computational approaches for analysing or generating code-mixed sentences do not explicitly model the "naturalness" or "acceptability" of code-mixed sentences, but instead rely on training corpora to reflect the distribution of acceptable code-mixed sentences. Modelling human judgement of the acceptability of code-mixed text can help distinguish natural code-mixed text and enable quality-controlled generation of code-mixed text. To this end, we construct Cline, a dataset containing human acceptability judgements for English-Hindi (en-hi) code-mixed text. Cline is the largest of its kind, with 16,642 sentences drawn from two sources: synthetically generated code-mixed text and samples collected from online social media. Our analysis establishes that popular code-mixing metrics such as CMI, Number of Switch Points, and Burstiness, which are used to filter, curate, and compare code-mixed corpora, have low correlation with human acceptability judgements, underlining the necessity of our dataset. Experiments using Cline demonstrate that simple Multilayer Perceptron (MLP) models trained solely on code-mixing metrics are outperformed by fine-tuned pre-trained Multilingual Large Language Models (MLLMs). Specifically, XLM-Roberta and Bernice outperform IndicBERT across different configurations in challenging data settings. Comparison with ChatGPT's zero-shot and few-shot capabilities shows that MLLMs fine-tuned on larger data outperform ChatGPT, indicating scope for improvement in code-mixed tasks. Zero-shot transfer from English-Hindi to English-Telugu acceptability judgements using our model checkpoints proves superior to random baselines, which suggests applicability to other code-mixed language pairs and opens further avenues of research. We publicly release our human-annotated dataset, trained checkpoints, code-mix corpus, and code for data generation and model training.
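To make the code-mixing metrics named above concrete, the following is a minimal sketch of two of them computed from per-token language tags. It assumes the commonly cited definition of the Code-Mixing Index, CMI = 100 × (1 − max_i(w_i) / (n − u)), where n is the number of tokens, u the number of language-independent tokens, and w_i the token count of language i. The function names, the tag values (`"hi"`, `"en"`), and the `"univ"` label for language-independent tokens are illustrative assumptions, not taken from the released code.

```python
from collections import Counter


def cmi(tags, other="univ"):
    """Code-Mixing Index for one sentence, given one language tag per token.

    `other` marks language-independent tokens (punctuation, named entities,
    and so on); this label is an assumption made for the sketch.
    """
    n = len(tags)
    lang_counts = Counter(t for t in tags if t != other)
    u = n - sum(lang_counts.values())  # language-independent tokens
    if n == u:
        # No language-tagged tokens at all: CMI is defined as 0.
        return 0.0
    return 100.0 * (1.0 - max(lang_counts.values()) / (n - u))


def switch_points(tags, other="univ"):
    """Number of positions where the language changes between adjacent
    language-tagged tokens (language-independent tokens are skipped)."""
    langs = [t for t in tags if t != other]
    return sum(1 for a, b in zip(langs, langs[1:]) if a != b)


if __name__ == "__main__":
    # Hypothetical tagged sentence: two Hindi tokens, two English tokens,
    # and one language-independent token.
    example = ["hi", "en", "en", "hi", "univ"]
    print(cmi(example), switch_points(example))
```

Both functions look only at the sequence of language tags, not at the words or their grammatical roles, so two sentences with identical tag sequences receive identical scores regardless of how natural they sound. That limitation is consistent with the low correlation with human judgements reported above.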
