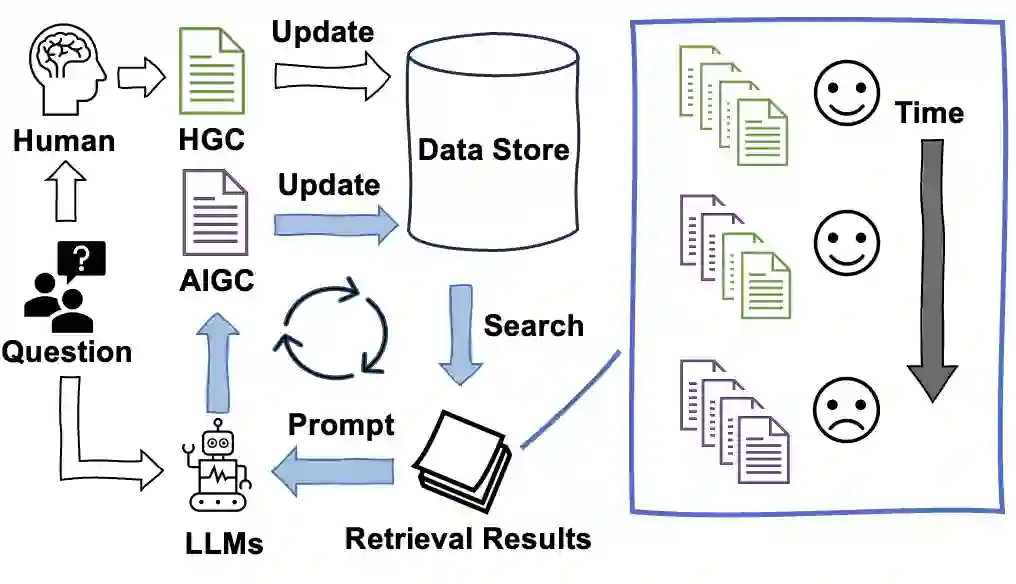

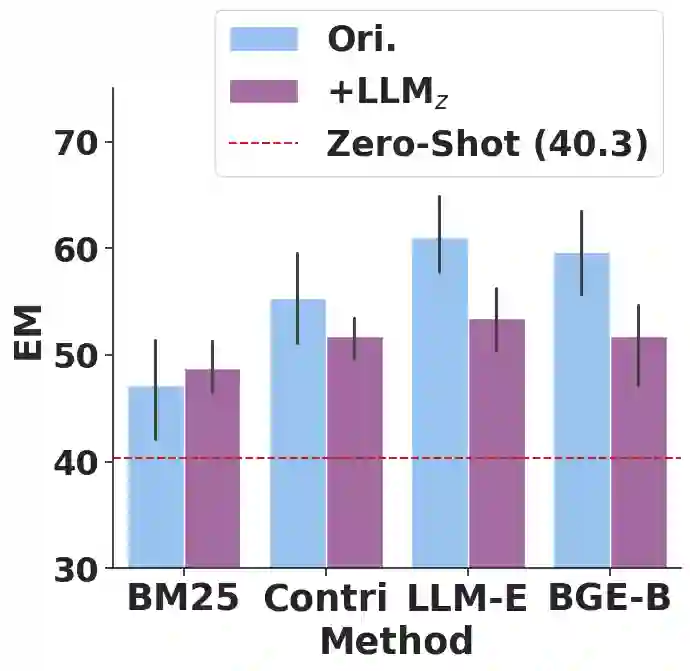

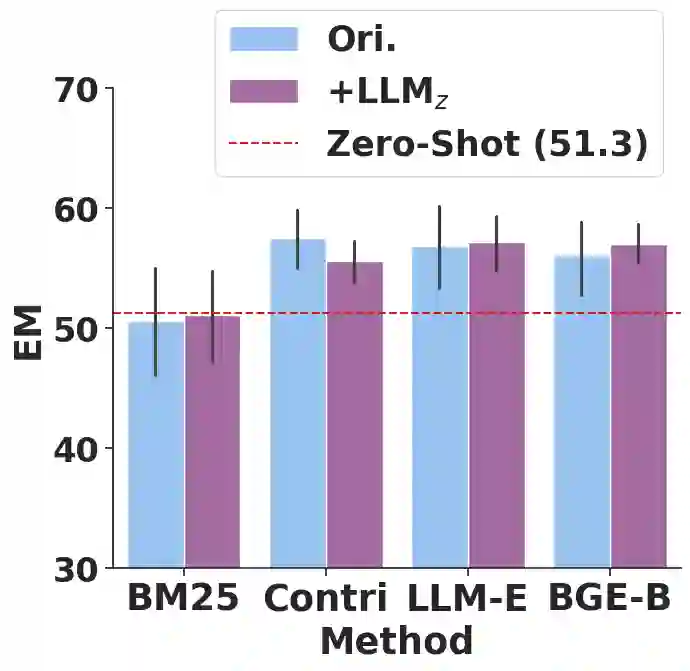

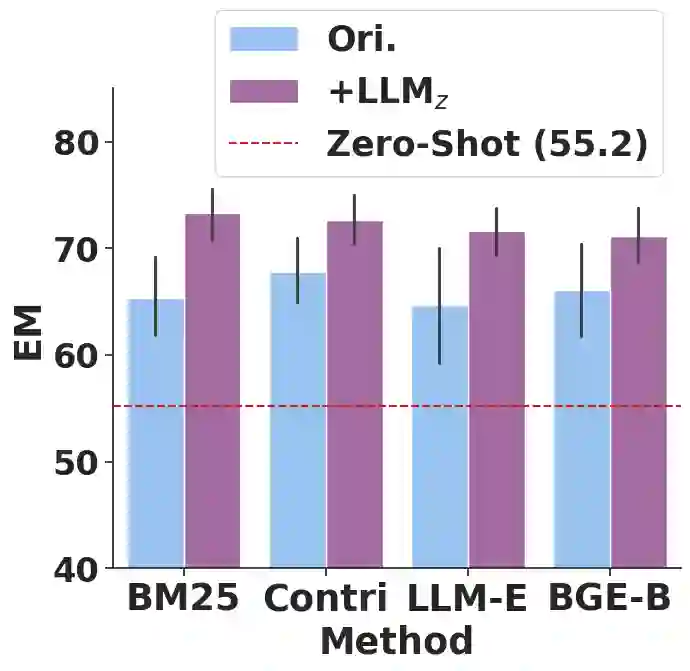

Retrieval-Augmented Generation (RAG), which integrates Large Language Models (LLMs) with retrieval systems, has become increasingly prevalent. However, the consequences of LLM-generated content entering the web and influencing the retrieval-generation feedback loop remain largely unexplored. In this study, we construct and iteratively run a simulation pipeline to investigate the short-term and long-term effects of LLM text on RAG systems. Using the Open Domain Question Answering (ODQA) task as a case study, our findings reveal a potential digital "Spiral of Silence" effect: LLM-generated text consistently outranks human-authored content in search results, which diminishes the presence and impact of human contributions online. This trend risks creating an imbalanced information ecosystem in which the unchecked proliferation of erroneous LLM-generated content may marginalize accurate information. We urge the academic community to take heed of this potential issue in order to preserve a diverse and authentic digital information landscape.

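To make the retrieval-generation feedback loop concrete, here is a minimal toy sketch in Python. It is not the pipeline used in the study: the bag-of-words retriever, the `stub_generate` function (a hypothetical stand-in for an LLM), and all names and parameters are assumptions made for illustration. Because the stub simply restates the query in front of the top retrieved passage, generated text is favored by construction, so any rise in its share here illustrates the loop mechanics and is not evidence for the reported effect.

```python
import math
from collections import Counter


def tokenize(text):
    """Lowercase whitespace tokenizer (a deliberate simplification)."""
    return text.lower().split()


def score(query, doc_text):
    """Cosine similarity between bag-of-words vectors (stand-in retriever)."""
    q, d = Counter(tokenize(query)), Counter(tokenize(doc_text))
    dot = sum(q[t] * d[t] for t in q)
    norm = math.sqrt(sum(v * v for v in q.values())) * math.sqrt(
        sum(v * v for v in d.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query, corpus, k):
    """Return the k highest-scoring documents for the query."""
    ranked = sorted(corpus, key=lambda doc: score(query, doc["text"]), reverse=True)
    return ranked[:k]


def stub_generate(query, contexts):
    """Hypothetical LLM stand-in: restates the query plus the top passage."""
    return f"{query} {contexts[0]['text']}"


def simulate(queries, human_docs, rounds, k=2):
    """Run the loop and record, per round, the share of top-k results
    that were generated in earlier rounds rather than human-written."""
    corpus = [{"text": t, "source": "human"} for t in human_docs]
    history = []
    for _ in range(rounds):
        new_docs, llm_hits, total = [], 0, 0
        for q in queries:
            top = retrieve(q, corpus, k)
            llm_hits += sum(d["source"] == "llm" for d in top)
            total += len(top)
            new_docs.append({"text": stub_generate(q, top), "source": "llm"})
        history.append(llm_hits / total)
        # Generated answers are written back into the corpus after each round.
        corpus.extend(new_docs)
    return history


if __name__ == "__main__":
    shares = simulate(
        queries=["who wrote hamlet", "capital of france"],
        human_docs=[
            "hamlet was written by william shakespeare",
            "paris is the capital of france",
        ],
        rounds=3,
    )
    print(shares)
```

The first recorded share is always zero because the corpus starts out entirely human-written. A more faithful experiment would swap in a real retriever and a real generator, and would also track answer correctness, which this sketch ignores.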