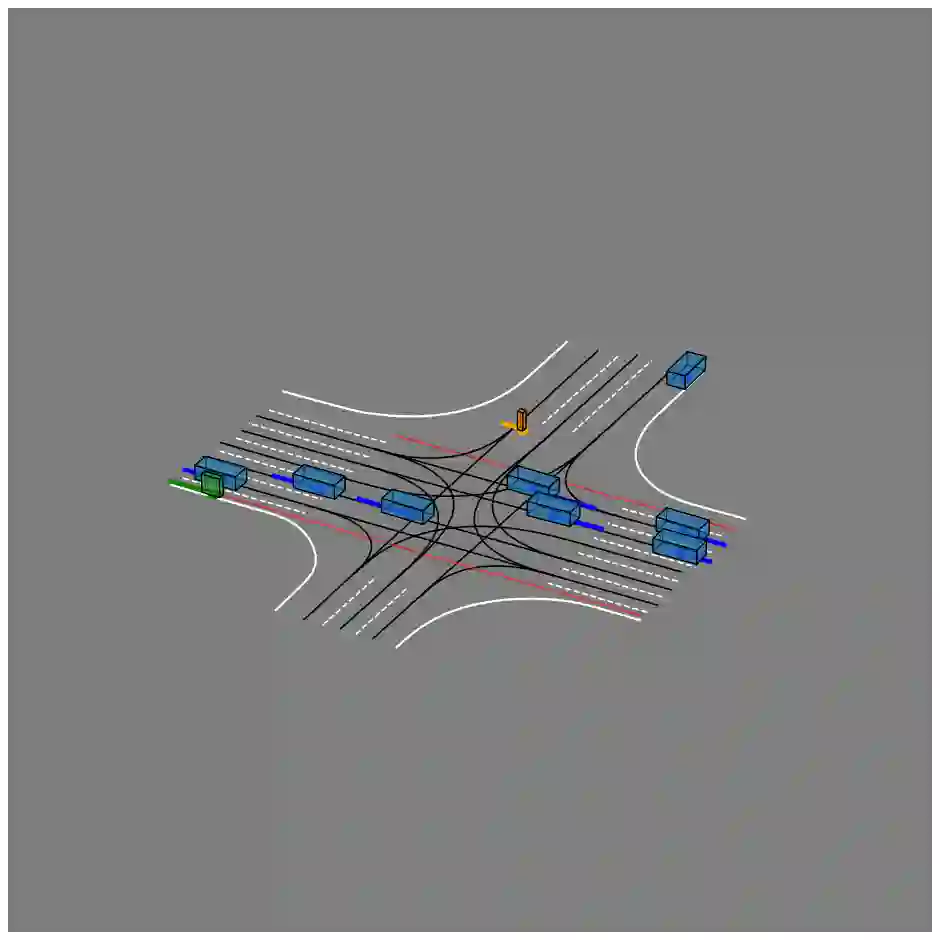

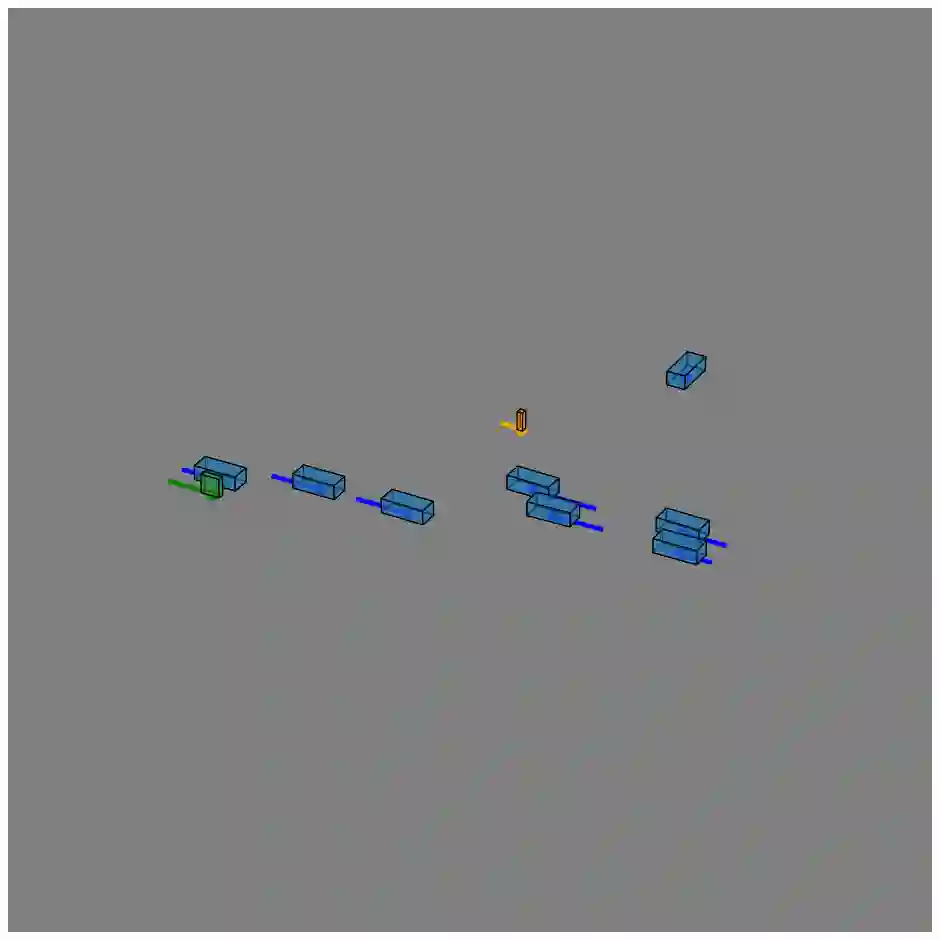

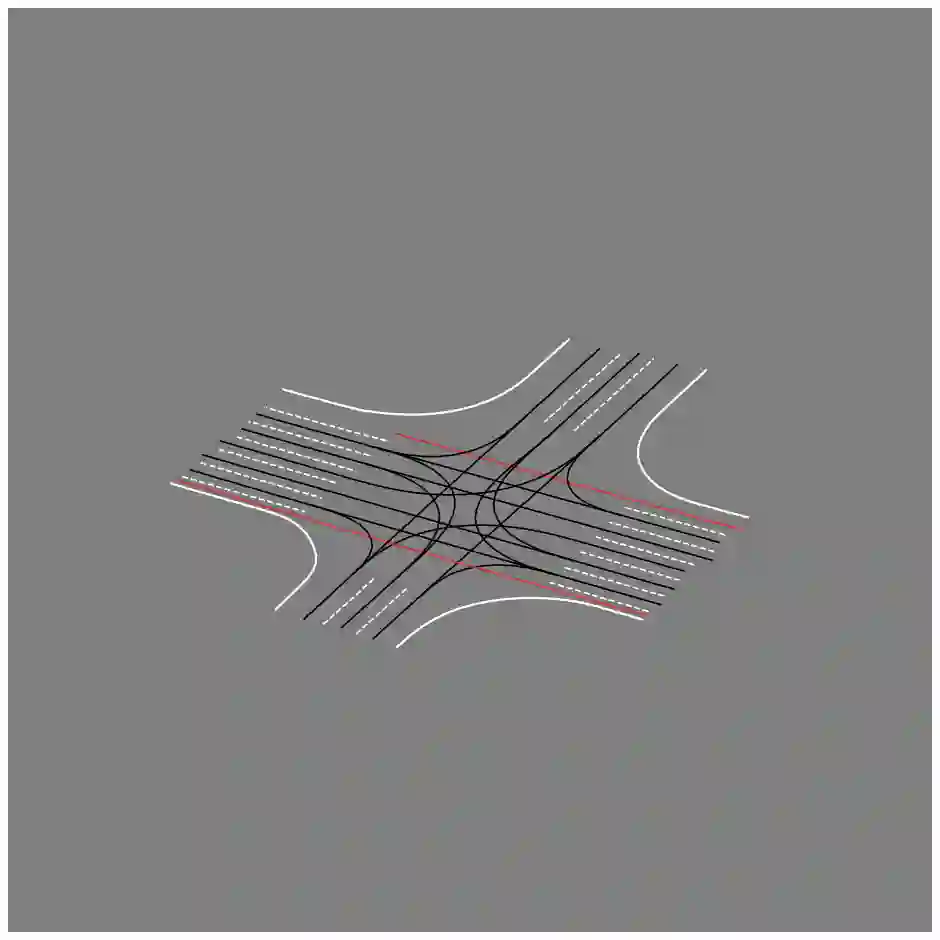

We present JointMotion, a self-supervised learning method for joint motion prediction in autonomous driving. Our method combines a scene-level objective that connects motion and environments with an instance-level objective that refines the learned representations. Our evaluations show that these objectives are complementary and that, as pre-training for joint motion prediction, they outperform recent contrastive and autoencoding methods. Furthermore, JointMotion adapts to all common types of environment representations used for motion prediction (i.e., agent-centric, scene-centric, and pairwise relative), and it enables effective transfer learning between the Waymo Open Motion and the Argoverse 2 Forecasting datasets. Notably, our method reduces the joint final displacement error of Wayformer, Scene Transformer, and HPTR by 3%, 7%, and 11%, respectively.
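The joint final displacement error (FDE) quoted above scores all agents in a scene together: each of the K predicted joint modes is evaluated as a whole, and only then is the best mode selected. The pure-Python sketch below illustrates this under the assumption of a common formulation; the exact benchmark definitions on Waymo Open Motion and Argoverse 2 differ in details such as the handling of invalid agents. The function names `joint_fde` and `marginal_fde` are hypothetical and are not part of either benchmark's tooling. For contrast, the marginal variant picks the best mode separately for each agent, which is why it is never larger than the joint score.

```python
import math
from typing import Sequence, Tuple

Point = Tuple[float, float]


def joint_fde(predicted_final: Sequence[Sequence[Point]],
              ground_truth_final: Sequence[Point]) -> float:
    """Hypothetical helper: minimum joint FDE over K joint modes.

    predicted_final[k][n] is the predicted final (x, y) position of agent n
    in joint mode k; ground_truth_final[n] is agent n's true final position.
    """
    num_agents = len(ground_truth_final)
    if num_agents == 0 or not predicted_final:
        raise ValueError("need at least one agent and one joint mode")
    best = math.inf
    for mode in predicted_final:
        if len(mode) != num_agents:
            raise ValueError("every joint mode must predict all agents")
        # Average the Euclidean final-step error over all agents in this mode,
        # so the whole scene must be predicted consistently by one mode.
        mean_error = sum(
            math.hypot(px - gx, py - gy)
            for (px, py), (gx, gy) in zip(mode, ground_truth_final)
        ) / num_agents
        best = min(best, mean_error)
    return best


def marginal_fde(predicted_final: Sequence[Sequence[Point]],
                 ground_truth_final: Sequence[Point]) -> float:
    """Hypothetical helper: per-agent minimum FDE, averaged over agents."""
    num_agents = len(ground_truth_final)
    if num_agents == 0 or not predicted_final:
        raise ValueError("need at least one agent and one joint mode")
    total = 0.0
    for n, (gx, gy) in enumerate(ground_truth_final):
        # Each agent may pick a different mode, ignoring scene consistency.
        total += min(math.hypot(mode[n][0] - gx, mode[n][1] - gy)
                     for mode in predicted_final)
    return total / num_agents


if __name__ == "__main__":
    ground_truth = [(0.0, 0.0), (10.0, 0.0)]
    modes = [
        [(1.0, 0.0), (10.0, 5.0)],  # mode 0: errors 1 and 5, mean 3
        [(0.0, 4.0), (10.0, 0.0)],  # mode 1: errors 4 and 0, mean 2
    ]
    print(joint_fde(modes, ground_truth))     # 2.0
    print(marginal_fde(modes, ground_truth))  # 0.5
```

In this toy scene, no single mode gets both agents right, so the joint score (2.0) is four times the marginal score (0.5). This gap is what joint motion prediction, and pre-training aimed at it, tries to close.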

翻译:我们提出JointMotion,一种用于自动驾驶中联合运动预测的自监督学习方法。该方法包含一个连接运动与环境的场景级目标函数,以及一个用于细化所学表征的实例级目标函数。我们的评估表明,这两个目标函数具有互补性,且作为联合运动预测的预训练方法,其性能优于近期对比学习与自编码方法。此外,JointMotion能适应运动预测中所有常见的环境表征类型(即智能体中心、场景中心和成对相对表征),并能实现Waymo开放运动数据集与Argoverse 2预测数据集之间的有效迁移学习。值得注意的是,该方法分别将Wayformer、Scene Transformer和HPTR的联合最终位移误差降低了3%、7%和11%。