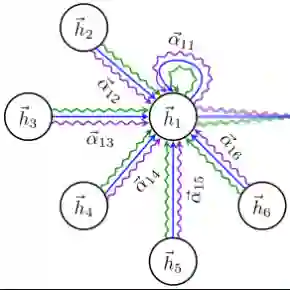

Oversmoothing in Graph Neural Networks (GNNs) refers to the phenomenon where increasing network depth leads to homogeneous node representations. While previous work has established that Graph Convolutional Networks (GCNs) exponentially lose expressive power, it remains controversial whether the graph attention mechanism can mitigate oversmoothing. In this work, we provide a definitive answer to this question through a rigorous mathematical analysis, by viewing attention-based GNNs as nonlinear time-varying dynamical systems and incorporating tools and techniques from the theory of products of inhomogeneous matrices and the joint spectral radius. We establish that, contrary to popular belief, the graph attention mechanism cannot prevent oversmoothing and loses expressive power exponentially. The proposed framework extends the existing results on oversmoothing for symmetric GCNs to a significantly broader class of GNN models, including random walk GCNs, Graph Attention Networks (GATs) and (graph) transformers. In particular, our analysis accounts for asymmetric, state-dependent and time-varying aggregation operators and a wide range of common nonlinear activation functions, such as ReLU, LeakyReLU, GELU and SiLU.
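The homogenization described above can be seen in a toy setting. The following is a minimal sketch, not the paper's construction. It assumes a 6-node ring graph with self-loops, scalar node features, no weight matrices or activations, and a hypothetical state-dependent attention score `x[i] * x[j]` normalized by a softmax over each node's neighborhood. The "spread" (maximum minus minimum feature value) is used here only as an illustrative stand-in for a node-similarity measure.

```python
import math


def attention_layer(x, neighbors):
    """One aggregation step: each node takes a softmax-weighted average
    of the features in its neighborhood (row-stochastic, state-dependent)."""
    new_x = []
    for i, nbrs in enumerate(neighbors):
        # Hypothetical attention score; it depends on the current features.
        scores = [x[i] * x[j] for j in nbrs]
        top = max(scores)  # subtract the max for a numerically stable softmax
        weights = [math.exp(s - top) for s in scores]
        total = sum(weights)
        new_x.append(sum(w * x[j] for w, j in zip(weights, nbrs)) / total)
    return new_x


def spread(x):
    """Illustrative similarity measure: zero iff all node features coincide."""
    return max(x) - min(x)


# Ring graph on 6 nodes; every neighborhood includes the node itself.
n = 6
neighbors = [[(i - 1) % n, i, (i + 1) % n] for i in range(n)]

# Arbitrary initial scalar features (assumed for illustration).
x = [1.0, -1.0, 0.5, -0.5, 0.8, -0.2]

spreads = [spread(x)]
for _ in range(50):
    x = attention_layer(x, neighbors)
    spreads.append(spread(x))

for depth in (0, 10, 20, 30, 40, 50):
    print(f"depth {depth:2d}: spread = {spreads[depth]:.3e}")
```

Because each layer replaces every feature with a convex combination of neighboring features, the spread can never grow, and on a connected graph with strictly positive attention weights it keeps shrinking as depth increases. The attention weights change from layer to layer and from node to node, yet the features still collapse toward a common value, which is the qualitative behavior the analysis addresses for far more general models.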
