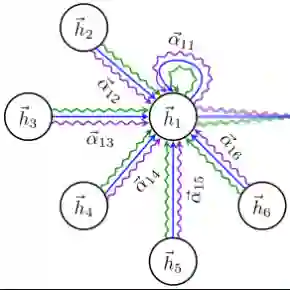

Automatic sign language (SL) recognition is an important task in the computer vision community. Building a robust SL recognition system requires a considerable amount of data, which is lacking for Indian Sign Language (ISL) in particular. In this paper, we introduce a large-scale isolated ISL dataset and a novel SL recognition model based on the skeleton graph structure. The dataset covers 2002 common words used daily in the deaf community, recorded by 20 deaf adult signers (10 male and 10 female), and contains 40033 videos. We propose an SL recognition model, the Hierarchical Windowed Graph Attention Network (HWGAT), which uses the human upper-body skeleton graph. HWGAT aims to capture distinctive motions by attending to different body parts, as induced by the human skeleton graph. We evaluate the utility of the proposed dataset and the effectiveness of our model through extensive experiments. We pre-train the proposed model on the presented dataset and fine-tune it on other sign language datasets, which improves performance by 1.10, 0.46, 0.78, and 6.84 percentage points on INCLUDE, LSA64, AUTSL, and WLASL, respectively, compared to existing state-of-the-art keypoint-based models.
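The abstract gives no architectural details for HWGAT, so the following is only a rough illustration of the general idea of attention restricted to body-part windows of a skeleton graph. It is not the authors' implementation. The function name `windowed_graph_attention`, the six-joint toy skeleton, the two arm windows, and the use of unparameterized dot-product attention are all assumptions made for illustration.

```python
import numpy as np


def windowed_graph_attention(x, adjacency, windows):
    """Toy attention restricted to skeleton edges inside each body-part window.

    x:         (J, D) float array of per-joint features.
    adjacency: (J, J) 0/1 array, symmetric skeleton adjacency (hypothetical layout).
    windows:   list of disjoint joint-index lists, one per body part (assumed partition).
    """
    _, dim = x.shape
    out = np.zeros_like(x, dtype=float)
    for joints in windows:
        idx = np.asarray(joints)
        feats = x[idx].astype(float)                      # (W, D) features of this window
        scores = feats @ feats.T / np.sqrt(dim)           # (W, W) scaled dot-product scores
        # Keep only skeleton edges inside the window, plus self-loops.
        mask = adjacency[np.ix_(idx, idx)] + np.eye(len(idx))
        scores = np.where(mask > 0, scores, -np.inf)
        # Row-wise softmax; self-loops guarantee one finite score per row.
        scores = scores - scores.max(axis=1, keepdims=True)
        weights = np.exp(scores)
        weights = weights / weights.sum(axis=1, keepdims=True)
        out[idx] = weights @ feats                        # aggregate neighbours per joint
    return out


# Hypothetical upper-body toy skeleton:
# 0-1-2 = left shoulder/elbow/wrist, 3-4-5 = right shoulder/elbow/wrist.
edges = [(0, 1), (1, 2), (3, 4), (4, 5), (0, 3)]
adjacency = np.zeros((6, 6))
for a, b in edges:
    adjacency[a, b] = adjacency[b, a] = 1.0

windows = [[0, 1, 2], [3, 4, 5]]                          # left arm, right arm
rng = np.random.default_rng(0)
features = rng.normal(size=(6, 4))
attended = windowed_graph_attention(features, adjacency, windows)
```

In this sketch each joint only aggregates features from its skeleton neighbours within the same window, so the shoulder-to-shoulder edge `(0, 3)` is ignored. A hierarchical model would presumably add a later stage that mixes information across windows, which is omitted here.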
