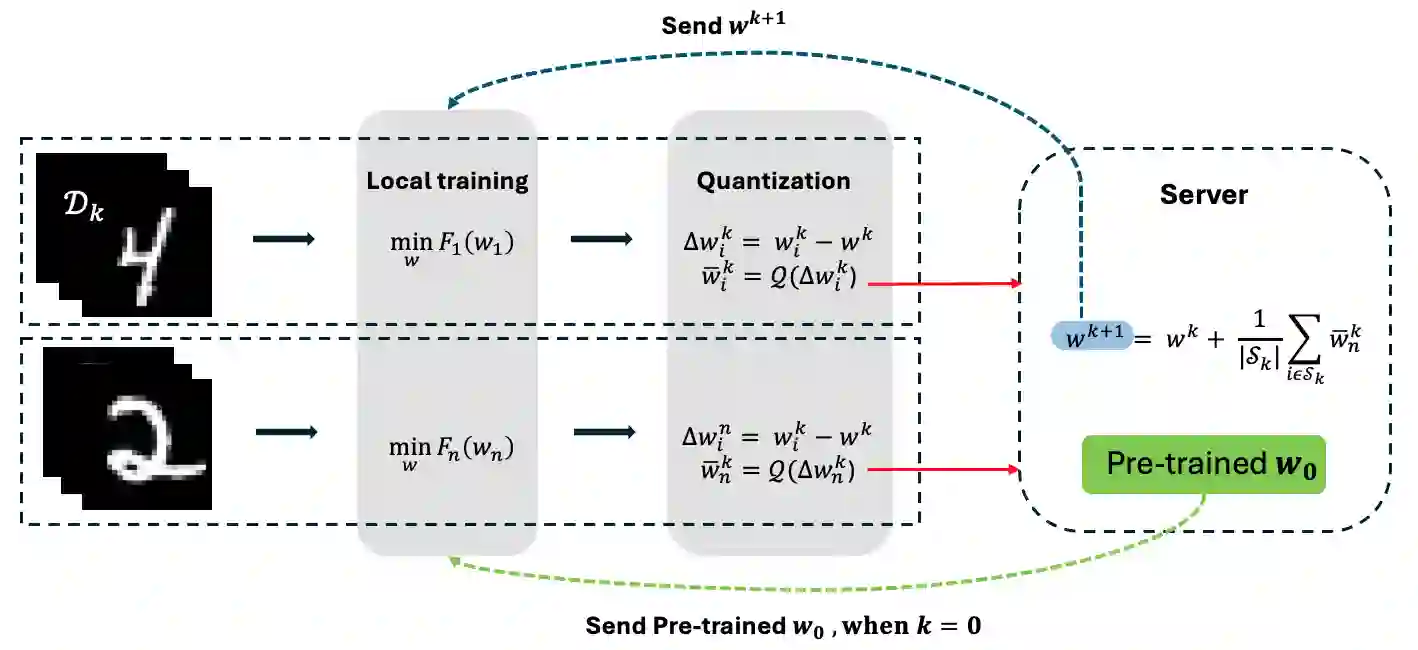

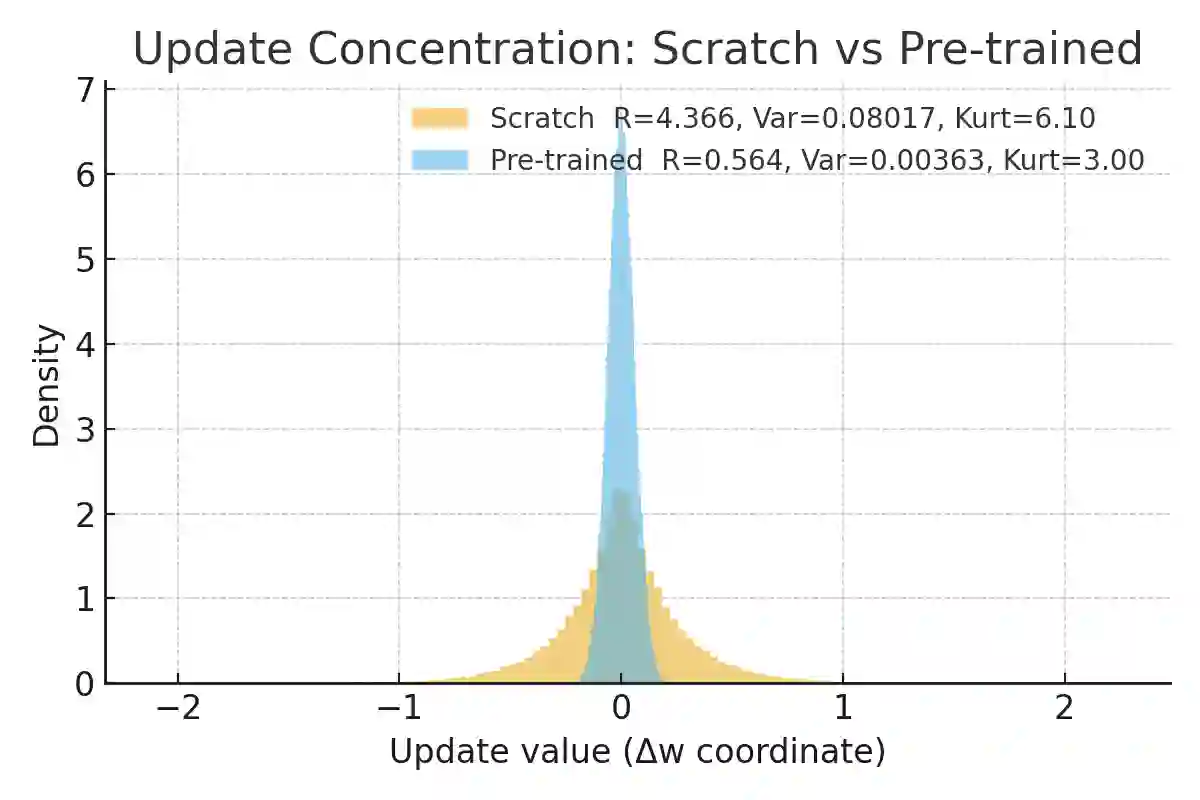

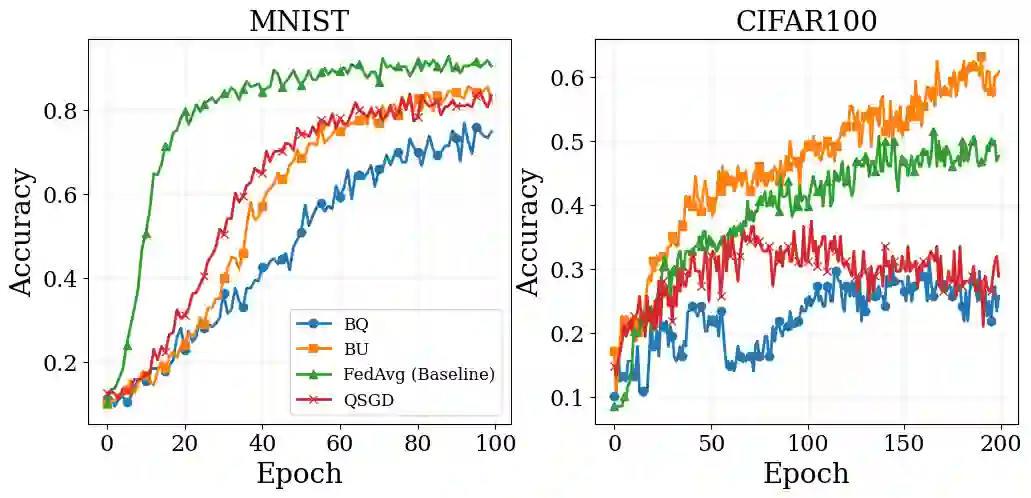

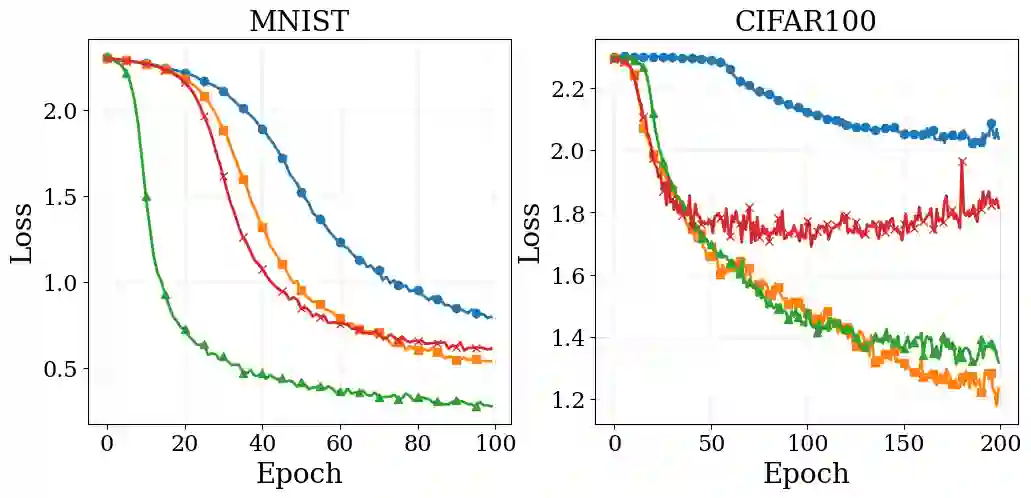

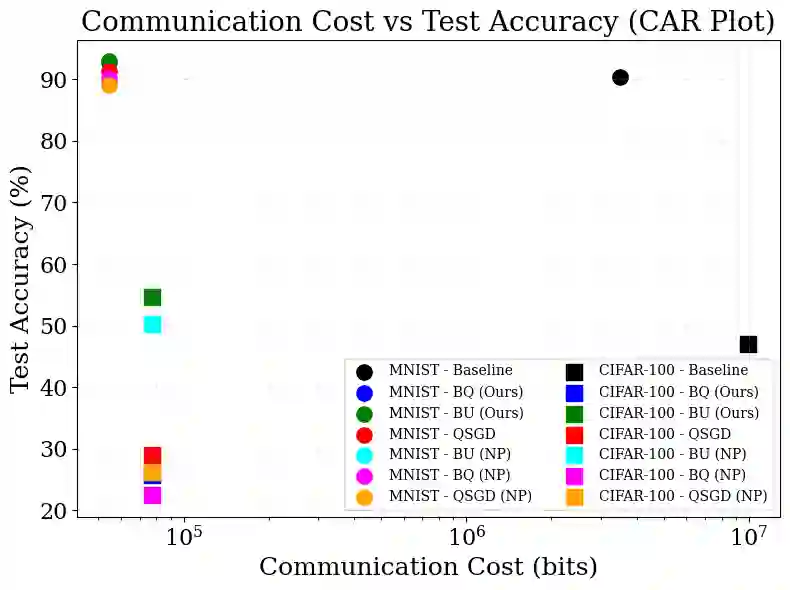

Federated Learning (FL) enables privacy-preserving intelligence on Internet of Things (IoT) devices, but it incurs a significant carbon footprint because frequent uplink transmission is energy-intensive. Although pre-trained models are increasingly available on edge devices, their potential to reduce the energy overhead of fine-tuning remains underexplored. In this work, we propose QuantFL, a sustainable FL framework that leverages pre-trained initialisation to enable aggressive, computationally lightweight quantisation. We demonstrate that pre-training naturally concentrates update statistics, which allows us to use memory-efficient bucket quantisation without the energy-intensive overhead of complex error-feedback mechanisms. On MNIST and CIFAR-100, QuantFL reduces total communication by about 40% when the downlink is kept at full precision, and by $\geq80\%$ on the uplink alone or when the downlink is also quantised, while matching or exceeding uncompressed baselines under strict bandwidth budgets; BU attains 89.00% (MNIST) and 66.89% (CIFAR-100) test accuracy with orders of magnitude fewer bits. We account for both uplink and downlink costs and provide ablations on quantisation levels and initialisation. QuantFL delivers a practical, "green" recipe for scalable training on battery-constrained IoT networks.
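To make the relationship between the uplink and total-bit figures concrete, the following is a minimal sketch of a generic uniform bucket quantiser together with per-parameter bit accounting. It is not the QuantFL implementation: the function names, the min/max bucketing rule, the 32-bit full-precision baseline, and the assumption that uplink and downlink carry the same number of parameters are all illustrative choices that the abstract does not specify. The accounting also ignores the two scalars (`lo`, `hi`) sent alongside each quantised update.

```python
import math


def bucket_quantise(update, num_levels):
    """Map each value to the nearest of num_levels evenly spaced levels.

    Hypothetical helper: a plain min/max uniform quantiser, assumed here
    only for illustration.
    """
    if num_levels < 2:
        raise ValueError("num_levels must be at least 2")
    lo, hi = min(update), max(update)
    if hi == lo:
        # Degenerate case: every value is identical, one level suffices.
        return [0] * len(update), lo, hi
    step = (hi - lo) / (num_levels - 1)
    codes = [round((v - lo) / step) for v in update]
    return codes, lo, hi


def dequantise(codes, lo, hi, num_levels):
    """Reconstruct approximate values from integer codes."""
    if hi == lo:
        return [lo] * len(codes)
    step = (hi - lo) / (num_levels - 1)
    return [lo + c * step for c in codes]


def bits_per_code(num_levels):
    """Bits needed to index one of num_levels levels."""
    return math.ceil(math.log2(num_levels))


def total_bit_reduction(uplink_bits, downlink_bits=32, full_bits=32):
    """Fraction of round-trip bits saved per parameter.

    Assumes uplink and downlink carry equally many parameters and that
    the uncompressed baseline uses full_bits in both directions.
    """
    return 1 - (uplink_bits + downlink_bits) / (2 * full_bits)
```

Under these assumptions, 16 levels need 4 bits per parameter, so the uplink alone shrinks by 1 - 4/32 = 87.5%. With a full-precision downlink, `total_bit_reduction(4)` gives 0.4375, because the untouched downlink accounts for half of the traffic; quantising both directions, `total_bit_reduction(4, 4)`, gives 0.875. This is one way to read why an uplink saving of 80% or more corresponds to a total saving of roughly 40%.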
