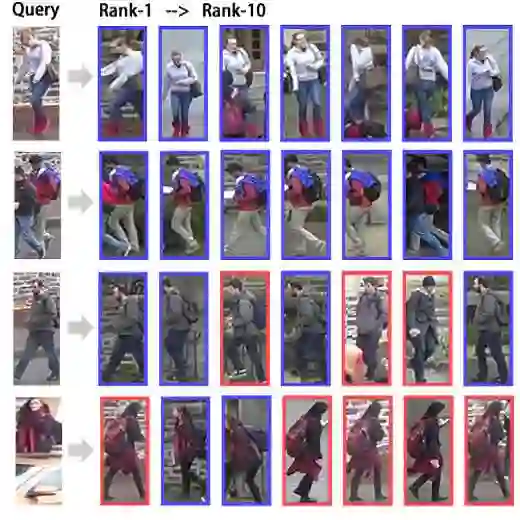

Text-based Person Retrieval (TPR) aims to retrieve the person images that match a given text query. Improving TPR models depends on high-quality data for supervised training, but constructing a large-scale, high-quality TPR dataset is difficult because annotation is expensive and privacy must be protected. Recently, Large Language Models (LLMs) have approached or even surpassed human performance on many NLP tasks, which makes it possible to expand high-quality TPR datasets. This paper proposes an LLM-based Data Augmentation (LLM-DA) method for TPR. LLM-DA uses LLMs to rewrite the text in an existing TPR dataset, expanding the dataset concisely and efficiently while keeping its quality high. The rewritten texts increase the diversity of vocabulary and sentence structure while retaining the original key concepts and semantic information. To mitigate LLM hallucinations, LLM-DA introduces a Text Faithfulness Filter (TFF) that filters out unfaithful rewritten text. To balance the contributions of original and augmented text, a Balanced Sampling Strategy (BSS) controls the proportion of each used for training. LLM-DA is a plug-and-play method that can be easily integrated into various TPR models. Comprehensive experiments on three TPR benchmarks show that LLM-DA improves the retrieval performance of current TPR models.
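
The abstract does not specify how TFF scores faithfulness or how BSS sets its proportion, so the following is only a minimal sketch of the two ideas under stated assumptions. The token-overlap score `jaccard_similarity`, the `threshold` value, and the `p_original` parameter are all hypothetical stand-ins chosen for illustration, not the paper's actual components.

```python
import random
from typing import Callable, Optional


def jaccard_similarity(a: str, b: str) -> float:
    """Token-overlap score in [0, 1].

    Assumption: a simple stand-in for a faithfulness measure;
    the paper's actual scoring function is not given in the abstract.
    """
    tokens_a, tokens_b = set(a.lower().split()), set(b.lower().split())
    if not tokens_a and not tokens_b:
        return 1.0
    return len(tokens_a & tokens_b) / len(tokens_a | tokens_b)


def faithfulness_filter(
    original: str,
    rewritten: str,
    score_fn: Callable[[str, str], float] = jaccard_similarity,
    threshold: float = 0.3,  # hypothetical cutoff
) -> Optional[str]:
    """Keep the rewritten text only if it scores at or above the threshold."""
    return rewritten if score_fn(original, rewritten) >= threshold else None


def balanced_sample(
    original: str,
    augmented: Optional[str],
    p_original: float = 0.5,  # hypothetical proportion of original text
    rng: random.Random = random.Random(0),
) -> str:
    """Pick the original text with probability p_original, else the augmented one.

    Falls back to the original when no faithful rewrite survived the filter.
    """
    if augmented is None or rng.random() < p_original:
        return original
    return augmented


if __name__ == "__main__":
    caption = "a man wearing a red jacket and blue jeans"
    rewrite = "a man in a red jacket and blue jeans"
    kept = faithfulness_filter(caption, rewrite)
    # During training, each step would draw one of the two texts for the image.
    print(balanced_sample(caption, kept))
```

Because both functions operate only on text, a sketch like this could sit in a data loader in front of any TPR model, which is consistent with the plug-and-play claim. In practice, a learned text-similarity model would likely replace the token-overlap score.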
