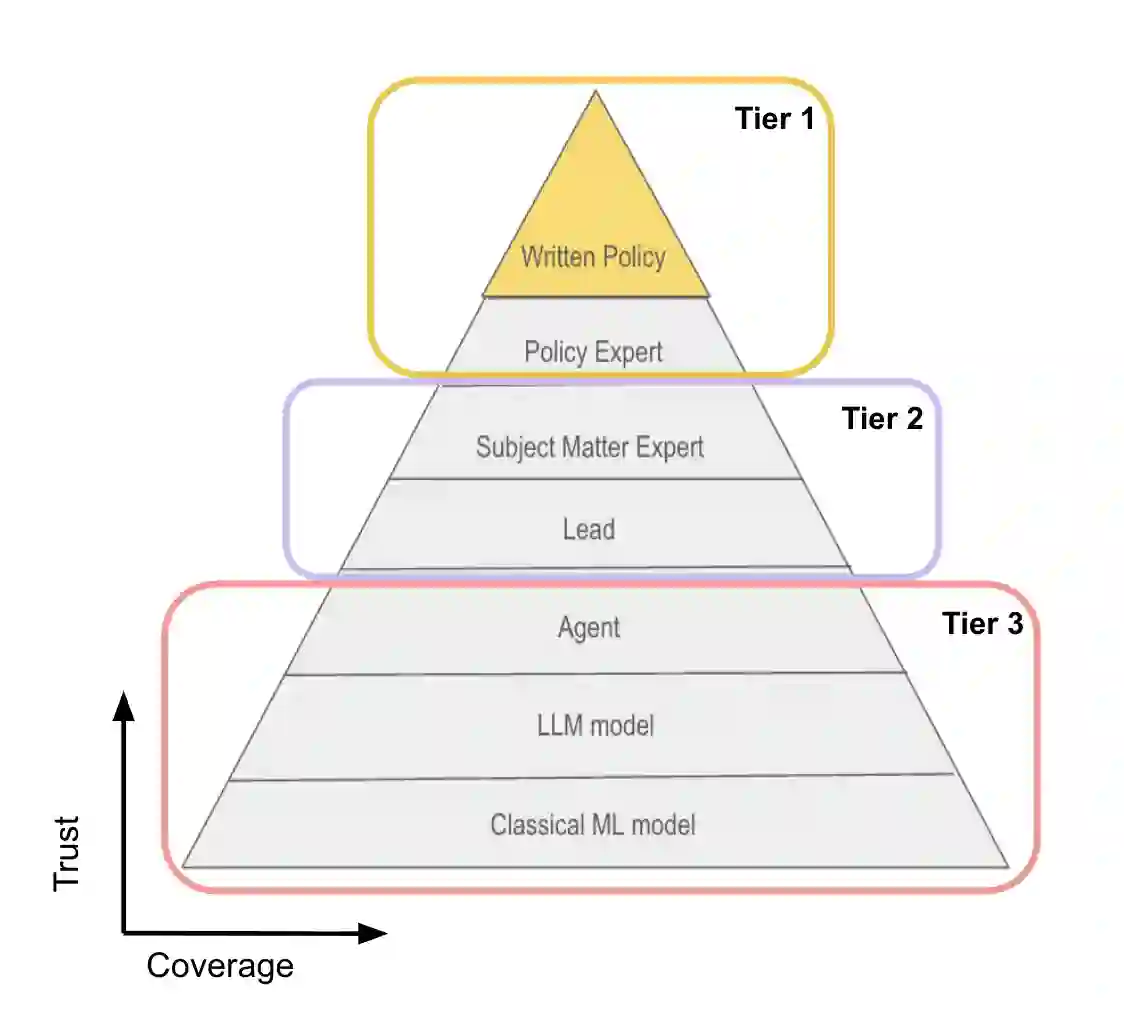

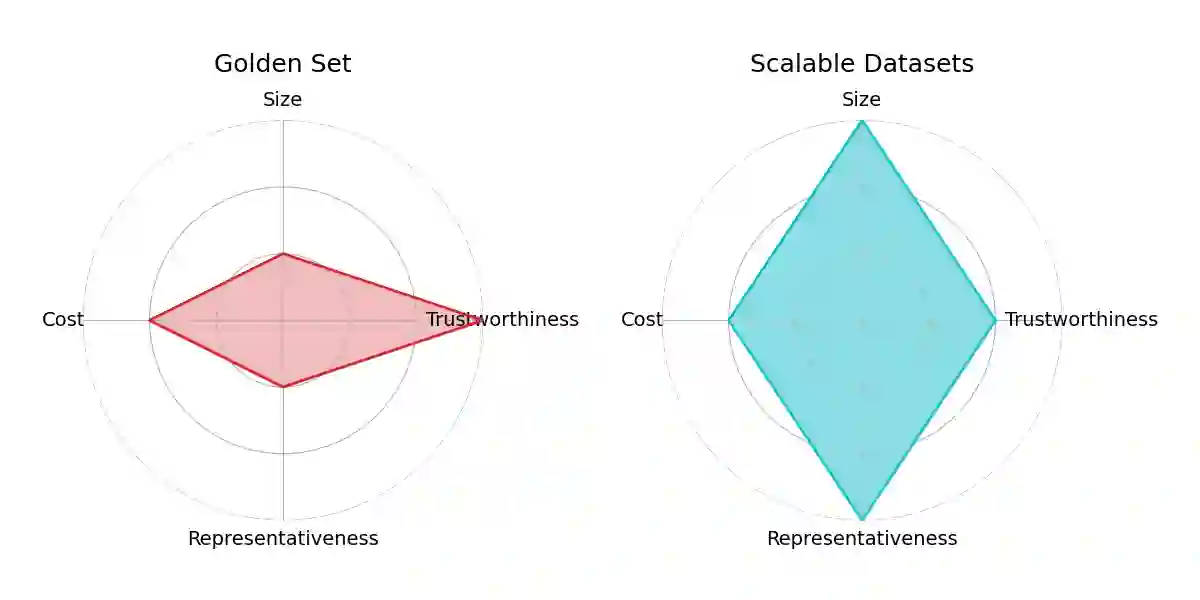

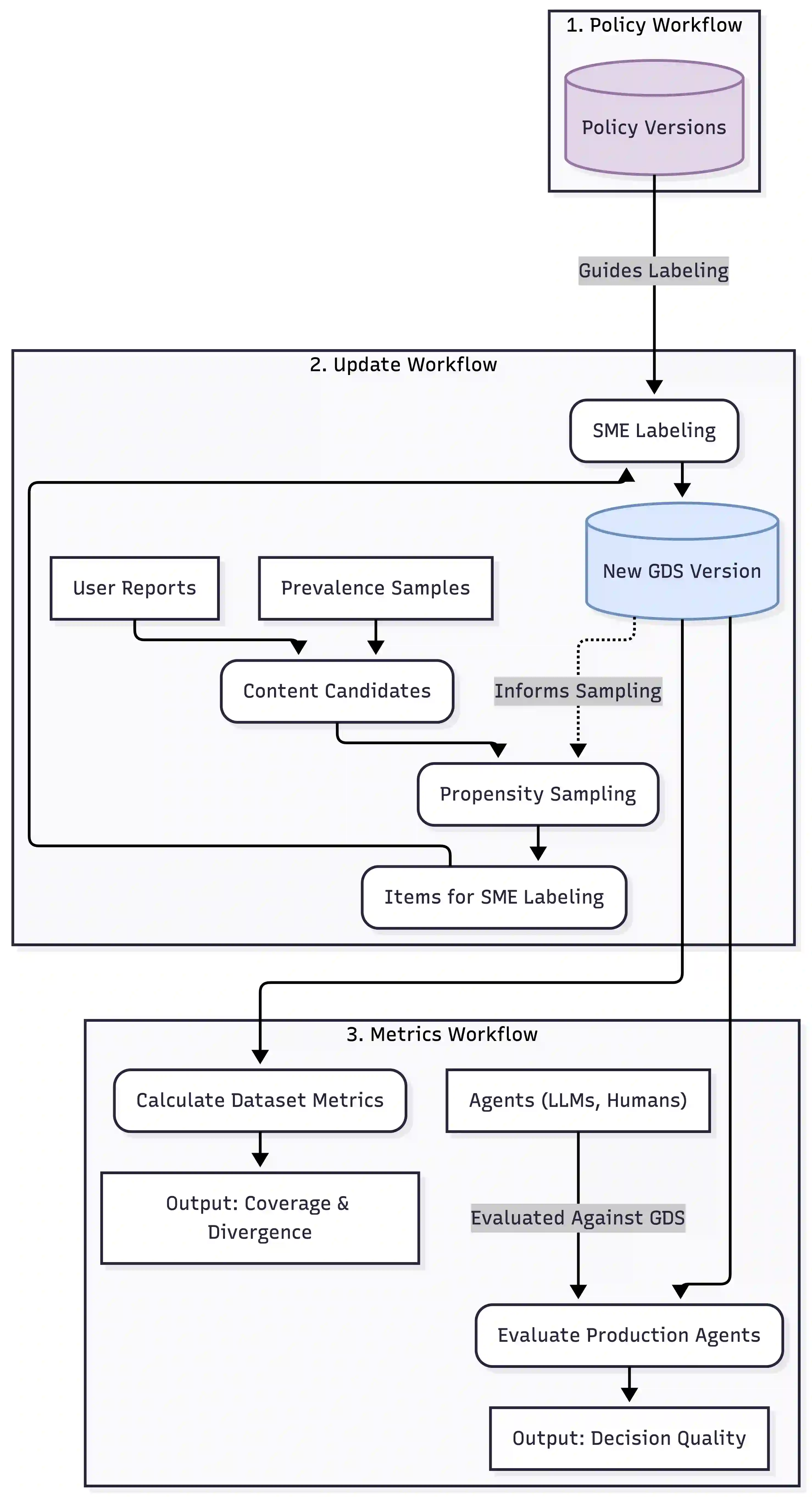

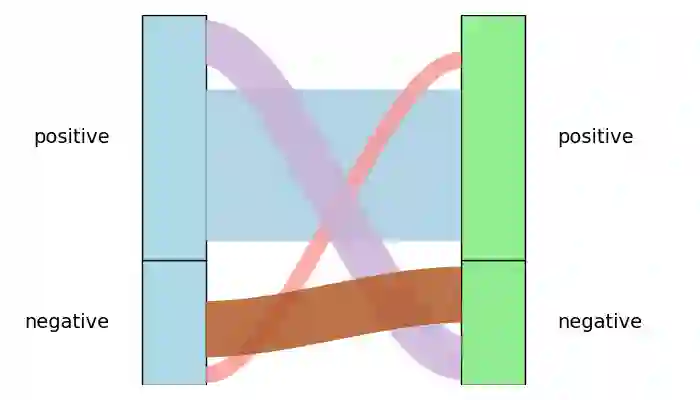

Online platforms require robust systems to enforce content safety policies at scale. A critical component of these systems is the ability to evaluate the quality of moderation decisions made by both human agents and Large Language Models (LLMs). This evaluation is challenging because of the inherent trade-offs among cost, scale, and trustworthiness, compounded by the complexity of evolving policies. To address this, we present a comprehensive Decision Quality Evaluation Framework developed and deployed at Pinterest. The framework is centered on a high-trust Golden Dataset (GDS) curated by subject matter experts (SMEs), which serves as a ground-truth benchmark. We introduce an automated intelligent sampling pipeline that uses propensity scores to efficiently expand dataset coverage. We demonstrate the framework's practical application in several key areas: benchmarking the cost-performance trade-offs of various LLM agents, establishing a rigorous methodology for data-driven prompt optimization, managing complex policy evolution, and ensuring the integrity of policy content prevalence metrics through continuous validation. The framework enables a shift from subjective assessments to a data-driven, quantitative practice for managing content safety systems.
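The abstract names propensity-score sampling without describing it. The following is a minimal sketch of one common way such scores can drive coverage expansion, not Pinterest's actual pipeline. It assumes each unlabeled production item already carries a score estimating how likely it is to resemble content already in the GDS (for example, from a classifier trained to separate GDS items from production traffic). Low-propensity items are underrepresented in the GDS, so sampling them with weight proportional to the inverse propensity steers SME labeling toward gaps in coverage. `Candidate`, `coverage_sample`, and the `floor` parameter are hypothetical names introduced for illustration.

```python
import random
from dataclasses import dataclass


@dataclass
class Candidate:
    item_id: str
    # Hypothetical: estimated P(item resembles existing GDS content), expected in [0, 1].
    propensity: float


def coverage_sample(candidates, k, floor=0.01, seed=0):
    """Draw up to k candidates without replacement, weighting each by 1 / propensity.

    Items that look unlike the current Golden Dataset (low propensity) are more
    likely to be selected for SME review. `floor` caps the weight of items whose
    propensity is zero or near zero so a single item cannot dominate the draw.
    """
    rng = random.Random(seed)
    pool = list(candidates)
    weights = [1.0 / max(c.propensity, floor) for c in pool]
    chosen = []
    for _ in range(min(k, len(pool))):
        # Weighted draw of one index, then remove it so it cannot be drawn again.
        idx = rng.choices(range(len(pool)), weights=weights, k=1)[0]
        chosen.append(pool.pop(idx))
        weights.pop(idx)
    return chosen


if __name__ == "__main__":
    items = [Candidate("a", 0.9), Candidate("b", 0.05), Candidate("c", 0.5)]
    picked = coverage_sample(items, k=2, seed=42)
    print([c.item_id for c in picked])
```

Inverse-propensity weighting is only one option. A production system would likely also record each item's selection probability so that metrics computed on the expanded set can be reweighted back to the production distribution.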