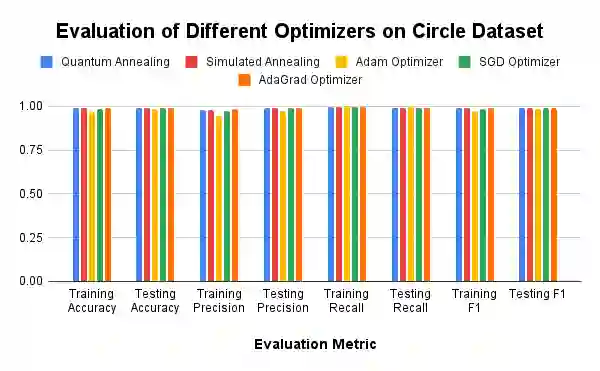

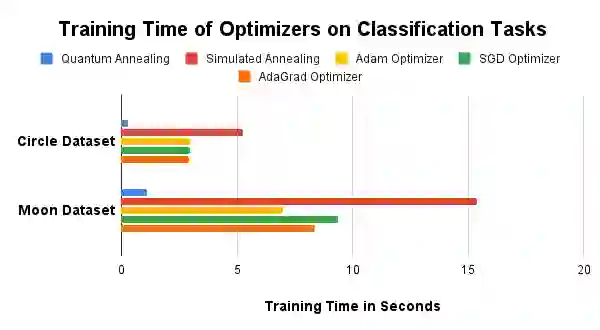

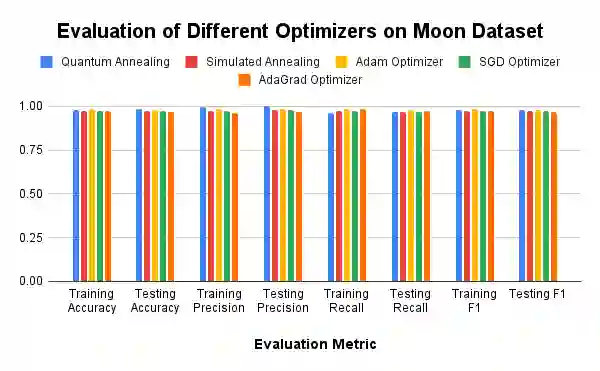

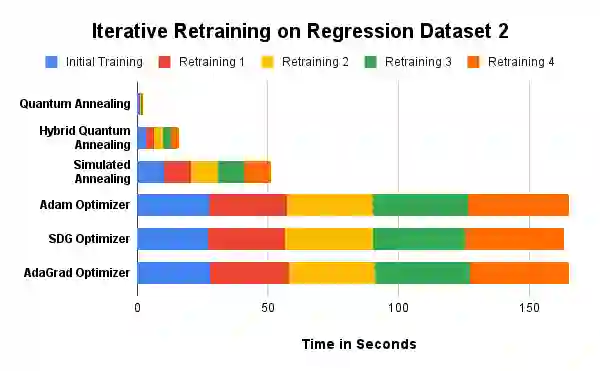

The advent of quantum computing holds the potential to revolutionize various fields by solving complex problems more efficiently than classical computers. Despite this promise, practical quantum advantage is hindered by current hardware limitations, notably the small number of qubits and high noise levels. In this study, we leverage adiabatic quantum computers to optimize Kolmogorov-Arnold Networks, a powerful neural network architecture for representing complex functions with minimal parameters. By modifying the network to use Bézier curves as the basis functions and formulating the optimization problem as a Quadratic Unconstrained Binary Optimization (QUBO) problem, we create a fixed-size solution space that is independent of the number of training samples. Our approach demonstrates sparks of quantum advantage through faster training times compared to classical optimizers such as Adam, Stochastic Gradient Descent, Adaptive Gradient, and simulated annealing. Additionally, we introduce a novel rapid retraining capability that enables the network to be retrained with new data without reprocessing old samples, thus enhancing learning efficiency in dynamic environments. Experimental results on initial training for classification and regression tasks validate the efficacy of our approach, showing significant speedups and performance comparable to classical methods. Experiments on retraining demonstrate a sixty-fold speedup for optimization based on adiabatic quantum computing over gradient-descent-based optimizers, and theoretical models indicate that this speedup could be even larger. Our findings suggest that with further advancements in quantum hardware and algorithm optimization, quantum-optimized machine learning models could have broad applications across various domains, with an initial focus on rapid retraining.
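
To illustrate why a Quadratic Unconstrained Binary Optimization formulation can have a size that does not depend on the number of training samples, the following is a minimal sketch and not the formulation used in this study. It assumes a single one-dimensional Bézier curve rather than a full Kolmogorov-Arnold Network, a squared-error loss, and a fixed-point binary encoding of the control points. The names `bernstein`, `QuboAccumulator`, and `brute_force_minimum` are hypothetical, and an exhaustive search stands in for the adiabatic quantum computer.

```python
from itertools import product
from math import comb


def bernstein(x, degree):
    """Bernstein basis values B_{i,degree}(x), i = 0..degree, for x in [0, 1]."""
    return [comb(degree, i) * x**i * (1 - x) ** (degree - i)
            for i in range(degree + 1)]


class QuboAccumulator:
    """Running sums for a least-squares fit of f(x) = sum_i c_i * B_i(x).

    Assumed encoding (illustrative only):
    c_i = lo + step * sum_k 2**k * q[i*bits + k], with every q binary.
    """

    def __init__(self, degree, bits, lo, hi):
        self.degree, self.bits, self.lo = degree, bits, lo
        self.step = (hi - lo) / (2**bits - 1)
        n = degree + 1
        self.G = [[0.0] * n for _ in range(n)]  # sum over samples of b(x) b(x)^T
        self.h = [0.0] * n                      # sum over samples of y * b(x)

    def add_samples(self, xs, ys):
        """Fold new samples into G and h; earlier samples are not revisited."""
        for x, y in zip(xs, ys):
            b = bernstein(x, self.degree)
            for i, bi in enumerate(b):
                self.h[i] += y * bi
                for j, bj in enumerate(b):
                    self.G[i][j] += bi * bj

    def to_qubo(self):
        """Symmetric Q with q^T Q q equal to the squared error up to a constant."""
        n, bits = self.degree + 1, self.bits
        w = [self.step * 2**k for k in range(bits)]
        size = n * bits
        Q = [[0.0] * size for _ in range(size)]
        for i in range(n):
            for k in range(bits):
                a = i * bits + k
                for j in range(n):
                    for l in range(bits):
                        Q[a][j * bits + l] = w[k] * self.G[i][j] * w[l]
                # Linear terms go on the diagonal because q * q == q for binary q.
                Q[a][a] += 2 * w[k] * (self.lo * sum(self.G[i]) - self.h[i])
        return Q

    def decode(self, q):
        """Map a binary assignment back to control-point values."""
        bits = self.bits
        return [self.lo + self.step * sum(2**k * q[i * bits + k] for k in range(bits))
                for i in range(self.degree + 1)]


def brute_force_minimum(Q):
    """Exhaustive stand-in for an annealer; only viable for a handful of bits."""
    n = len(Q)
    best_q, best_e = None, float("inf")
    for q in product((0, 1), repeat=n):
        e = sum(Q[a][b] * q[a] * q[b] for a in range(n) for b in range(n))
        if e < best_e:
            best_q, best_e = q, e
    return best_q


acc = QuboAccumulator(degree=2, bits=2, lo=0.0, hi=1.5)
acc.add_samples([0.0, 0.5, 1.0], [0.0, 0.5, 1.0])  # initial training data
Q_first = acc.to_qubo()
acc.add_samples([0.25, 0.75], [0.25, 0.75])        # retraining uses new data only
Q_second = acc.to_qubo()
print(len(Q_first), len(Q_second))                 # number of binary variables
print(acc.decode(brute_force_minimum(Q_second)))   # fitted control points
```

In this sketch the matrix has `(degree + 1) * bits` rows regardless of how many samples were added, and retraining only adds the new samples' contributions to `G` and `h`. Whether the study's own construction follows this pattern is an assumption here, not something the abstract states.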
