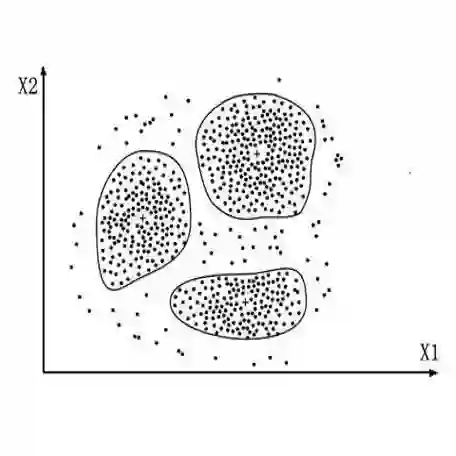

Text clustering is an important approach for organising the growing volume of digital content, helping to structure uncategorised data and uncover hidden patterns in it. In this research, we investigate how different textual embeddings, particularly those used in large language models (LLMs), and clustering algorithms affect how text datasets are clustered. A series of experiments was conducted to assess how embeddings influence clustering results, the role played by dimensionality reduction through summarisation, and the effect of adjusting embedding size. The results reveal that LLM embeddings excel at capturing the nuances of structured language, while BERT leads the lightweight options in performance. In addition, we find that increasing embedding dimensionality and applying summarisation techniques do not uniformly improve clustering efficiency, suggesting that these strategies require careful analysis before they are used in real-life models. These results highlight a complex balance between the need for nuanced text representation and computational feasibility in text clustering applications. This study extends traditional text clustering frameworks by incorporating embeddings from LLMs, thereby paving the way for improved methodologies and opening new avenues for future research in various types of textual analysis.
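To make the embed-then-cluster pipeline concrete, the following is a minimal sketch rather than the configuration used in the study. It assumes a toy bag-of-words vectoriser in place of BERT or LLM embeddings, and it assumes k-means as the clustering algorithm, since the abstract does not name one. The function names `embed` and `kmeans` and the four example documents are hypothetical and serve only as illustration.

```python
import math
from collections import Counter


def embed(texts):
    """Toy stand-in for an embedding model: L2-normalised bag-of-words vectors.

    Assumption: a real pipeline would call BERT or an LLM embedding model here.
    """
    vocab = sorted({word for text in texts for word in text.lower().split()})
    vectors = []
    for text in texts:
        counts = Counter(text.lower().split())
        vec = [float(counts[word]) for word in vocab]
        norm = math.sqrt(sum(x * x for x in vec)) or 1.0
        vectors.append([x / norm for x in vec])
    return vectors


def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(vectors, k, iters=20):
    """Plain k-means with deterministic farthest-point initialisation."""
    centroids = [vectors[0]]
    while len(centroids) < k:
        # Pick the point whose nearest existing centroid is farthest away.
        farthest = max(vectors, key=lambda v: min(sq_dist(v, c) for c in centroids))
        centroids.append(farthest)

    labels = [0] * len(vectors)
    for _ in range(iters):
        # Assignment step: each vector joins its nearest centroid.
        labels = [min(range(k), key=lambda j: sq_dist(v, centroids[j])) for v in vectors]
        # Update step: each centroid moves to the mean of its members.
        for j in range(k):
            members = [v for v, label in zip(vectors, labels) if label == j]
            if members:
                centroids[j] = [sum(col) / len(members) for col in zip(*members)]
    return labels


# Hypothetical documents: two about football, two about finance.
docs = [
    "the striker scored a late goal",
    "the goalkeeper saved the goal",
    "the central bank raised interest rates",
    "interest rates hit the bank shares",
]
labels = kmeans(embed(docs), k=2)
print(labels)
```

Only `embed` would need to change to swap in a different representation, which mirrors the kind of comparison the experiments describe: the clustering step is held fixed while the embedding varies.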
