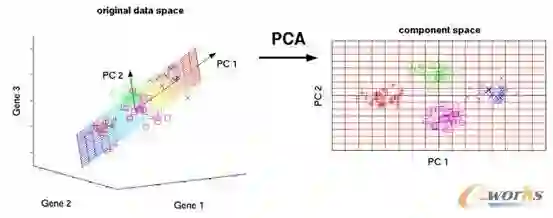

Principal Component Analysis (PCA) is a powerful and popular dimensionality reduction technique. However, because it is linear, it often fails to capture the complex underlying structure of real-world data. Kernel PCA (kPCA) addresses non-linearity, but it sacrifices interpretability and makes hyperparameter selection difficult. In this paper, we propose a robust non-linear PCA framework that unifies the interpretability of PCA with the flexibility of neural networks. Our method parametrizes per-variable transformations with neural networks, which are optimized using Evolution Strategies (ES) to handle the non-differentiability of eigendecomposition. We introduce a novel, granular objective function that maximizes the individual variance contribution of each variable, providing a stronger learning signal than global variance maximization. This approach natively handles categorical and ordinal variables without the dimensionality explosion associated with one-hot encoding. We demonstrate that our method significantly outperforms both linear PCA and kPCA in explained variance across synthetic and real-world datasets. At the same time, it preserves PCA's interpretability, enabling visualization and analysis of feature contributions using standard tools such as biplots. The code can be found on GitHub.
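
To make the pipeline described above concrete, the following is a minimal sketch, not the paper's implementation. It assumes a tiny one-hidden-layer tanh network per variable, a basic antithetic-sampling ES update, and, as a stand-in for the granular per-variable objective (whose exact form is not given here), the plain global explained-variance ratio of the top `k` components. All function names and hyperparameters (`transform`, `explained_variance`, `fit_es`, `hidden`, `sigma`, `lr`) are hypothetical.

```python
import numpy as np


def transform(theta, X, hidden=4):
    """Apply one small tanh network to each column of X independently.

    theta is a flat vector holding, for every variable, `hidden` input
    weights, `hidden` biases and `hidden` output weights (an assumed layout).
    """
    p = X.shape[1]
    W = theta.reshape(p, 3, hidden)
    H = np.tanh(X[:, :, None] * W[None, :, 0, :] + W[None, :, 1, :])
    return (H * W[None, :, 2, :]).sum(axis=2)  # shape (n_samples, p)


def explained_variance(Z, k=1):
    """Share of total variance captured by the top-k principal components
    of the standardized columns of Z (global objective, used as a stand-in)."""
    std = Z.std(axis=0)
    if np.any(std < 1e-8):  # a collapsed (constant) variable gets the worst score
        return 0.0
    Zs = (Z - Z.mean(axis=0)) / std
    eig = np.linalg.eigvalsh(Zs.T @ Zs / len(Zs))  # eigenvalues, ascending
    return float(eig[-k:].sum() / eig.sum())


def fit_es(X, k=1, hidden=4, iters=50, pop=10, sigma=0.1, lr=0.05, seed=0):
    """Gradient-free ascent on the score with antithetic perturbations.

    Returns the best parameters seen and the running best score per iteration
    (history[0] is the score of the random initialization).
    """
    rng = np.random.default_rng(seed)
    theta = rng.normal(scale=0.5, size=X.shape[1] * 3 * hidden)

    def score(t):
        return explained_variance(transform(t, X, hidden), k)

    best_theta, best = theta.copy(), score(theta)
    history = [best]
    for _ in range(iters):
        eps = rng.normal(size=(pop, theta.size))
        f_plus = np.array([score(theta + sigma * e) for e in eps])
        f_minus = np.array([score(theta - sigma * e) for e in eps])
        # ES estimate of the gradient; no derivative of the eigendecomposition needed
        grad = ((f_plus - f_minus)[:, None] * eps).mean(axis=0) / (2 * sigma)
        theta = theta + lr * grad
        current = score(theta)
        if current > best:
            best_theta, best = theta.copy(), current
        history.append(best)
    return best_theta, history


if __name__ == "__main__":
    rng = np.random.default_rng(1)
    x = rng.uniform(-2, 2, size=200)
    X = np.column_stack([x, x**2 + 0.1 * rng.normal(size=200)])
    X = (X - X.mean(axis=0)) / X.std(axis=0)  # standardize the inputs
    theta, history = fit_es(X)
    print("linear PCA, top-1 explained variance:", explained_variance(X))
    print("after ES, top-1 explained variance:  ", history[-1])
```

Because each variable keeps its own one-dimensional transformation, the components are still ordinary PCA components of the transformed variables, so loadings can be read and plotted as in linear PCA. Swapping in a per-variable objective would only require replacing `explained_variance`.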
