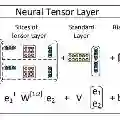

This paper studies off-policy evaluation (OPE) in the presence of unmeasured confounders. Inspired by the two-way fixed effects regression model widely used in the panel data literature, we propose a two-way unmeasured confounding assumption to model the system dynamics in causal reinforcement learning. We then develop a two-way deconfounder algorithm that uses a neural tensor network to jointly learn the unmeasured confounders and the system dynamics. From the learned model, we construct a model-based estimator that consistently estimates the policy value. We illustrate the effectiveness of the proposed estimator through theoretical results and numerical experiments.
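The abstract's starting point is the classical two-way fixed effects model from panel data, $y_{it} = \alpha_i + \lambda_t + \beta x_{it} + \varepsilon_{it}$, where unobserved unit effects $\alpha_i$ and time effects $\lambda_t$ may be correlated with the regressor. The sketch below illustrates only that classical model, not the paper's two-way deconfounder, which instead learns the confounders with a neural tensor network. It simulates a balanced panel in which $\alpha_i$ and $\lambda_t$ confound $x_{it}$, then compares pooled OLS with the two-way within (double-demeaning) estimator. All function names and simulation parameters are hypothetical choices made for illustration.

```python
import random


def simulate_panel(n_units=200, n_periods=20, beta=2.0, seed=0):
    """Simulate y_it = alpha_i + lambda_t + beta * x_it + eps_it.

    The unobserved effects alpha_i (per unit) and lambda_t (per period)
    also enter x_it, so they confound the regression of y on x.
    """
    rng = random.Random(seed)
    alpha = [rng.gauss(0, 1) for _ in range(n_units)]
    lam = [rng.gauss(0, 1) for _ in range(n_periods)]
    x, y = [], []
    for i in range(n_units):
        x_row, y_row = [], []
        for t in range(n_periods):
            x_it = alpha[i] + lam[t] + rng.gauss(0, 1)  # confounded regressor
            y_it = alpha[i] + lam[t] + beta * x_it + rng.gauss(0, 0.5)
            x_row.append(x_it)
            y_row.append(y_it)
        x.append(x_row)
        y.append(y_row)
    return x, y


def two_way_demean(z):
    """Return z_it - unit mean - time mean + grand mean (balanced panel).

    Any additive term of the form a_i + l_t is removed exactly.
    """
    n, T = len(z), len(z[0])
    unit_mean = [sum(row) / T for row in z]
    time_mean = [sum(z[i][t] for i in range(n)) / n for t in range(T)]
    grand = sum(unit_mean) / n
    return [[z[i][t] - unit_mean[i] - time_mean[t] + grand for t in range(T)]
            for i in range(n)]


def ols_slope(x, y):
    """No-intercept OLS slope; valid here because demeaned data has mean 0."""
    xs = [v for row in x for v in row]
    ys = [v for row in y for v in row]
    return sum(a * b for a, b in zip(xs, ys)) / sum(a * a for a in xs)


def pooled_slope(x, y):
    """Pooled OLS slope with an intercept, ignoring alpha_i and lambda_t."""
    xs = [v for row in x for v in row]
    ys = [v for row in y for v in row]
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((a - mx) * (b - my) for a, b in zip(xs, ys))
    var = sum((a - mx) ** 2 for a in xs)
    return cov / var


if __name__ == "__main__":
    x, y = simulate_panel()
    print("pooled OLS slope:", round(pooled_slope(x, y), 3))
    print("two-way FE slope:", round(ols_slope(two_way_demean(x), two_way_demean(y)), 3))
```

Under this simulation the pooled slope is biased upward (its population value is $\beta + 2/3$, since $\operatorname{Var}(x) = 3$ and $\operatorname{Cov}(x, \alpha_i + \lambda_t) = 2$), while the two-way estimate should land close to $\beta = 2$. The double demeaning works only because the confounders are assumed to enter additively as $\alpha_i + \lambda_t$; that additive two-way structure is the idea the abstract adapts to reinforcement learning.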
