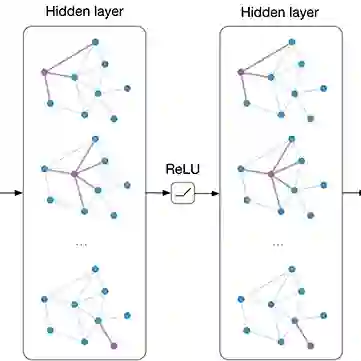

Multimodal emotion recognition in conversation (MERC) is the task of identifying and classifying human emotional states by combining data from multiple modalities (e.g., audio, images, text, and video). Most existing multimodal emotion recognition methods use graph convolutional networks (GCNs) to improve performance, but these GCN-based methods are prone to overfitting and cannot capture the temporal dependency of a speaker's emotions. To address these problems, we propose a Dynamic Graph Neural Ordinary Differential Equation Network (DGODE) for MERC, which models the dynamic changes of emotions to capture the temporal dependency of speakers' emotions and effectively alleviates the overfitting problem of GCNs. Technically, the key idea of DGODE is to use an adaptive mixhop mechanism to improve the generalization ability of GCNs, and to use a graph ODE evolution network to characterize the continuous dynamics of node representations over time and thereby capture temporal dependencies. Extensive experiments on two publicly available multimodal emotion recognition datasets demonstrate that the proposed DGODE model outperforms various baselines. Furthermore, DGODE also alleviates the over-smoothing problem, which makes it possible to build deeper GCN networks.
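To make the two ingredients named above more concrete, the following is a minimal NumPy sketch, not the DGODE implementation. It rests on several assumptions that the abstract does not confirm: the mixhop step is taken to be a weighted sum of multi-hop propagations with fixed (not learned) weights, the ODE is taken to be of the form dH/dt = mixhop(A, H) - H + H0, and the integrator is plain explicit Euler. All function names and parameters (`normalize_adjacency`, `mixhop`, `graph_ode_euler`, `hop_weights`, `t_end`, `steps`) are hypothetical.

```python
import numpy as np


def normalize_adjacency(adj):
    """Symmetric normalization with self-loops: D^{-1/2} (A + I) D^{-1/2}."""
    a_hat = np.asarray(adj, dtype=float) + np.eye(len(adj))
    d_inv_sqrt = 1.0 / np.sqrt(a_hat.sum(axis=1))  # degree >= 1 due to self-loops
    return a_hat * d_inv_sqrt[:, None] * d_inv_sqrt[None, :]


def mixhop(a_norm, h, hop_weights):
    """Weighted sum over hops: sum_k w_k * A^k H (hop 0 is H itself).

    Assumption: the weights are fixed here; an adaptive variant would learn them.
    """
    out = np.zeros(h.shape, dtype=float)
    propagated = np.asarray(h, dtype=float)
    for w in hop_weights:
        out += w * propagated
        propagated = a_norm @ propagated  # move one hop further
    return out


def graph_ode_euler(a_norm, h0, hop_weights, t_end=1.0, steps=10):
    """Integrate the assumed ODE dH/dt = mixhop(A, H) - H + H0 with explicit Euler."""
    h0 = np.asarray(h0, dtype=float)
    h = h0.copy()
    dt = t_end / steps
    for _ in range(steps):
        dh = mixhop(a_norm, h, hop_weights) - h + h0
        h = h + dt * dh
    return h


# Toy conversation graph: three utterance nodes connected in a chain.
adj = np.array([[0, 1, 0],
                [1, 0, 1],
                [0, 1, 0]])
a_norm = normalize_adjacency(adj)
h0 = np.random.default_rng(0).normal(size=(3, 4))  # 3 nodes, 4 features each
h_final = graph_ode_euler(a_norm, h0, hop_weights=[0.5, 0.3, 0.2])
print(h_final.shape)
```

In this sketch, the integration time `t_end` plays the role that the number of stacked layers plays in a discrete GCN, and the `+ H0` term keeps the evolving representation tied to the initial node features, which is one common way to limit over-smoothing in continuous graph models.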
