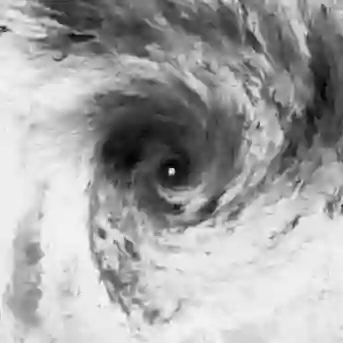

High-resolution satellite imagery is indispensable for tracking the genesis, intensification, and trajectory of tropical cyclones (TCs). However, existing deep learning-based super-resolution (SR) methods often treat satellite image sequences as generic videos and neglect the atmospheric physical laws that govern cloud motion. To address this, we propose a Physics Encoded Spatial and Temporal Generative Adversarial Network (PESTGAN) for TC image super-resolution. Specifically, we design a disentangled generator architecture that incorporates a PhyCell module. The module approximates the vorticity equation via constrained convolutions and encodes the resulting approximate dynamics as implicit latent representations, which separates physical dynamics from visual textures. Furthermore, we introduce a dual-discriminator framework in which a temporal discriminator enforces motion consistency alongside a spatial discriminator that enforces spatial realism. Experiments on the Digital Typhoon dataset for 4$\times$ upscaling show that PESTGAN achieves better structural fidelity and perceptual quality than existing approaches. While its pixel-wise accuracy remains competitive with those approaches, the method is notably better at reconstructing meteorologically plausible cloud structures with higher physical fidelity.
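
To make the idea of approximating a differential operator with a convolution more concrete, the following is a minimal sketch and not the PhyCell implementation. It assumes a synthetic wind field on a unit-spaced grid and uses fixed central-difference stencils where a constrained convolution would use learned kernels restricted toward such stencils. The names `correlate2d_valid`, `DDX`, `DDY`, and `relative_vorticity` are hypothetical and chosen only for illustration.

```python
import numpy as np

def correlate2d_valid(field, kernel):
    """Cross-correlate a 2-D field with a small kernel, keeping only
    the pixels where the kernel fits entirely inside the field."""
    kh, kw = kernel.shape
    h, w = field.shape
    out = np.zeros((h - kh + 1, w - kw + 1))
    for i in range(kh):
        for j in range(kw):
            out += kernel[i, j] * field[i:i + h - kh + 1, j:j + w - kw + 1]
    return out

# Central-difference stencils, assuming grid spacing 1.
# Rows index y and columns index x.
DDX = np.array([[0.0, 0.0, 0.0],
                [-0.5, 0.0, 0.5],
                [0.0, 0.0, 0.0]])   # approximates d/dx
DDY = DDX.T                          # approximates d/dy

def relative_vorticity(u, v):
    """Relative vorticity zeta = dv/dx - du/dy via the stencils above."""
    return correlate2d_valid(v, DDX) - correlate2d_valid(u, DDY)

# Synthetic solid-body rotation (an assumption for illustration):
# u = -omega * y, v = omega * x, whose exact vorticity is 2 * omega.
omega = 0.3
y, x = np.mgrid[-8:9, -8:9].astype(float)
u = -omega * y
v = omega * x

zeta = relative_vorticity(u, v)
```

For this linear wind field the central differences are exact, so `zeta` equals `2 * omega` at every interior pixel. In a learned setting the kernel weights would be trainable and only constrained toward a derivative stencil, and the inputs would be latent features rather than explicit wind components.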
