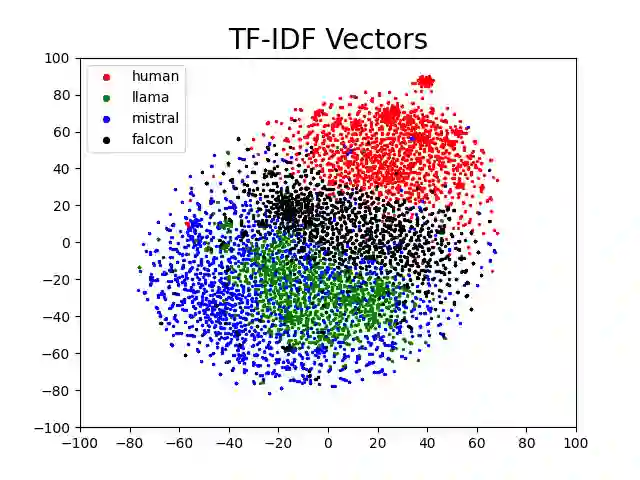

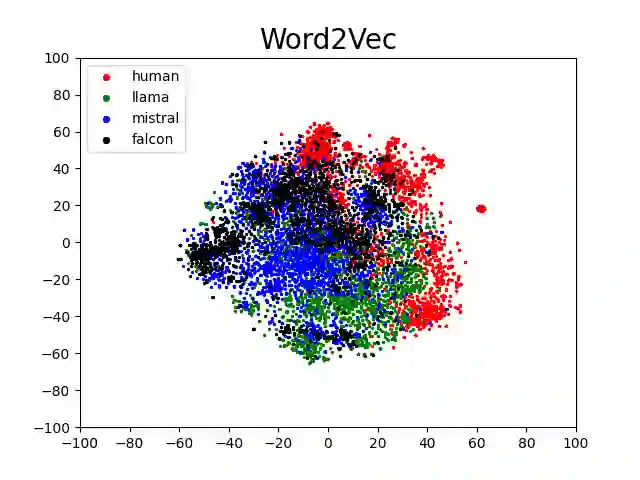

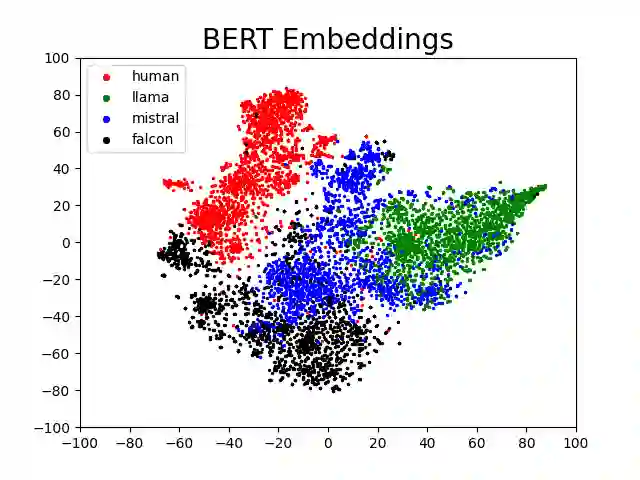

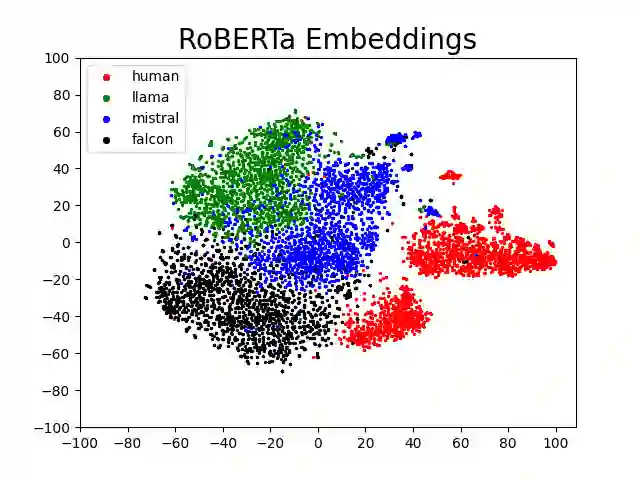

Politics is among the most widely discussed topics on social media platforms, particularly during major election cycles, when users engage in conversations about candidates and electoral processes. Malicious actors can exploit this attention to spread misinformation that undermines trust in the electoral process. The emergence of Large Language Models (LLMs) exacerbates the problem by enabling such actors to generate misinformation at an unprecedented scale. Artificial intelligence (AI)-generated content is often indistinguishable from authentic user content, raising concerns about the integrity of information on social networks. In this paper, we present a novel taxonomy for characterizing election-related claims, with granular categories covering jurisdiction, equipment, processes, and the nature of claims. We introduce ElectAI, a novel benchmark dataset of 9,900 tweets, each labeled as human- or AI-generated; AI-generated tweets are additionally labeled with the specific LLM variant that produced them. We annotated a subset of 1,550 tweets using the proposed taxonomy to capture the characteristics of election-related claims. We explored the capabilities of LLMs in extracting the taxonomy attributes, and we trained various machine learning models on ElectAI to distinguish human- from AI-generated posts and to identify the specific LLM variant.
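The labeling scheme described above (a human/AI label, an LLM variant for AI-generated tweets, and taxonomy attributes for the annotated subset) can be pictured as a single record type. The following is only a minimal sketch under stated assumptions: the class name `TweetRecord`, its field names, and the placeholder variant string `"llm-variant-a"` are hypothetical and are not taken from the ElectAI release, whose actual schema may differ.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TweetRecord:
    """Hypothetical record layout; field names are illustrative assumptions."""
    text: str
    source: str                          # "human" or "ai"
    llm_variant: Optional[str] = None    # set only for AI-generated tweets
    # Taxonomy attributes: filled in only for the annotated subset.
    jurisdiction: Optional[str] = None
    equipment: Optional[str] = None
    process: Optional[str] = None
    claim_nature: Optional[str] = None

    def __post_init__(self) -> None:
        if self.source not in ("human", "ai"):
            raise ValueError("source must be 'human' or 'ai'")
        if self.source == "human" and self.llm_variant is not None:
            raise ValueError("human tweets carry no LLM variant")
        if self.source == "ai" and self.llm_variant is None:
            raise ValueError("AI-generated tweets need an LLM variant")


def binary_label(record: TweetRecord) -> int:
    """Target for the human-vs-AI task: 1 for AI-generated, 0 for human."""
    return 1 if record.source == "ai" else 0


def variant_label(record: TweetRecord) -> str:
    """Target for the attribution task: the LLM variant, or 'human'."""
    return record.llm_variant if record.source == "ai" else "human"


# Placeholder examples; the text and variant name are made up for illustration.
human_tweet = TweetRecord(text="Polls close at 8pm tonight.", source="human")
ai_tweet = TweetRecord(
    text="Polls close at 8pm tonight.",
    source="ai",
    llm_variant="llm-variant-a",
)
```

Keeping the two targets as separate functions over one record mirrors the two tasks named in the abstract: detecting AI-generated posts and attributing them to a specific LLM variant.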
