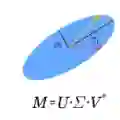

The singular value decomposition (SVD) factors a matrix into a product of three matrices: a matrix of left singular vectors, a diagonal matrix of non-negative singular values, and a matrix of right singular vectors. There are two main approaches to computing the SVD: the classical method and the randomized method. The classical approach yields accurate singular values. The randomized approach is used mainly for high-dimensional matrices and relies on approximation accuracy rather than on computing all singular values. In this paper, the SVD computation is formulated as an optimization problem and solved with a gradient search algorithm. This results in a power method that computes either all singular values or only the largest ones, together with their associated right singular vectors. In this iterative search, the accuracy of the singular values and of the associated vector matrix depends on the user settings. Two applications of the SVD are principal component analysis and the autoencoder used in neural network models.
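As an illustration of the kind of iteration described above, the following is a minimal sketch of a power method with deflation, not the paper's exact algorithm. It assumes the objective is to maximize ||A v||² over unit vectors v, whose gradient is proportional to AᵀA v, so each step moves along the gradient and renormalizes. The function name `top_singular_pairs`, the helper names, and the settings `tol`, `max_iter`, and `seed` are hypothetical and stand in for the "user settings" mentioned in the abstract. The sketch uses plain Python lists so that it runs without dependencies, and it assumes `k` does not exceed the number of columns of `A`.

```python
import math
import random


def dot(x, y):
    return sum(a * b for a, b in zip(x, y))


def matvec(M, x):
    return [dot(row, x) for row in M]


def project_out_and_normalize(w, basis):
    """Remove the components of w along each basis vector, then normalize.

    Returns None if nothing is left after the projection.
    """
    for u in basis:
        c = dot(w, u)
        w = [a - c * b for a, b in zip(w, u)]
    n = math.sqrt(dot(w, w))
    return [a / n for a in w] if n > 0.0 else None


def top_singular_pairs(A, k, tol=1e-12, max_iter=10_000, seed=0):
    """Sketch: k largest singular values of A and their right singular vectors.

    Assumes A is a non-empty list of equal-length rows and k <= number of columns.
    """
    rng = random.Random(seed)
    At = [list(col) for col in zip(*A)]  # transpose of A
    n_cols = len(A[0])
    sigmas, right_vectors = [], []
    for _ in range(k):
        # Random unit start, orthogonal to the vectors already found.
        start = [rng.gauss(0.0, 1.0) for _ in range(n_cols)]
        v = project_out_and_normalize(start, right_vectors)
        for _ in range(max_iter):
            # A^T A v is (half) the gradient of ||A v||^2 with respect to v.
            w = project_out_and_normalize(matvec(At, matvec(A, v)), right_vectors)
            if w is None:  # A v = 0: the remaining singular values are zero
                break
            change = math.sqrt(sum((a - b) ** 2 for a, b in zip(w, v)))
            v = w
            if change < tol:  # user-chosen accuracy on the vector
                break
        Av = matvec(A, v)
        sigmas.append(math.sqrt(dot(Av, Av)))  # sigma = ||A v||
        right_vectors.append(v)
    return sigmas, right_vectors


if __name__ == "__main__":
    sigmas, vectors = top_singular_pairs([[3.0, 0.0], [4.0, 5.0]], k=2)
    print(sigmas)
    print(vectors)
```

For the 2×2 example matrix, AᵀA has eigenvalues 45 and 5, so the returned values should be close to √45 and √5. Tightening `tol` or raising `max_iter` trades run time for accuracy, and choosing a smaller `k` stops the search after the largest singular values.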
