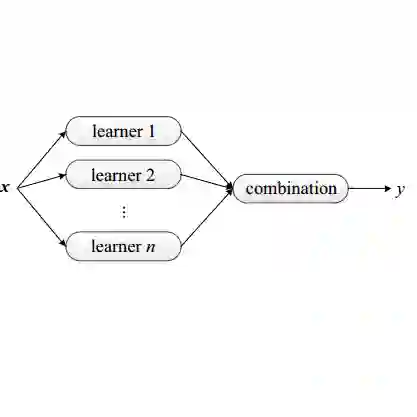

Background: Machine learning (ML) model composition is a popular technique to mitigate the shortcomings of a single ML model and to design more effective ML-enabled systems. While ensemble learning, i.e., forwarding the same request to several models and fusing their predictions, has been studied extensively for accuracy, we have insufficient knowledge about how to design energy-efficient ensembles.

Objective: We therefore analyzed three types of design decisions for ensemble learning with respect to a potential trade-off between accuracy and energy consumption: a) ensemble size, i.e., the number of models in the ensemble, b) fusion method (majority voting vs. a meta-model), and c) partitioning method (whole-dataset vs. subset-based training).

Methods: By combining four popular ML classification algorithms into different ensembles, we conducted a full factorial experiment with 11 ensembles × 4 datasets × 2 fusion methods × 2 partitioning methods (176 combinations). For each combination, we measured accuracy (F1-score) and energy consumption in joules (J), for both training and inference.

Results: While a larger ensemble size significantly increased energy consumption (size 2 ensembles consumed 37.49% less energy than size 3 ensembles, which in turn consumed 26.96% less energy than size 4 ensembles), it did not significantly increase accuracy. Furthermore, majority voting outperformed meta-model fusion in terms of both accuracy (Cohen's d of 0.38) and energy consumption (Cohen's d of 0.92). Lastly, subset-based training led to significantly lower energy consumption (Cohen's d of 0.91), while training on the whole dataset did not significantly increase accuracy.

Conclusions: From a Green AI perspective, we recommend designing small ensembles (2 or at most 3 models) and using subset-based training, majority voting, and energy-efficient ML algorithms such as decision trees, Naive Bayes, or KNN.
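The 176-combination design can be checked, and two of the design decisions illustrated, with a small stdlib-only Python sketch. It rests on several assumptions that are not stated in the abstract. First, it assumes the 11 ensembles are all combinations of at least two of the four algorithms (6 + 4 + 1 = 11, consistent with the reported ensemble sizes 2 to 4). Second, it treats majority voting as hard voting over predicted labels. Third, it reads subset-based training as dealing the training data into disjoint, near-equal subsets, one per ensemble member. The algorithm and dataset names, including `algorithm_4` for the unnamed fourth algorithm, and the helpers `all_ensembles`, `majority_vote`, and `disjoint_subsets` are hypothetical.

```python
from collections import Counter
from itertools import combinations, product
import random

# Hypothetical placeholder names: the abstract names decision trees, Naive Bayes
# and KNN; the fourth algorithm is not named there, so "algorithm_4" stands in.
ALGORITHMS = ["decision_tree", "naive_bayes", "knn", "algorithm_4"]
DATASETS = ["dataset_1", "dataset_2", "dataset_3", "dataset_4"]  # hypothetical labels
FUSION_METHODS = ["majority_voting", "meta_model"]
PARTITIONING_METHODS = ["whole_dataset", "subset_based"]


def all_ensembles(algorithms, min_size=2):
    """Every combination of at least `min_size` distinct algorithms (assumed design)."""
    return [
        combo
        for size in range(min_size, len(algorithms) + 1)
        for combo in combinations(algorithms, size)
    ]


def majority_vote(predictions):
    """Fuse one predicted label per model into a single label by hard voting.

    Ties go to the tied label encountered first, because Counter.most_common
    orders equal counts by first occurrence; this tie-break rule is an assumption.
    """
    return Counter(predictions).most_common(1)[0][0]


def disjoint_subsets(samples, n_models, seed=0):
    """Shuffle the training samples and deal them into `n_models` disjoint,
    near-equal subsets, one per ensemble member (assumed reading of
    subset-based training)."""
    shuffled = list(samples)
    random.Random(seed).shuffle(shuffled)
    return [shuffled[i::n_models] for i in range(n_models)]


if __name__ == "__main__":
    ensembles = all_ensembles(ALGORITHMS)
    design = list(product(ensembles, DATASETS, FUSION_METHODS, PARTITIONING_METHODS))
    sizes = Counter(len(e) for e in ensembles)
    print(len(ensembles), dict(sizes))  # 11 {2: 6, 3: 4, 4: 1}
    print(len(design))                  # 11 * 4 * 2 * 2 = 176

    print(majority_vote(["spam", "ham", "spam"]))  # spam

    parts = disjoint_subsets(range(10), n_models=3)
    print([len(p) for p in parts])  # [4, 3, 3]
```

Meta-model fusion is deliberately left out of the sketch: instead of counting votes, it trains a second-level classifier on the base models' predictions (stacking), which adds a further training step and a further inference step on top of the base models.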
