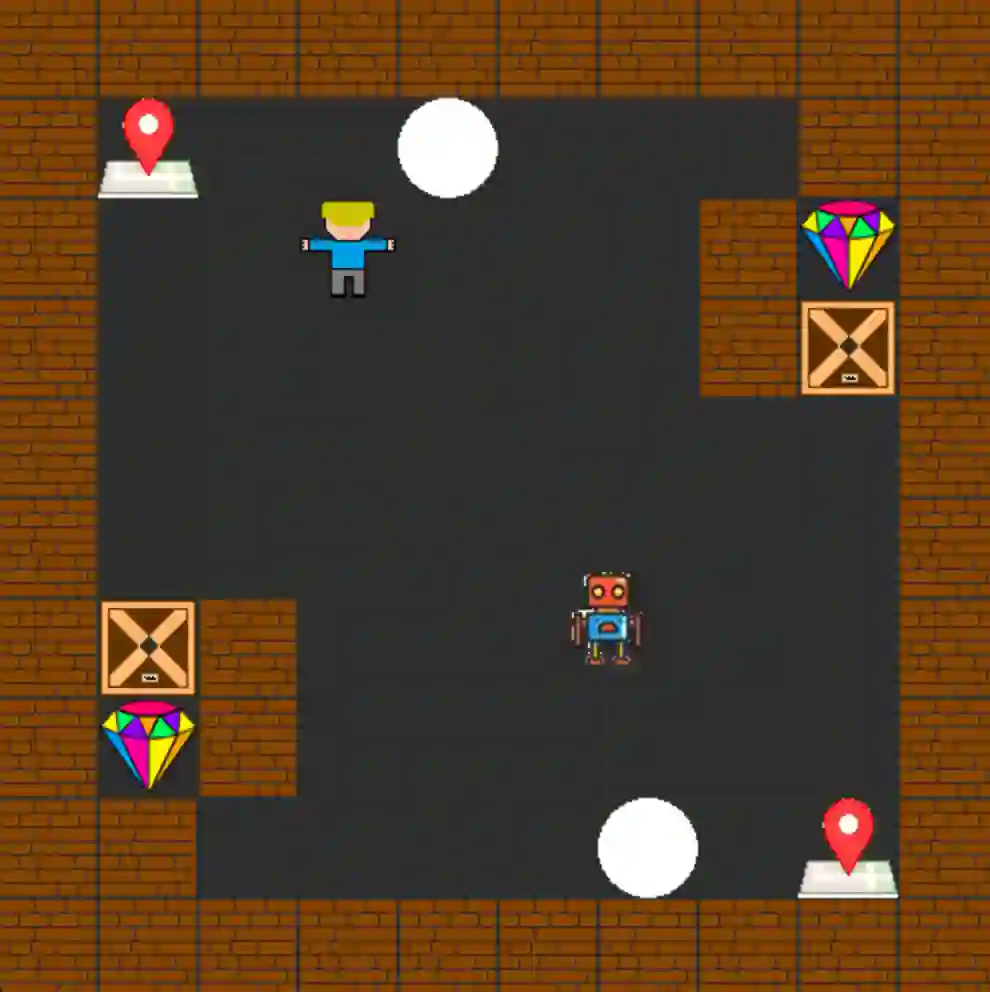

As robots are deployed in human spaces, they must be able to coordinate their actions with the people around them. Part of this coordination involves ensuring that people understand how a robot will act in the environment, which can be achieved by explaining the robot's policy. Much prior work in explainable AI and reinforcement learning (RL) focuses on generating explanations for single-agent policies, but little work has explored generating explanations for collaborative policies. In this work, we investigate how to generate multi-agent strategy explanations for human-robot collaboration. We formulate the problem using a generic multi-agent planner, show how to generate visual explanations through strategy-conditioned landmark states, and generate textual explanations by giving the landmarks to an LLM. Through a user study, we find that when presented with explanations from our proposed framework, users are better able to explore the full space of strategies and collaborate more efficiently with new robot partners.
