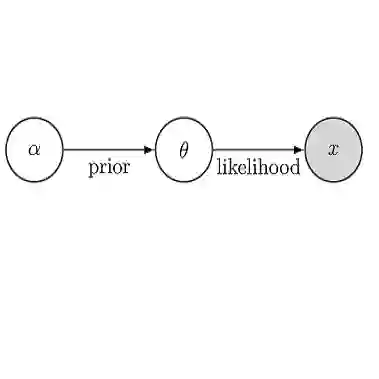

Beyond estimating parameters of interest from data, one of the key goals of statistical inference is to properly quantify uncertainty in these estimates. In Bayesian inference, this uncertainty is provided by the posterior distribution, whose computation typically involves an intractable high-dimensional integral. Among available approximation methods, sampling-based approaches come with strong theoretical guarantees but scale poorly to large problems, while variational approaches scale well but offer few theoretical guarantees. In particular, variational methods are known to produce overconfident estimates of posterior uncertainty and are typically non-identifiable, with many latent variable configurations generating equivalent predictions. Here, we address these challenges by showing how diffusion-based models (DBMs), which have recently produced state-of-the-art performance in generative modeling tasks, can be repurposed for performing calibrated, identifiable Bayesian inference. By exploiting a previously established connection between the stochastic differential equations (SDEs) and the probability flow ordinary differential equations (pfODEs) underlying DBMs, we derive a class of models, inflationary flows, that uniquely and deterministically map high-dimensional data to a lower-dimensional Gaussian distribution via ODE integration. This map is both invertible and neighborhood-preserving, with controllable numerical error, so that uncertainties in the data are correctly propagated to the latent space. We demonstrate how such maps can be learned via standard DBM training using a novel noise schedule and are effective at both preserving and reducing intrinsic data dimensionality. The result is a class of highly expressive generative models, uniquely defined on a low-dimensional latent space, that afford principled Bayesian inference.
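
To make the idea of a deterministic, invertible pfODE map concrete, the following is a minimal sketch rather than the inflationary flows described above. It assumes a toy setting in which the score is known in closed form: one-dimensional Gaussian data with variance `S0_SQ`, diffused by a generic variance-exploding process with added noise variance equal to `t`. All names (`S0_SQ`, `T_END`, `score`, `pf_ode_rhs`, `integrate`) are hypothetical and chosen for illustration; in a trained DBM the `score` function would be replaced by a learned network.

```python
import numpy as np

S0_SQ = 4.0   # assumed variance of the toy data distribution N(0, S0_SQ)
T_END = 5.0   # assumed total noise variance added by the diffusion


def score(x, t):
    """Exact score of the marginal N(0, S0_SQ + t) at time t."""
    return -x / (S0_SQ + t)


def pf_ode_rhs(x, t):
    """Probability flow ODE for dx = dW (zero drift, g^2 = 1):
    dx/dt = -0.5 * g^2 * score(x, t)."""
    return -0.5 * score(x, t)


def integrate(x, t_start, t_end, n_steps=200):
    """Fixed-step RK4 integrator; a negative step runs the flow backward."""
    h = (t_end - t_start) / n_steps
    t = t_start
    x = np.array(x, dtype=float)
    for _ in range(n_steps):
        k1 = pf_ode_rhs(x, t)
        k2 = pf_ode_rhs(x + 0.5 * h * k1, t + 0.5 * h)
        k3 = pf_ode_rhs(x + 0.5 * h * k2, t + 0.5 * h)
        k4 = pf_ode_rhs(x + h * k3, t + h)
        x = x + (h / 6.0) * (k1 + 2.0 * k2 + 2.0 * k3 + k4)
        t += h
    return x


rng = np.random.default_rng(0)
x0 = rng.normal(0.0, np.sqrt(S0_SQ), size=1000)

z = integrate(x0, 0.0, T_END)        # data -> latent (deterministic)
x_rec = integrate(z, T_END, 0.0)     # latent -> data (same ODE, reversed)

# Closed-form solution of this toy ODE, used only as a correctness check.
exact = x0 * np.sqrt((S0_SQ + T_END) / S0_SQ)
round_trip_error = np.max(np.abs(x_rec - x0))
print("max round-trip error:", round_trip_error)
```

In this toy case the flow is a pure rescaling, so nearby points stay nearby and the round-trip error comes only from the integrator's step size, which loosely mirrors the "controllable numerical error" mentioned in the abstract. The sketch does not include the noise schedule or the dimension-reducing behavior that the abstract attributes to inflationary flows.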
