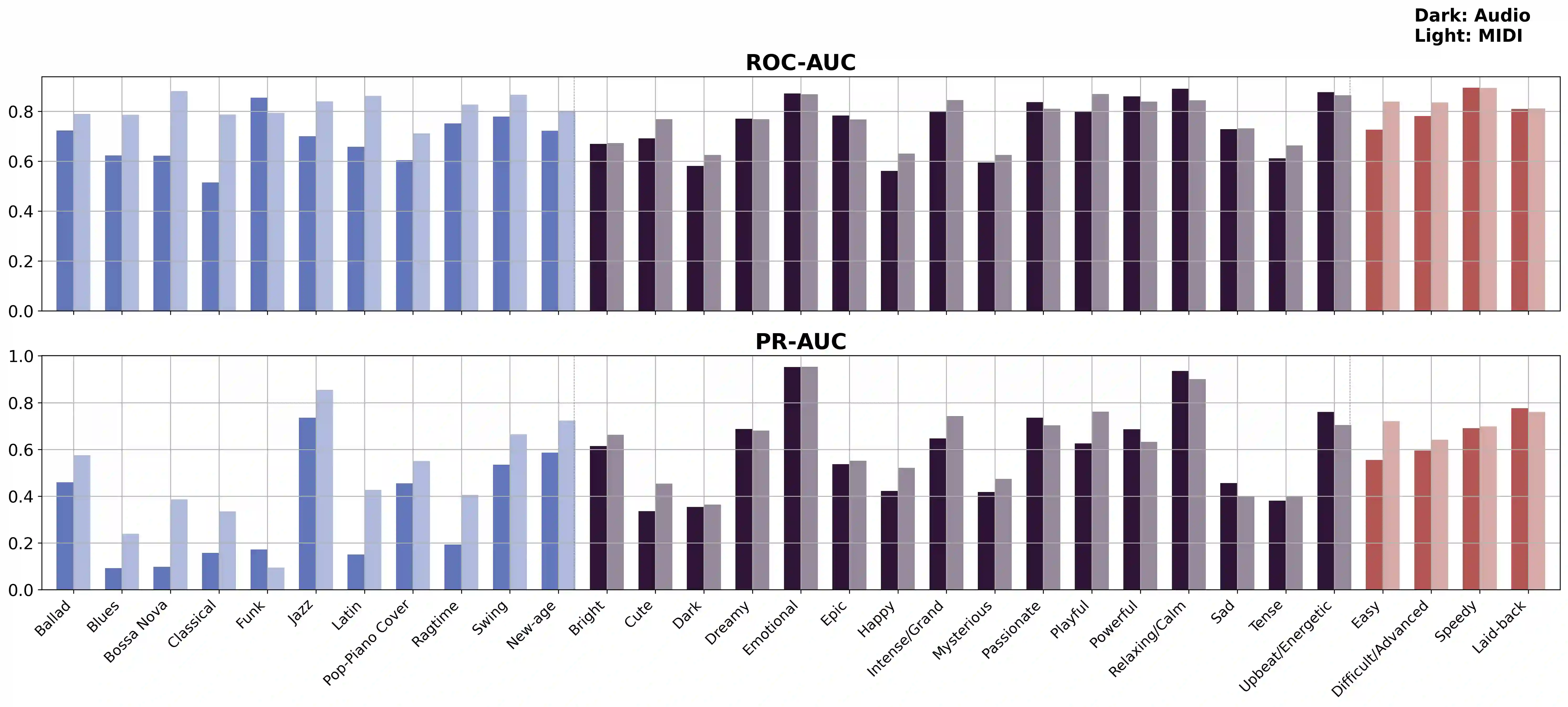

While piano music has become a significant area of study in Music Information Retrieval (MIR), datasets of solo piano music with text labels remain scarce. To address this gap, we present PIAST (PIano dataset with Audio, Symbolic, and Text), a piano music dataset. Using a piano-specific taxonomy of semantic tags, we collected 9,673 tracks from YouTube and added expert human annotations for 2,023 tracks, resulting in two subsets: PIAST-YT and PIAST-AT. Both include audio, text, tag annotations, and MIDI transcribed with state-of-the-art piano transcription and beat tracking models. Among the many tasks this multi-modal dataset supports, we conduct music tagging and retrieval using both audio and MIDI data and report baseline performance to demonstrate its potential as a valuable resource for MIR research.
