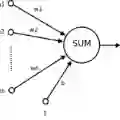

Partial differential equations are widely used to model physical, biological, and social phenomena. Since exact solutions are rarely available in closed form, we need to approximate the solutions of these equations in computationally feasible terms. Among the most popular numerical methods for solving partial differential equations in engineering are the finite difference and finite element methods. An alternative that has recently gained popularity is the use of artificial neural networks. Artificial neural networks, or neural networks for short, are mathematical structures with universal approximation properties. In addition, thanks to the extraordinary advances in computing over the last decade, neural networks have become accessible and powerful numerical tools for engineers and researchers. For example, image and language processing are present-day applications of neural networks whose performance was inconceivable only a few years ago. This dissertation contributes to the numerical solution of partial differential equations using neural networks with a two-fold objective: to investigate the behavior of neural networks as approximators of solutions of partial differential equations, and to propose neural-network-based methods for settings that traditional numerical methods can hardly address. We present three novel neural-network-based proposals. First, we present a method for solving parametric problems that is inspired by mesh refinement in the finite element method. Second, we propose a general residual minimization scheme based on a generalized version of the Ritz method. Finally, we develop a memory-based strategy to overcome a common numerical integration limitation that arises when using neural networks to solve partial differential equations.
