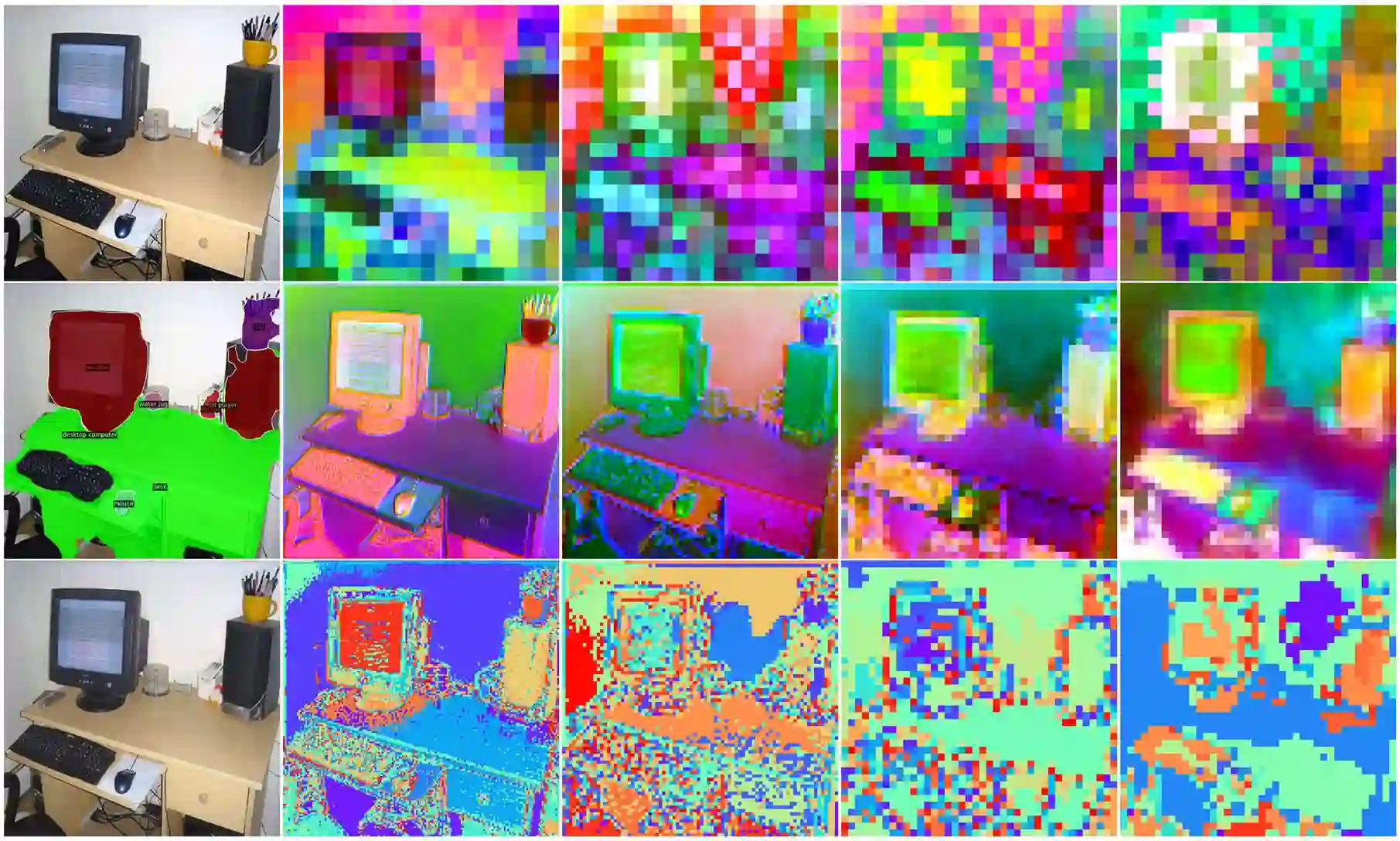

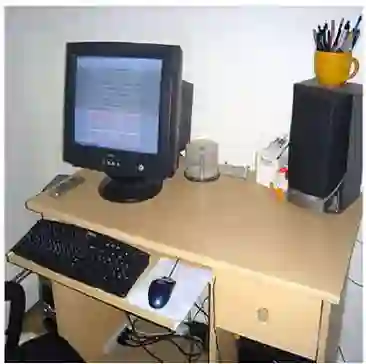

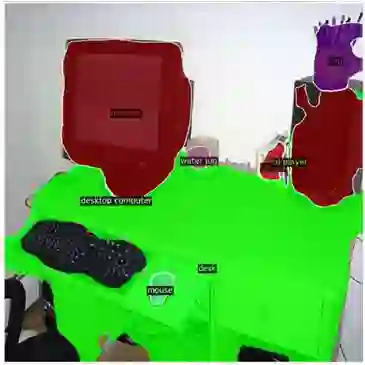

The visual understanding are often approached from 3 granular levels: image, patch and pixel. Visual Tokenization, trained by self-supervised reconstructive learning, compresses visual data by codebook in patch-level with marginal information loss, but the visual tokens does not have semantic meaning. Open Vocabulary semantic segmentation benefits from the evolving Vision-Language models (VLMs) with strong image zero-shot capability, but transferring image-level to pixel-level understanding remains an imminent challenge. In this paper, we treat segmentation as tokenizing pixels and study a united perceptual and semantic token compression for all granular understanding and consequently facilitate open vocabulary semantic segmentation. Referring to the cognitive process of pretrained VLM where the low-level features are progressively composed to high-level semantics, we propose Feature Pyramid Tokenization (PAT) to cluster and represent multi-resolution feature by learnable codebooks and then decode them by joint learning pixel reconstruction and semantic segmentation. We design loosely coupled pixel and semantic learning branches. The pixel branch simulates bottom-up composition and top-down visualization of codebook tokens, while the semantic branch collectively fuse hierarchical codebooks as auxiliary segmentation guidance. Our experiments show that PAT enhances the semantic intuition of VLM feature pyramid, improves performance over the baseline segmentation model and achieves competitive performance on open vocabulary semantic segmentation benchmark. Our model is parameter-efficient for VLM integration and flexible for the independent tokenization. We hope to give inspiration not only on improving segmentation but also on semantic visual token utilization.

翻译:视觉理解通常从三个粒度层面展开:图像级、块级和像素级。通过自监督重建学习训练的视觉标记化,利用码本在块级压缩视觉数据且信息损失极小,但这些视觉标记缺乏语义含义。开放词汇语义分割得益于具有强大图像零样本能力的演进式视觉-语言模型(VLMs),然而将图像级理解迁移至像素级理解仍是一个紧迫的挑战。本文中,我们将分割视为像素的标记化,研究一种统一的感知与语义标记压缩方法,以服务于所有粒度的理解,进而促进开放词汇语义分割。借鉴预训练VLM中将低级特征逐步组合为高级语义的认知过程,我们提出特征金字塔标记化(PAT),通过可学习的码本对多分辨率特征进行聚类与表征,再通过联合学习像素重建与语义分割进行解码。我们设计了松耦合的像素与语义学习分支:像素分支模拟码本标记的自底向上组合与自顶向下可视化,语义分支则融合层级化码本作为辅助分割指导。实验表明,PAT增强了VLM特征金字塔的语义直觉,在基线分割模型基础上提升了性能,并在开放词汇语义分割基准测试中取得了有竞争力的结果。我们的模型在VLM集成方面参数量高效,且支持独立的标记化操作。我们希望这项工作不仅能对改进分割任务有所启发,也能为语义化视觉标记的利用提供新思路。