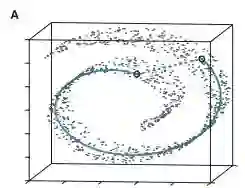

We propose K-Deep Simplex (KDS), which, given a set of data points, learns a dictionary of synthetic landmarks together with representation coefficients supported on a simplex. KDS employs a local weighted $\ell_1$ penalty that encourages each data point to represent itself as a convex combination of nearby landmarks. We solve the proposed optimization program using alternating minimization and design an efficient, interpretable autoencoder using algorithm unrolling. We analyze the proposed program theoretically by relating the weighted $\ell_1$ penalty in KDS to a weighted $\ell_0$ program. Assuming that the data are generated from a Delaunay triangulation, we prove the equivalence of the weighted $\ell_1$ and weighted $\ell_0$ programs. We further show that the representation coefficients are stable under mild geometric assumptions. When the representation coefficients are fixed, we prove that the subproblem of minimizing over the dictionary has a unique solution. Finally, we show that low-dimensional representations can be obtained efficiently from the covariance of the coefficient matrix. Experiments show that the algorithm is highly efficient and performs competitively on synthetic and real data sets.
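The abstract does not spell out the objective, so the following NumPy sketch illustrates the alternating scheme under stated assumptions rather than reproducing the authors' implementation. Data points are the columns of `Y`, landmarks are the columns of `A`, and each coefficient column $x_j$ lies on the probability simplex. The weight on $x_{kj}$ is *assumed* to be the squared distance $\|y_j - a_k\|_2^2$. Because every $x_j$ sums to one, an unweighted $\ell_1$ penalty would be constant, which is why the weights carry the locality. The assumed objective is $\tfrac12\|Y - AX\|_F^2 + \tfrac{\lambda}{2}\sum_{k,j} x_{kj}\|y_j - a_k\|_2^2$. The coefficient step is projected gradient descent, and a fixed number of these steps could play the role of encoder layers in the spirit of algorithm unrolling. The dictionary step is the closed-form minimizer $A\,(XX^\top + \lambda\,\mathrm{diag}(s)) = (1+\lambda)\,YX^\top$ with $s_k = \sum_j x_{kj}$, which is unique whenever that matrix is invertible (for example, when every landmark receives positive total weight). The embedding step is PCA on the coefficient vectors, which is only one possible reading of "covariance of the coefficient matrix." All function names are hypothetical.

```python
import numpy as np


def project_simplex(V):
    """Euclidean projection of each column of V onto the probability simplex
    (sort-based method of Duchi et al., 2008)."""
    m, n = V.shape
    U = -np.sort(-V, axis=0)                       # columns sorted in descending order
    css = np.cumsum(U, axis=0) - 1.0
    ks = np.arange(1, m + 1)[:, None]
    active = U - css / ks > 0                      # True on a prefix of each column
    rho = m - 1 - np.argmax(active[::-1], axis=0)  # last True index per column
    theta = css[rho, np.arange(n)] / (rho + 1)
    return np.maximum(V - theta, 0.0)


def locality_weights(Y, A):
    """W[k, j] = ||y_j - a_k||^2 (ASSUMED weights for the weighted l1 penalty)."""
    sq = (A ** 2).sum(0)[:, None] + (Y ** 2).sum(0)[None, :] - 2.0 * A.T @ Y
    return np.maximum(sq, 0.0)


def objective(Y, A, X, lam):
    """0.5 * ||Y - A X||_F^2 + 0.5 * lam * sum_{k,j} W[k, j] * X[k, j]."""
    return 0.5 * np.sum((Y - A @ X) ** 2) + 0.5 * lam * np.sum(locality_weights(Y, A) * X)


def update_coefficients(Y, A, X, lam, n_steps=50):
    """Projected gradient descent on X with A fixed (step size 1/L).
    A fixed number of steps could serve as layers of an unrolled encoder (assumption)."""
    W = locality_weights(Y, A)
    L = max(np.linalg.norm(A, 2) ** 2, 1e-12)      # Lipschitz constant of the smooth part
    for _ in range(n_steps):
        grad = A.T @ (A @ X - Y) + 0.5 * lam * W
        X = project_simplex(X - grad / L)
    return X


def update_dictionary(Y, X, lam):
    """Exact minimizer over A with X fixed:
    A (X X^T + lam * diag(s)) = (1 + lam) Y X^T, where s_k = sum_j X[k, j]."""
    M = X @ X.T + lam * np.diag(X.sum(axis=1))
    B = (1.0 + lam) * Y @ X.T
    # M is symmetric, so A M = B is equivalent to M A^T = B^T.
    return np.linalg.lstsq(M, B.T, rcond=None)[0].T


def kds_sketch(Y, m, lam=0.1, n_outer=20, seed=0):
    """Alternating minimization; Y holds one data point per column (d x n)."""
    rng = np.random.default_rng(seed)
    n = Y.shape[1]
    A = Y[:, rng.choice(n, size=m, replace=False)].copy()  # landmarks start at data points
    X = np.full((m, n), 1.0 / m)                           # barycenter of the simplex
    history = [objective(Y, A, X, lam)]
    for _ in range(n_outer):
        X = update_coefficients(Y, A, X, lam)
        A = update_dictionary(Y, X, lam)
        history.append(objective(Y, A, X, lam))
    return A, X, history


def coefficient_embedding(X, dim=2):
    """Project coefficient vectors onto the top eigenvectors of their covariance
    (PCA on X; an ASSUMED reading of 'covariance of the coefficient matrix')."""
    C = np.cov(X)                                   # m x m; rows of X are variables
    evals, evecs = np.linalg.eigh(C)                # eigenvalues in ascending order
    top = evecs[:, ::-1][:, :dim]
    return top.T @ (X - X.mean(axis=1, keepdims=True))  # dim x n


# Usage on toy 2-D data (illustrative only).
rng = np.random.default_rng(1)
Y = rng.normal(size=(2, 200))
A, X, history = kds_sketch(Y, m=10, lam=0.5)
Z = coefficient_embedding(X, dim=2)
```

Each half-step either takes a projected gradient step with step size $1/L$ or minimizes exactly over `A`, so the recorded objective should be non-increasing up to floating-point error. `np.linalg.lstsq` is used instead of a plain solve so that the dictionary step still returns a minimizer when some landmark receives zero total weight and the system matrix is singular.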
