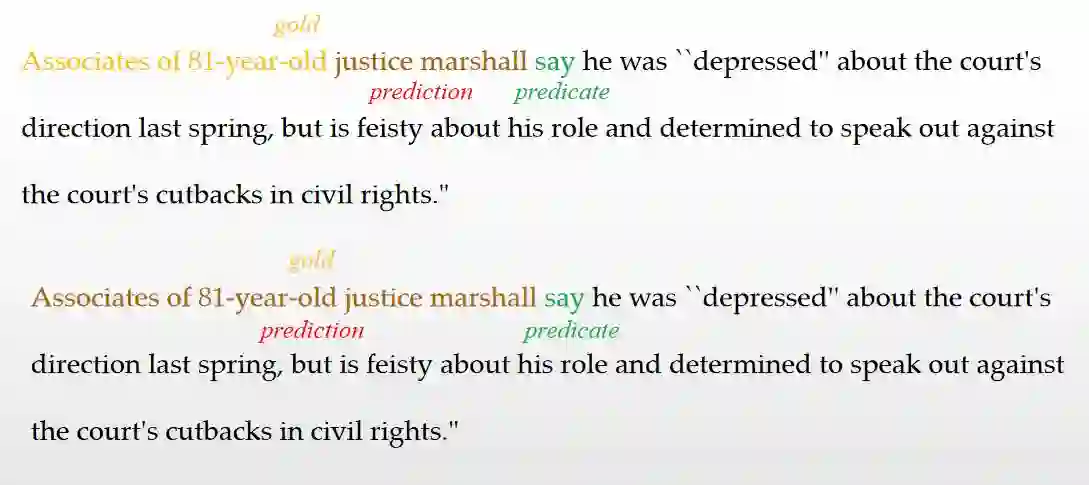

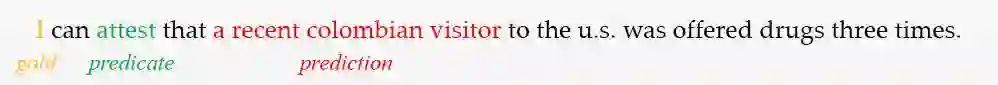

Large Language Models (LLMs) play a crucial role in capturing structured semantics, which can enhance language understanding, improve interpretability, and reduce bias. Nevertheless, there is ongoing debate over the extent to which LLMs actually grasp structured semantics. To assess this, we propose Semantic Role Labeling (SRL) as a fundamental task for probing LLMs' ability to extract structured semantics. Our assessment uses a prompting approach, which yields a few-shot SRL parser we call PromptSRL. PromptSRL enables LLMs to map natural language to explicit semantic structures, providing an interpretable window into the properties of LLMs. We find promising potential: LLMs can indeed capture semantic structures, although scaling up does not always mirror this potential. We also observe limitations of LLMs, for example on C-arguments (continuation arguments). Finally, we were surprised to discover significant overlap between the errors made by LLMs and those made by untrained humans, accounting for almost 30% of all errors.
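The prompting approach can be illustrated with a minimal sketch of how a few-shot SRL prompt might be assembled and how a model's text completion might be mapped back to an explicit semantic structure. It assumes a simple PropBank-style plain-text format ("LABEL: span" pairs separated by semicolons). The prompt wording, the `Example` class, and the `build_prompt` / `parse_roles` helpers are hypothetical and do not reproduce PromptSRL's actual prompts or parser. The call to an LLM is deliberately left out, and a made-up completion stands in for model output.

```python
import re
from dataclasses import dataclass


@dataclass
class Example:
    """Hypothetical few-shot demonstration: a sentence, its predicate, and gold roles."""
    sentence: str
    predicate: str
    roles: dict[str, str]  # PropBank-style label -> argument span, e.g. {"ARG0": "The cat"}


def format_roles(roles: dict[str, str]) -> str:
    # Assumed output format: "LABEL: span" pairs joined by semicolons.
    return "; ".join(f"{label}: {span}" for label, span in roles.items())


def build_prompt(demos: list[Example], sentence: str, predicate: str) -> str:
    """Assemble a few-shot prompt (hypothetical wording, not PromptSRL's)."""
    parts = ["Label the semantic roles of the given predicate in PropBank style."]
    for d in demos:
        parts.append(
            f"Sentence: {d.sentence}\nPredicate: {d.predicate}\nRoles: {format_roles(d.roles)}"
        )
    # The query ends with an open "Roles:" slot for the model to complete.
    parts.append(f"Sentence: {sentence}\nPredicate: {predicate}\nRoles:")
    return "\n\n".join(parts)


# A label such as ARG0, ARGM-TMP or C-ARG1, a colon, then the argument span.
ROLE_RE = re.compile(r"\s*([A-Z][A-Z0-9-]*)\s*:\s*(.+?)\s*$")


def parse_roles(completion: str) -> dict[str, str]:
    """Map the first line of a completion back to an explicit role structure."""
    lines = completion.strip().splitlines()
    if not lines:
        return {}
    roles: dict[str, str] = {}
    for chunk in lines[0].split(";"):
        match = ROLE_RE.match(chunk)
        if match:  # malformed chunks are skipped
            roles[match.group(1)] = match.group(2)
    return roles


if __name__ == "__main__":
    demo = Example("The cat chased the mouse.", "chased",
                   {"ARG0": "The cat", "ARG1": "the mouse"})
    print(build_prompt([demo], "John ate the fish yesterday.", "ate"))
    # A made-up completion standing in for real LLM output:
    print(parse_roles("ARG0: John; ARG1: the fish; ARGM-TMP: yesterday"))
```

Keying roles by label keeps the sketch short, but it cannot represent a predicate with two arguments of the same label; a list of (label, span) pairs would be needed for that.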
