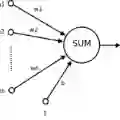

Artificial neural networks have advanced largely by scaling up their dimensions, but conventional computing hardware runs them inefficiently because of the von Neumann bottleneck. In-memory computing architectures, such as those based on memristors, are promising but are limited by hardware non-idealities. This work proposes and experimentally demonstrates layer ensemble averaging, a technique to map pre-trained neural network solutions from software to defective hardware crossbars of emerging memory devices and reliably attain near-software performance on inference. The approach is investigated using a custom 20,000-device hardware prototyping platform on a continual learning problem, where a network must learn new tasks without catastrophically forgetting previously learned information. Results demonstrate that, by trading off the number of devices required for layer mapping, layer ensemble averaging can reliably boost defective memristive network performance up to the software baseline. For the investigated problem, the average multi-task classification accuracy improves from 61 % to 72 % (within 1 % of the software baseline) using the proposed approach.
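The core idea of trading extra devices for accuracy can be illustrated with a small simulation. The following is only a minimal sketch, not the authors' implementation: it assumes a simple stuck-at-zero fault model, ideal analog reads, and plain averaging of the outputs of several redundant mappings of one layer. The helper names (`make_defective_copy`, `layer_ensemble_forward`, `mean_error`) and all parameter values are hypothetical.

```python
import numpy as np


def make_defective_copy(weights, fault_rate, rng):
    """Simulate mapping a layer onto one defective crossbar.

    Assumption: each device is independently stuck at zero with
    probability `fault_rate`. Real devices show other non-idealities.
    """
    stuck = rng.random(weights.shape) < fault_rate
    return np.where(stuck, 0.0, weights)


def layer_ensemble_forward(x, copies):
    """Average the outputs of several redundant mappings of one layer."""
    outputs = np.stack([x @ w for w in copies])
    return outputs.mean(axis=0)


# Hypothetical pre-trained layer and input batch.
rng = np.random.default_rng(0)
weights = rng.normal(size=(64, 10))
x = rng.normal(size=(32, 64))
ideal = x @ weights  # software (defect-free) output


def mean_error(n_copies, fault_rate=0.2):
    """Mean squared deviation from the software output for a given ensemble size."""
    copies = [make_defective_copy(weights, fault_rate, rng) for _ in range(n_copies)]
    approx = layer_ensemble_forward(x, copies)
    return float(np.mean((approx - ideal) ** 2))


for k in (1, 2, 4, 8):
    print(f"{k} copies: mean squared error = {mean_error(k):.3f}")
```

Under these assumptions, the deviation from the software output tends to shrink as more copies are averaged, at the cost of proportionally more devices per layer. Plain averaging of stuck-at-zero copies still leaves a systematic scaling bias, so a practical scheme would need to account for which devices are defective.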
