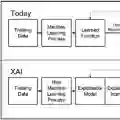

Explaining with examples is an intuitive way to justify AI decisions. However, when an example differs from the case at hand in many features and by large amounts, it is hard to judge how the decision value should change relative to that example. We draw from real estate valuation, which uses Comparables: examples with known values for comparison. Estimates are made more accurate by hypothetically adjusting the attributes of each Comparable and changing its value correspondingly, based on adjustment factors. We propose Comparables XAI for relatable example-based explanations of AI, with Trace adjustments that trace counterfactual changes from each Comparable to the Subject, one attribute at a time, monotonically along the AI feature space. In modelling and user studies, Trace-adjusted Comparables achieved the highest XAI faithfulness and precision, the highest user accuracy, and the narrowest uncertainty bounds compared to linear regression, linearly adjusted Comparables, or unadjusted Comparables. This work contributes a new analytical basis for using example-based explanations to improve user understanding of AI decisions.
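
To make the idea of a Trace adjustment concrete, the following is a minimal sketch under stated assumptions, not the authors' implementation. It moves a Comparable to the Subject one attribute at a time and adds each resulting change in the model's prediction to the Comparable's known value. The function `trace_adjust`, the model `toy_price`, the attribute names, the attribute order, and all numbers are hypothetical; the monotonic path through the feature space described above is simplified here to a single jump per attribute.

```python
from typing import Callable, Dict, List, Tuple

Features = Dict[str, float]


def trace_adjust(
    predict: Callable[[Features], float],
    comparable: Features,
    subject: Features,
    known_value: float,
    order: List[str],
) -> Tuple[float, List[Tuple[str, float]]]:
    """Adjust a Comparable's known value toward the Subject, one attribute at a time.

    Illustrative assumption: each attribute is switched to the Subject's value
    in a single step, in the order given by `order`.
    """
    current = dict(comparable)  # copy so the caller's Comparable is not mutated
    value = known_value
    steps: List[Tuple[str, float]] = []
    for name in order:
        before = predict(current)
        current[name] = subject[name]  # counterfactual change of one attribute
        after = predict(current)
        delta = after - before  # change in the model's prediction for this step
        value += delta
        steps.append((name, delta))
    return value, steps


def toy_price(x: Features) -> float:
    """Hypothetical nonlinear pricing model standing in for the AI."""
    return 1000.0 * x["area"] + 5000.0 * x["rooms"] + 2.0 * x["area"] * x["rooms"]


comparable = {"area": 80.0, "rooms": 3.0}
subject = {"area": 100.0, "rooms": 4.0}
known_value = 100_000.0  # hypothetical known value of the Comparable

adjusted, steps = trace_adjust(
    toy_price, comparable, subject, known_value, ["area", "rooms"]
)
for name, delta in steps:
    print(f"{name}: {delta:+.0f}")
print(f"adjusted value: {adjusted:.0f}")
```

Because the per-attribute changes telescope, their sum equals the model's prediction for the Subject minus its prediction for the Comparable, so the adjusted value carries over whatever gap exists between the Comparable's known value and the model's prediction for it. With an interaction term such as the one in `toy_price`, the size of each individual step depends on the chosen attribute order.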
