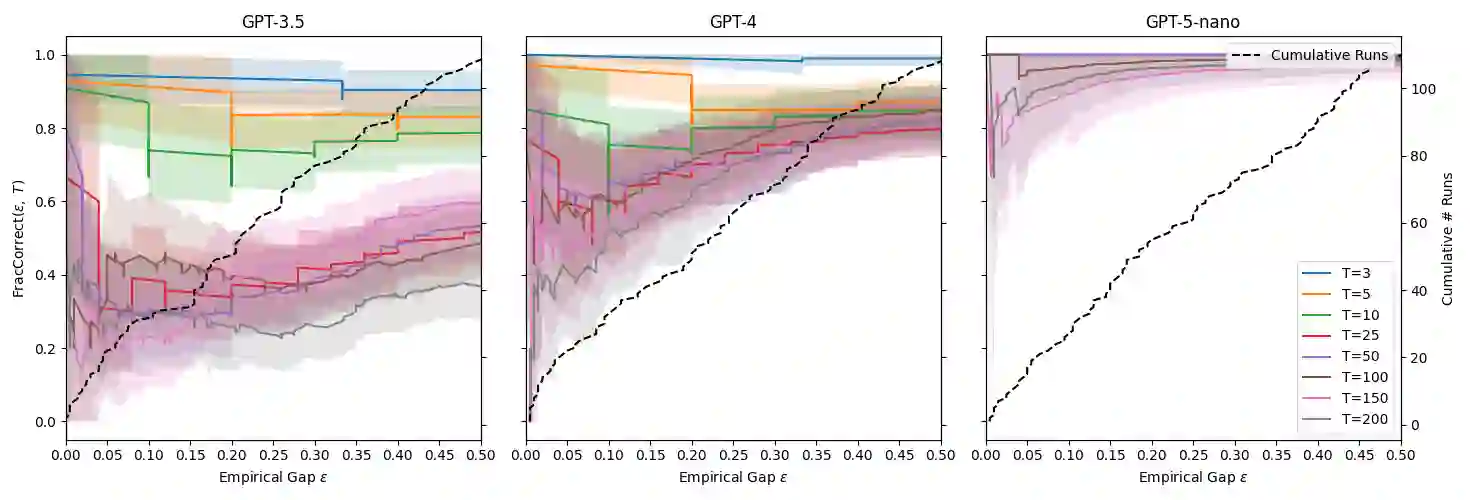

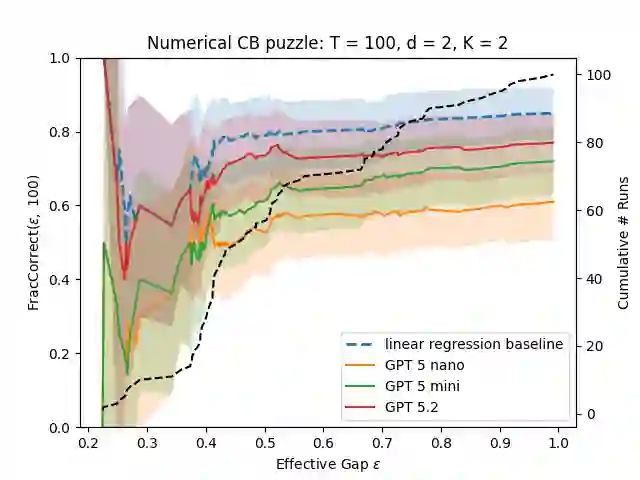

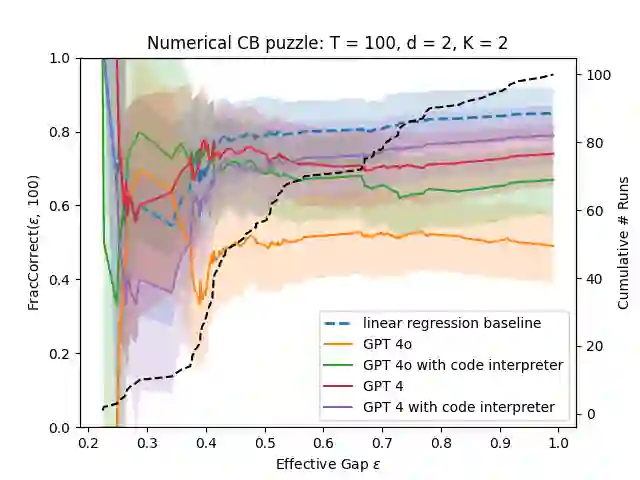

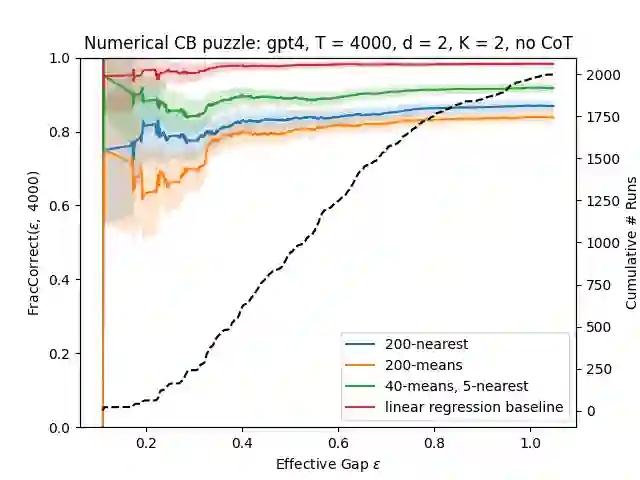

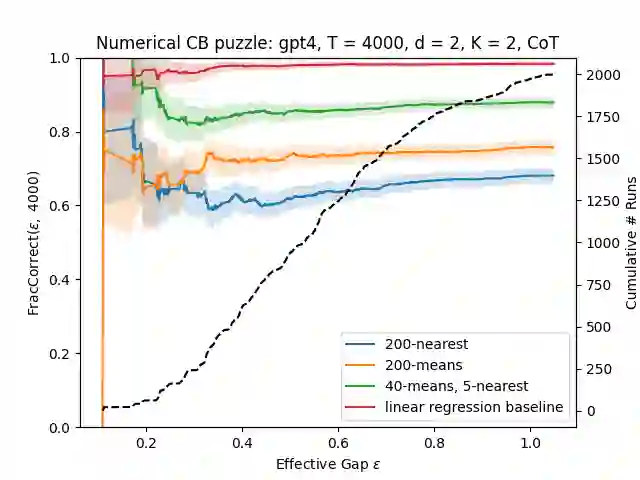

We evaluate the ability of the current generation of large language models (LLMs) to help a decision-making agent facing an exploration-exploitation tradeoff. While previous work has largely studied the ability of LLMs to solve combined exploration-exploitation tasks, we take a more systematic approach and use LLMs to explore and exploit in silos in various (contextual) bandit tasks. We find that reasoning models show the most promise for solving exploitation tasks, although they are still too expensive or too slow to be used in many practical settings. Motivated by this, we study tool use and in-context summarization using non-reasoning models. We find that these mitigations can substantially improve performance on medium-difficulty tasks. Even then, however, all LLMs we study perform worse than a simple linear regression, even in non-linear settings. On the other hand, we find that LLMs do help in exploring large action spaces with inherent semantics by suggesting suitable candidates to explore.
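To make the linear regression baseline concrete, the following is a minimal sketch of exploitation in isolation on a contextual bandit: given a logged history of (context, action, reward) triples, fit one least-squares reward model per arm and pick the arm with the highest predicted reward for a new context. This is not the authors' implementation. The function names (`simulate_history`, `fit_per_arm_linear`, `exploit`), the synthetic linear environment, and the uniformly random logging policy are all assumptions made for illustration.

```python
import numpy as np


def simulate_history(theta, n_rounds, noise, rng):
    """Generate logged bandit data from a hypothetical linear environment.

    theta has shape (n_arms, dim); arms are chosen uniformly at random
    (an assumed logging policy, not one taken from the paper).
    """
    n_arms, dim = theta.shape
    contexts = rng.normal(size=(n_rounds, dim))
    actions = rng.integers(n_arms, size=n_rounds)
    # Reward of the chosen arm: <theta[a], x> plus Gaussian noise.
    mean_reward = np.einsum("ij,ij->i", contexts, theta[actions])
    rewards = mean_reward + noise * rng.normal(size=n_rounds)
    return contexts, actions, rewards


def fit_per_arm_linear(contexts, actions, rewards, n_arms):
    """Fit one least-squares reward model (with bias term) per arm."""
    X = np.hstack([contexts, np.ones((len(contexts), 1))])
    weights = np.zeros((n_arms, X.shape[1]))
    for a in range(n_arms):
        mask = actions == a
        if mask.any():  # arms never pulled keep an all-zero model
            weights[a], *_ = np.linalg.lstsq(X[mask], rewards[mask], rcond=None)
    return weights


def exploit(weights, context):
    """Pure exploitation: return the arm with the highest predicted reward."""
    x = np.append(context, 1.0)
    return int(np.argmax(weights @ x))


rng = np.random.default_rng(0)
true_theta = rng.normal(size=(3, 4))  # 3 arms, 4-dimensional contexts
ctx, act, rew = simulate_history(true_theta, n_rounds=300, noise=0.1, rng=rng)
model = fit_per_arm_linear(ctx, act, rew, n_arms=3)
new_context = rng.normal(size=4)
print("chosen arm:", exploit(model, new_context))
```

In an evaluation of the kind described above, an LLM would be shown the same history and asked for the best arm, and its choice would be compared against the output of `exploit`.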
