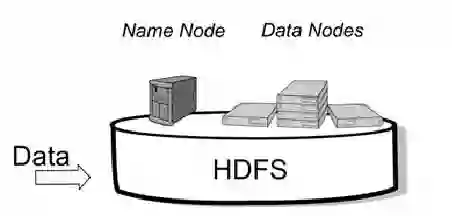

Distributed file systems (DFSs) are essential for managing vast datasets across multiple servers, offering scalability, fault tolerance, and data accessibility. This paper presents a comprehensive evaluation of three prominent DFSs: Google File System (GFS), Hadoop Distributed File System (HDFS), and MinIO. The evaluation focuses on their fault tolerance mechanisms and their scalability under varying data loads and client demands. We analyze in detail how these systems handle data redundancy, server failures, and client access protocols to ensure reliability in dynamic, large-scale environments. We also assess the impact of system design on performance, particularly in distributed and cloud computing architectures. By comparing the strengths and limitations of each DFS, the paper provides practical guidance for selecting the most appropriate system for different enterprise needs, from high-availability storage to big data analytics.
