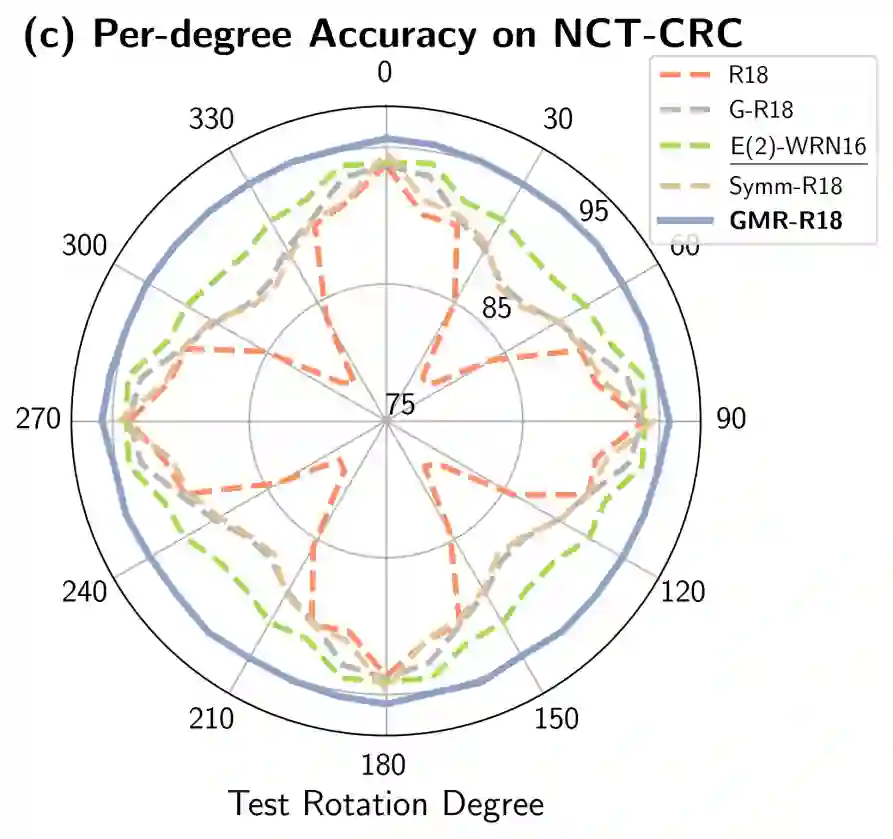

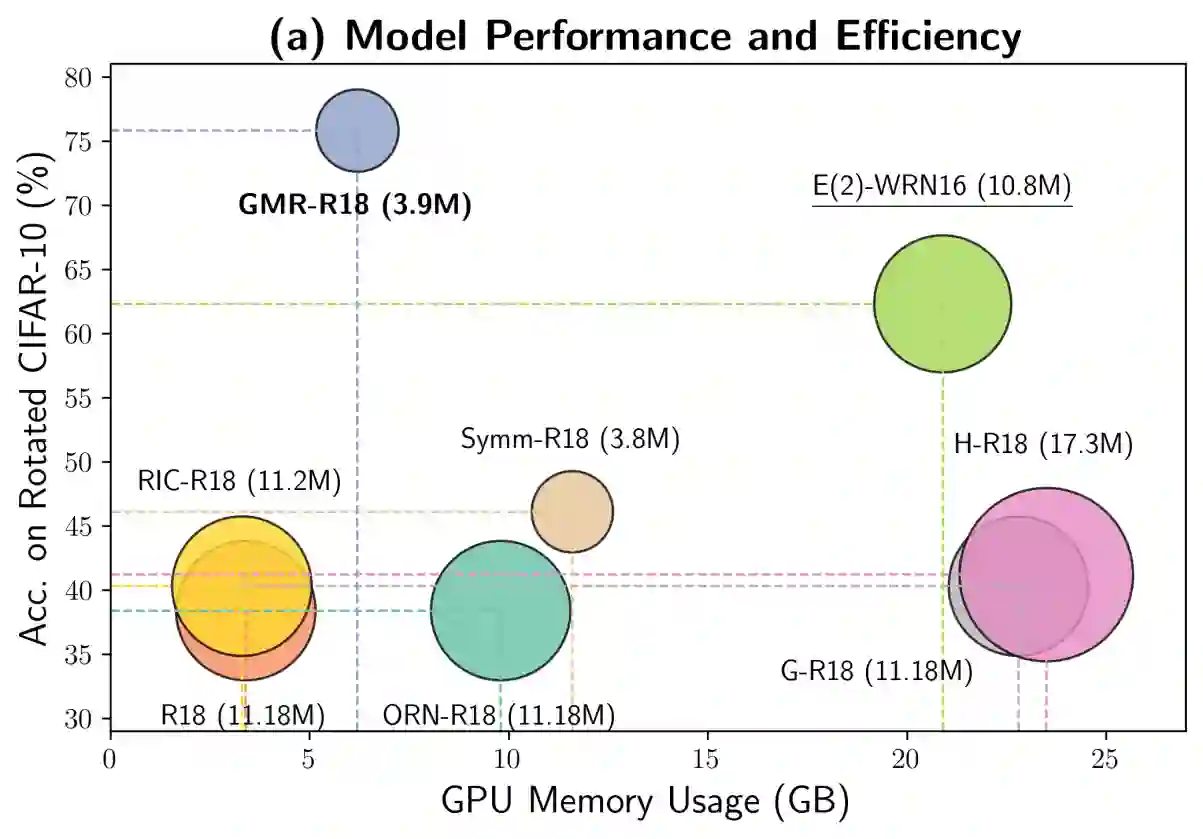

Symmetry, in which certain features remain invariant under geometric transformations, often serves as a powerful prior in designing convolutional neural networks (CNNs). While conventional CNNs inherently support translational equivariance, extending this property to rotation and reflection has proven challenging, often forcing a compromise between equivariance, efficiency, and information loss. In this work, we introduce Gaussian Mixture Ring Convolution (GMR-Conv), an efficient convolution kernel that smooths radial symmetry using a mixture of Gaussian-weighted rings. This design mitigates the discretization errors of circular kernels, thereby preserving robust rotation and reflection equivariance without incurring computational overhead. We further optimize both the space and speed efficiency of GMR-Conv via a novel parameterization and computation strategy, allowing larger kernels at an acceptable cost. Extensive experiments on eight classification datasets and one segmentation dataset demonstrate that GMR-Conv not only matches the performance of conventional CNNs but can also surpass it in applications with orientation-less data. GMR-Conv is also shown to be more robust and efficient than state-of-the-art equivariant learning methods. Our work provides encouraging empirical evidence that carefully applied radial symmetry can alleviate the challenges of information loss, marking a promising advance in equivariant network architectures. The code is available at https://github.com/XYPB/GMR-Conv.
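To make the idea of a kernel built from a mixture of Gaussian-weighted rings concrete, the following is a minimal sketch and not the authors' implementation (see the repository above for that). It assumes rings centered at integer radii 0, 1, 2, ..., one scalar weight per ring, and a shared Gaussian width `sigma`. The names `gaussian_ring_kernel`, `ring_weights`, `sigma`, `rot90`, and `flip_horizontal` are hypothetical and chosen only for illustration.

```python
import math


def gaussian_ring_kernel(size, ring_weights, sigma=0.5):
    """Build a size x size kernel as a weighted sum of Gaussian rings.

    Assumption (not from the paper): ring k sits at radius k from the
    kernel center, and every ring shares the same Gaussian width sigma.
    """
    center = (size - 1) / 2.0
    kernel = [[0.0] * size for _ in range(size)]
    for i in range(size):
        for j in range(size):
            # The kernel value depends only on the distance to the center,
            # which is what makes the kernel radially symmetric.
            r = math.hypot(i - center, j - center)
            kernel[i][j] = sum(
                w * math.exp(-((r - k) ** 2) / (2.0 * sigma ** 2))
                for k, w in enumerate(ring_weights)
            )
    return kernel


def rot90(kernel):
    """Rotate a square kernel by 90 degrees."""
    return [list(row) for row in zip(*kernel)][::-1]


def flip_horizontal(kernel):
    """Mirror a kernel left to right."""
    return [row[::-1] for row in kernel]


def max_abs_diff(a, b):
    """Largest element-wise absolute difference between two kernels."""
    return max(abs(x - y) for ra, rb in zip(a, b) for x, y in zip(ra, rb))


if __name__ == "__main__":
    k = gaussian_ring_kernel(5, ring_weights=[1.0, 0.5, -0.25])
    print("rotation difference:", max_abs_diff(k, rot90(k)))
    print("reflection difference:", max_abs_diff(k, flip_horizontal(k)))
```

Because each entry depends only on its distance from the center, the kernel is unchanged by 90-degree rotations and by reflections on the pixel grid, so both printed differences are zero up to floating-point error. The sketch only checks these grid-aligned transformations. Behavior under arbitrary rotation angles, where the abstract says Gaussian smoothing mitigates discretization error, is not demonstrated here.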
