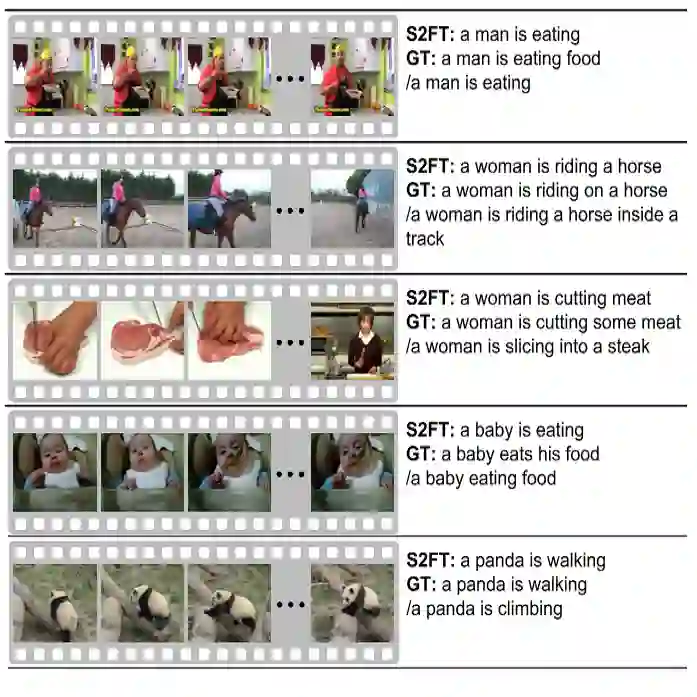

Existing video captioning methods represent object behavior only shallowly or simplistically, which yields superficial and ambiguous descriptions. Object behavior, however, is dynamic and complex. To capture it comprehensively, we propose a dynamic action semantic-aware graph transformer. First, a multi-scale temporal modeling module is designed to flexibly learn long- and short-term latent action features. It acquires latent action features across time scales while also attending to local latent action details, which improves the coherence and sensitivity of the latent action representations. Second, a visual-action semantic-aware module is proposed to adaptively capture semantic representations related to object behavior, which improves the richness and accuracy of the action representations. Together, these two modules yield rich behavior representations for generating human-like natural descriptions. Finally, these behavior representations and the object representations are used to construct a temporal object-action graph, which is fed into the graph transformer to model the complex temporal dependencies between objects and actions. To avoid adding complexity at inference time, the behavioral knowledge of the objects is distilled into a simple network through knowledge distillation. Experimental results on the MSVD and MSR-VTT datasets demonstrate that the proposed method achieves significant performance improvements across multiple metrics.
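The abstract does not say which distillation objective is used, so the following is only a minimal sketch of the generic soft-label formulation of knowledge distillation (temperature-scaled KL divergence between teacher and student output distributions). It is not the authors' loss. The function names `log_softmax` and `distillation_loss`, the `temperature` parameter, and the use of logits over a vocabulary are all assumptions made for illustration.

```python
import math


def log_softmax(logits, temperature=1.0):
    """Numerically stable log-softmax of temperature-scaled logits."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    log_sum_exp = peak + math.log(sum(math.exp(s - peak) for s in scaled))
    return [s - log_sum_exp for s in scaled]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2.

    Hypothetical helper: assumes the teacher is the full (heavier) model
    and the student is the simple network kept for inference.
    """
    log_p_teacher = log_softmax(teacher_logits, temperature)
    log_p_student = log_softmax(student_logits, temperature)
    kl = sum(
        math.exp(lt) * (lt - ls)
        for lt, ls in zip(log_p_teacher, log_p_student)
    )
    # The T^2 factor keeps gradient magnitudes comparable across temperatures.
    return (temperature ** 2) * kl


if __name__ == "__main__":
    teacher = [2.0, 0.5, -1.0]
    student = [1.0, 1.0, 0.0]
    print(distillation_loss(student, teacher))
```

In a setup like this, the loss is zero when the student reproduces the teacher's distribution and grows as the two diverge, so only the student needs to run at inference time.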
