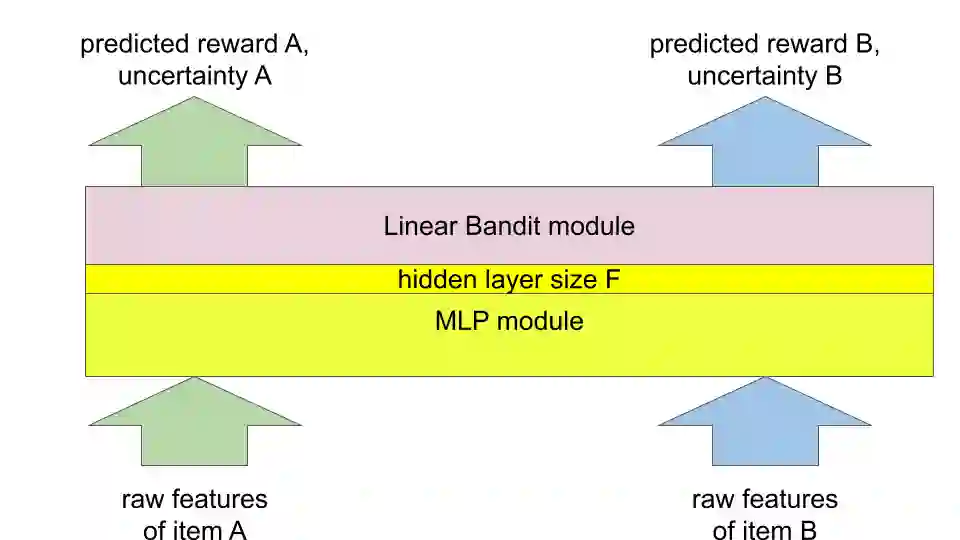

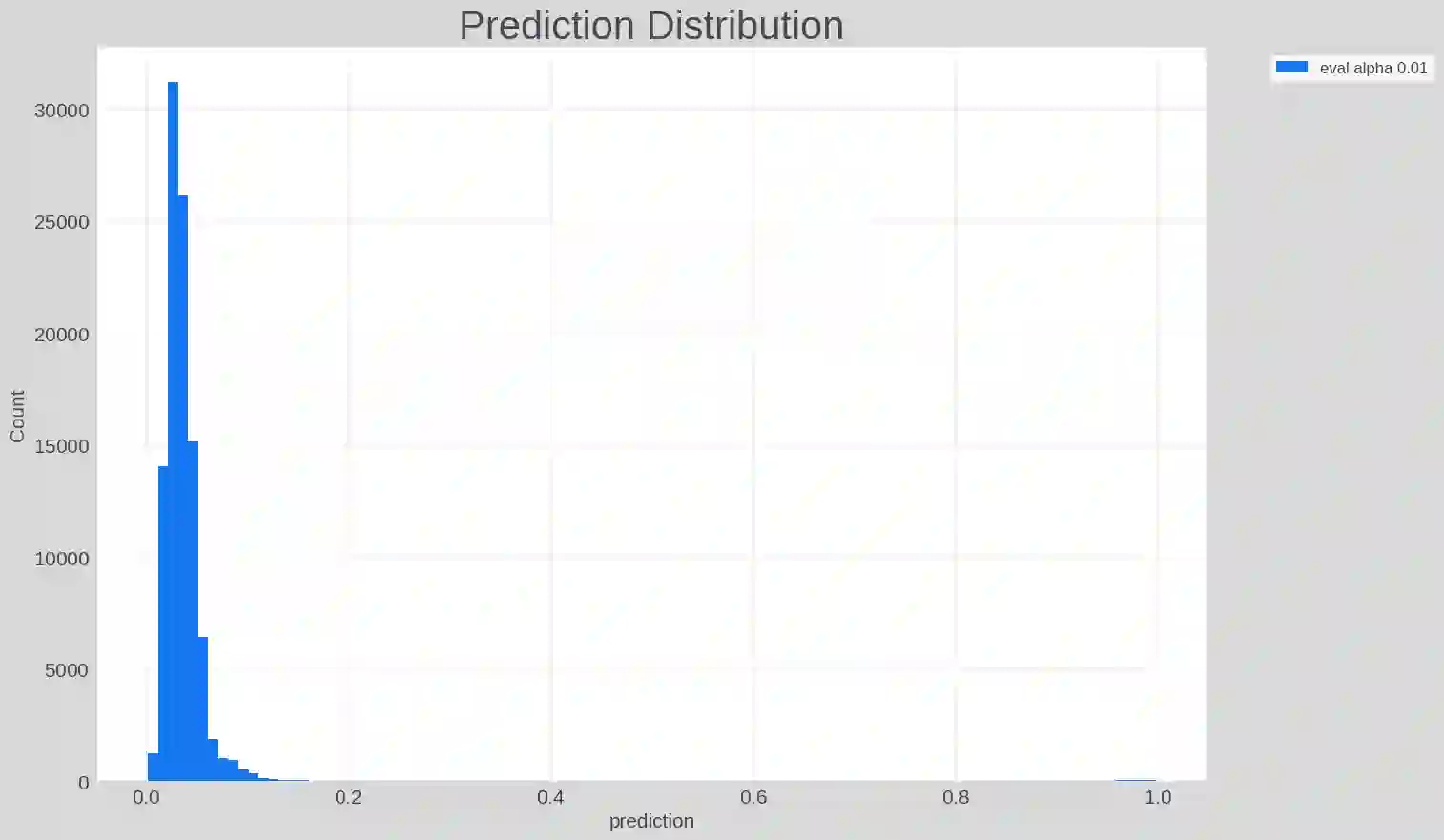

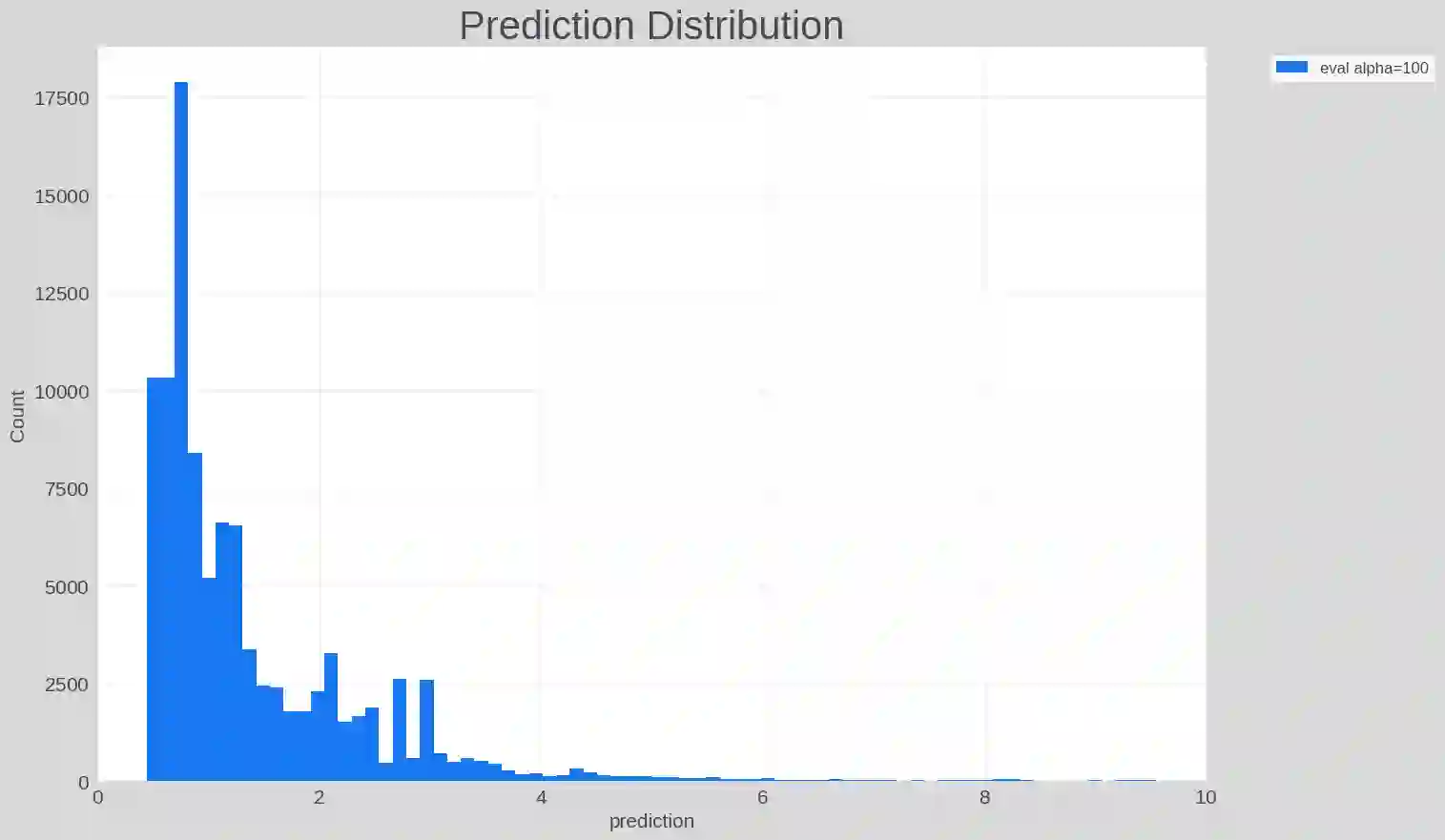

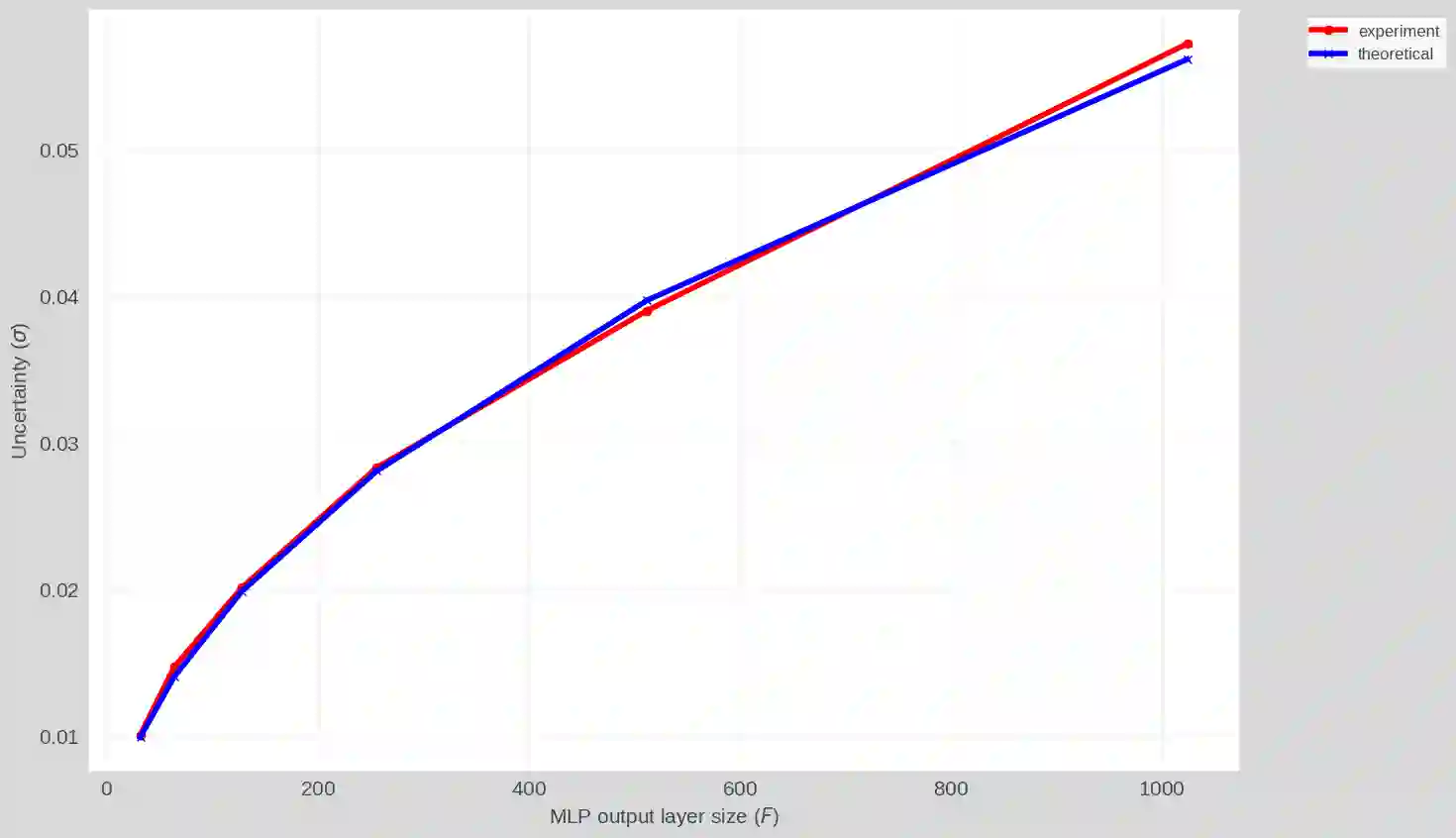

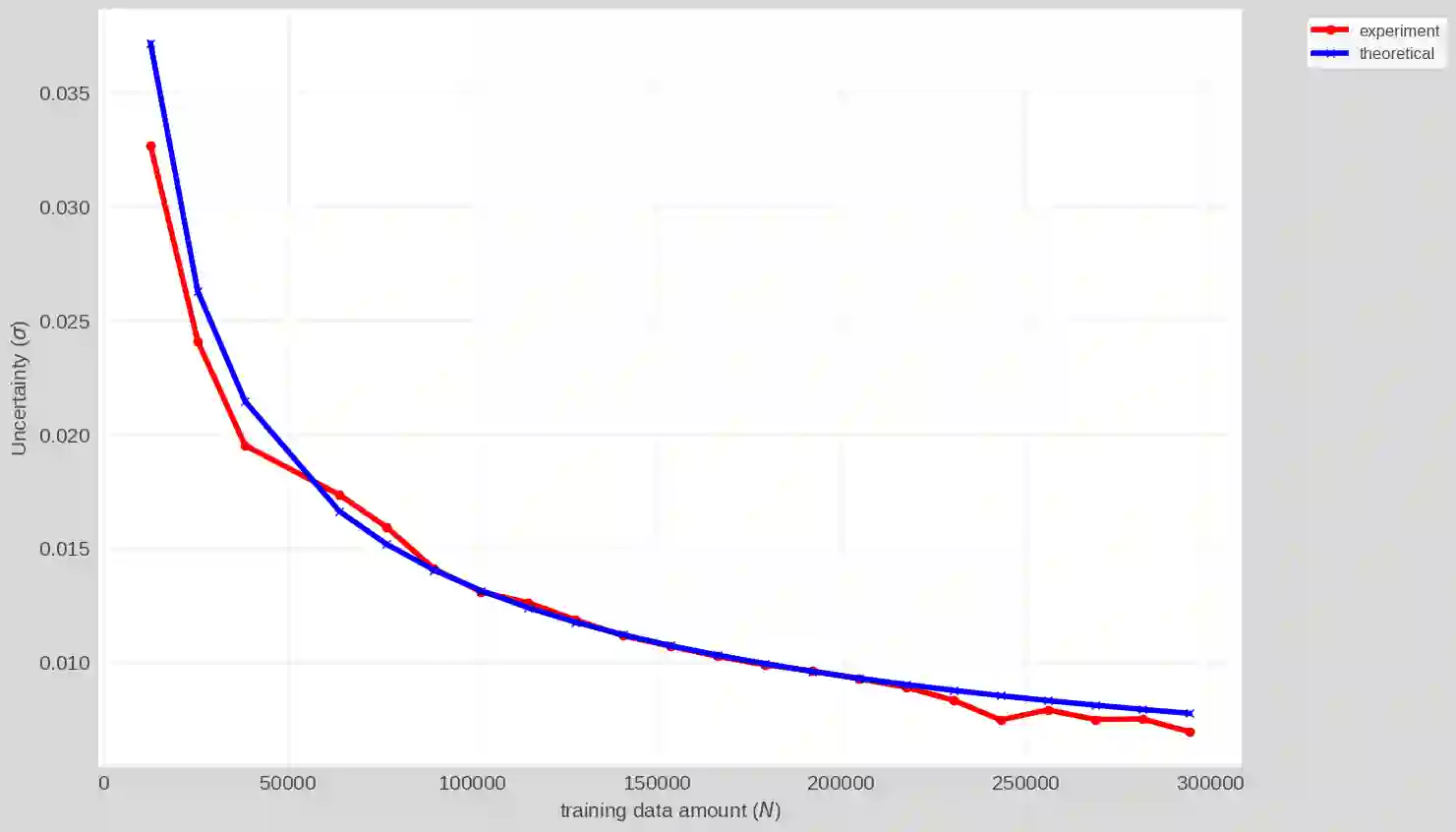

Contextual bandit learning is increasingly favored in modern large-scale recommendation systems. To better utilize contextual information and the available user or item features, neural networks have been integrated into contextual bandit learning, which has triggered significant interest from both academia and industry. However, a major challenge arises when implementing a disjoint neural contextual bandit solution in large-scale recommendation systems, where each item or user may correspond to a separate bandit arm. The huge number of items to recommend poses a significant hurdle for real-world production deployment. This paper focuses on a joint neural contextual bandit solution, which serves all items to be recommended with a single model. The model outputs a predicted reward $\mu$ and an uncertainty $\sigma$, which are combined through a hyper-parameter $\alpha$ that balances exploitation and exploration, e.g., $\mu + \alpha \sigma$. Tuning $\alpha$ is typically heuristic and complex in practice due to its stochastic nature. To address this challenge, we provide both theoretical analysis and experimental findings regarding the uncertainty $\sigma$ of the joint neural contextual bandit model. Our analysis reveals that $\sigma$ has an approximate square-root relationship with the size of the last hidden layer $F$ and an inverse square-root relationship with the amount of training data $N$, i.e., $\sigma \propto \sqrt{\frac{F}{N}}$. The experiments, conducted with real industrial data, align with the theoretical analysis, help in understanding model behavior, and assist hyper-parameter tuning during both offline training and online deployment.
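The scaling $\sigma \propto \sqrt{F/N}$ can be illustrated numerically with a minimal sketch. The abstract does not say how $\sigma$ is computed, so the code below assumes a LinUCB-style estimate on last-layer features, $\sigma(x) = \sqrt{x^\top A^{-1} x}$ with $A = \lambda I + \sum_i x_i x_i^\top$, and uses i.i.d. standard normal vectors as a stand-in for the $F$-dimensional last hidden layer. The function names `mean_uncertainty` and `select_arm` and the parameter `ridge` are hypothetical and chosen only for this illustration.

```python
import numpy as np


def mean_uncertainty(F, N, ridge=1.0, n_test=200, seed=0):
    """Average sigma(x) = sqrt(x^T A^{-1} x) over random test features.

    Assumption: features are i.i.d. standard normal, standing in for the
    F-dimensional output of the last hidden layer (not real model features).
    """
    rng = np.random.default_rng(seed)
    train = rng.standard_normal((N, F))      # N training feature vectors
    test = rng.standard_normal((n_test, F))  # held-out feature vectors
    # Regularized design matrix A = ridge * I + sum_i x_i x_i^T (F x F).
    A = ridge * np.eye(F) + train.T @ train
    # Solve A z = x for every test vector instead of forming A^{-1}.
    solved = np.linalg.solve(A, test.T)      # shape (F, n_test)
    sigma = np.sqrt(np.sum(test.T * solved, axis=0))
    return float(sigma.mean())


def select_arm(mu, sigma, alpha):
    """Pick the arm with the largest upper confidence score mu + alpha * sigma."""
    return int(np.argmax(np.asarray(mu) + alpha * np.asarray(sigma)))


if __name__ == "__main__":
    for F, N in [(16, 1000), (16, 4000), (64, 4000)]:
        print(F, N, mean_uncertainty(F, N), np.sqrt(F / N))
```

Under these assumptions, quadrupling $N$ roughly halves the average $\sigma$ and quadrupling $F$ roughly doubles it, so a fixed $\alpha$ explores less as training data grows and more as the last layer widens. This is only a synthetic check of the stated relationship, not a reproduction of the paper's experiments.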
