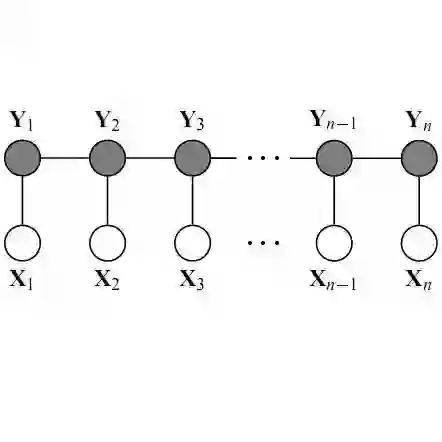

Graph Neural Networks (GNNs), now the standard approach to graph representation learning, have been shown to be vulnerable to adversarial attacks, which raises concerns about their real-world applicability. Existing defenses concentrate mainly on the training phase of GNNs, adjusting either the message-passing architecture or the pre-processing methods, and leave a noticeable gap in methods that increase robustness during inference. This study introduces RobustCRF, a post-hoc approach that enhances the robustness of GNNs at the inference stage. The method builds on statistical relational learning with a Conditional Random Field; it is model-agnostic and requires no prior knowledge of the underlying model architecture. We validate its efficacy across various models on benchmark node classification datasets.
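To make the idea of post-hoc, model-agnostic refinement concrete, the sketch below applies a generic mean-field update for a Potts-style Conditional Random Field to the class probabilities produced by an arbitrary classifier. It is an illustration only and is not the RobustCRF algorithm, whose construction the abstract does not specify. The function name `crf_refine`, the parameters `weight` and `n_iters`, the choice to couple nodes through graph adjacency, and the toy data are all assumptions introduced here.

```python
import numpy as np


def crf_refine(probs, adj, weight=1.0, n_iters=10):
    """Generic mean-field smoothing of class probabilities (hypothetical helper).

    probs : (n_nodes, n_classes) probabilities from any black-box model.
    adj   : (n_nodes, n_nodes) symmetric 0/1 adjacency matrix.
    weight: strength of the pairwise agreement term (assumed hyperparameter).
    """
    probs = np.asarray(probs, dtype=float)
    adj = np.asarray(adj, dtype=float)

    # Row-normalise the adjacency so each node averages over its neighbours;
    # isolated nodes (degree 0) keep a zero neighbour term.
    deg = adj.sum(axis=1, keepdims=True)
    norm_adj = adj / np.maximum(deg, 1.0)

    # Unary term: log of the model's own prediction (clipped to avoid log(0)).
    unary = np.log(np.clip(probs, 1e-12, None))

    q = probs.copy()
    for _ in range(n_iters):
        # Mean-field update: own evidence plus reward for agreeing with neighbours.
        logits = unary + weight * (norm_adj @ q)
        logits -= logits.max(axis=1, keepdims=True)  # numerical stability
        q = np.exp(logits)
        q /= q.sum(axis=1, keepdims=True)
    return q


# Toy example: three mutually connected nodes; the third prediction is
# slightly flipped, as a stand-in for the effect of a perturbation.
probs = np.array([[0.90, 0.10],
                  [0.90, 0.10],
                  [0.45, 0.55]])
adj = np.array([[0, 1, 1],
                [1, 0, 1],
                [1, 1, 0]])
refined = crf_refine(probs, adj, weight=2.0)
```

The function only consumes output probabilities, so it needs no access to the model's weights or architecture, which is the sense in which a post-hoc method can be model-agnostic. In this toy case the pairwise term pulls the third node back toward the label shared by its neighbours.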
