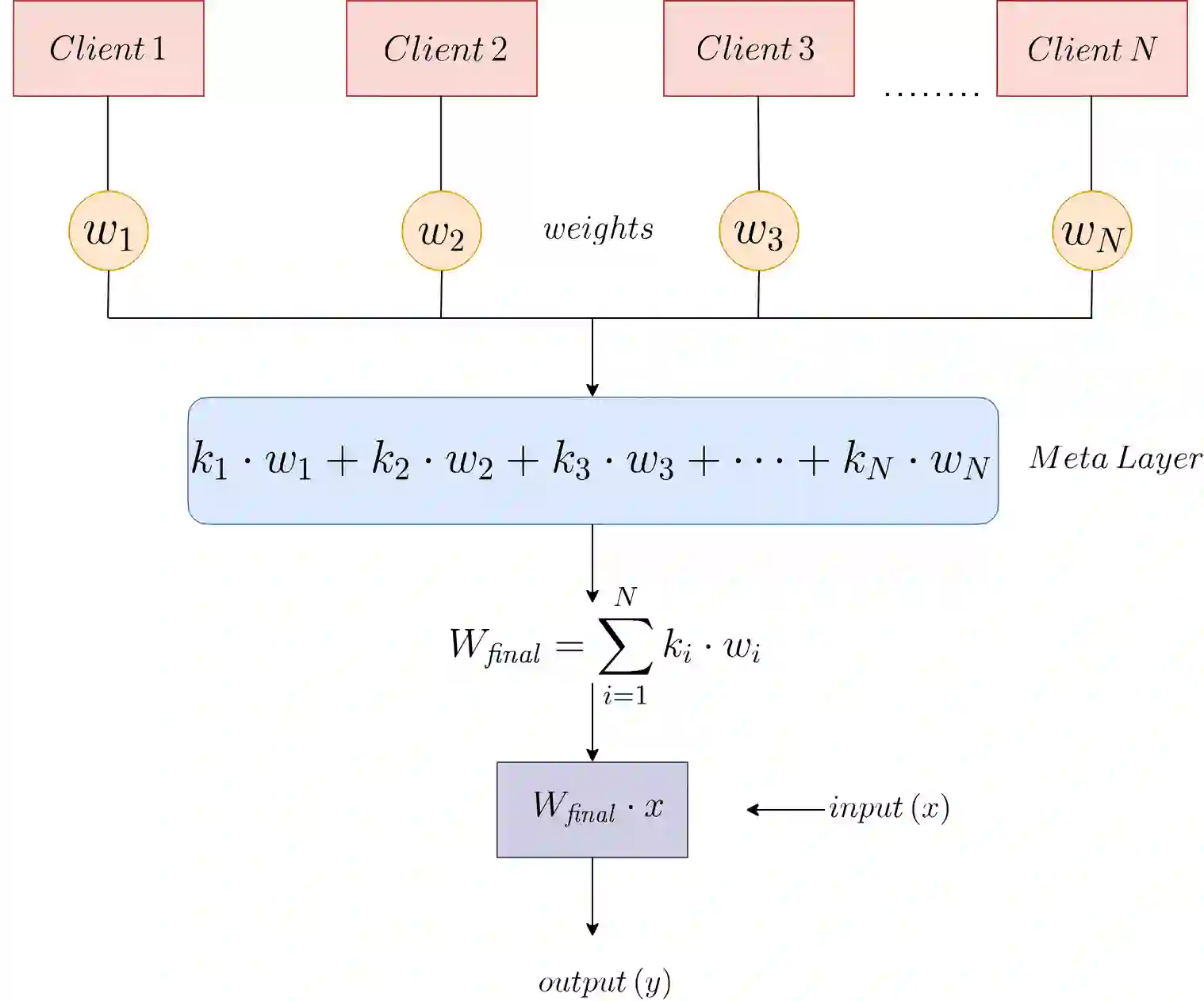

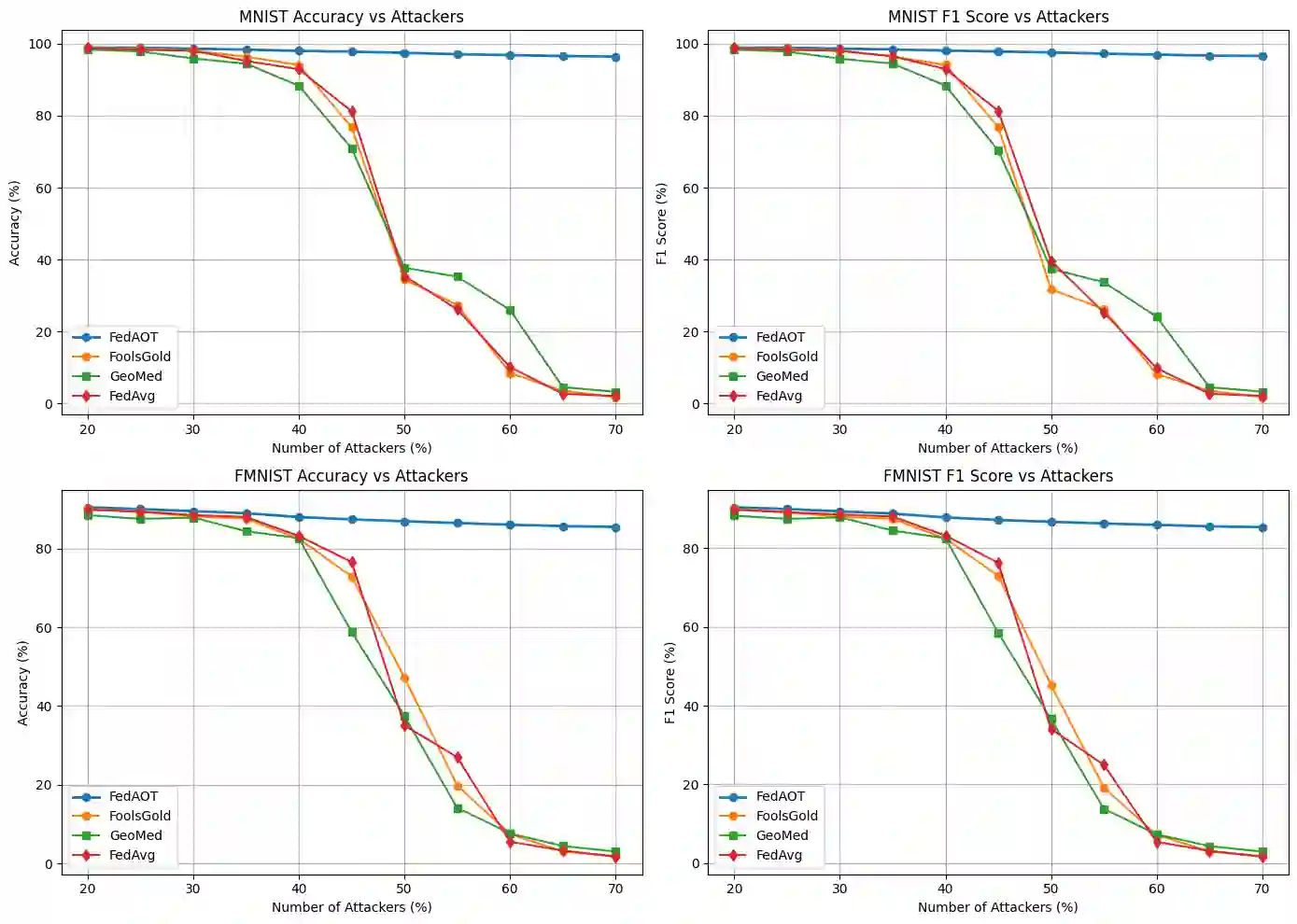

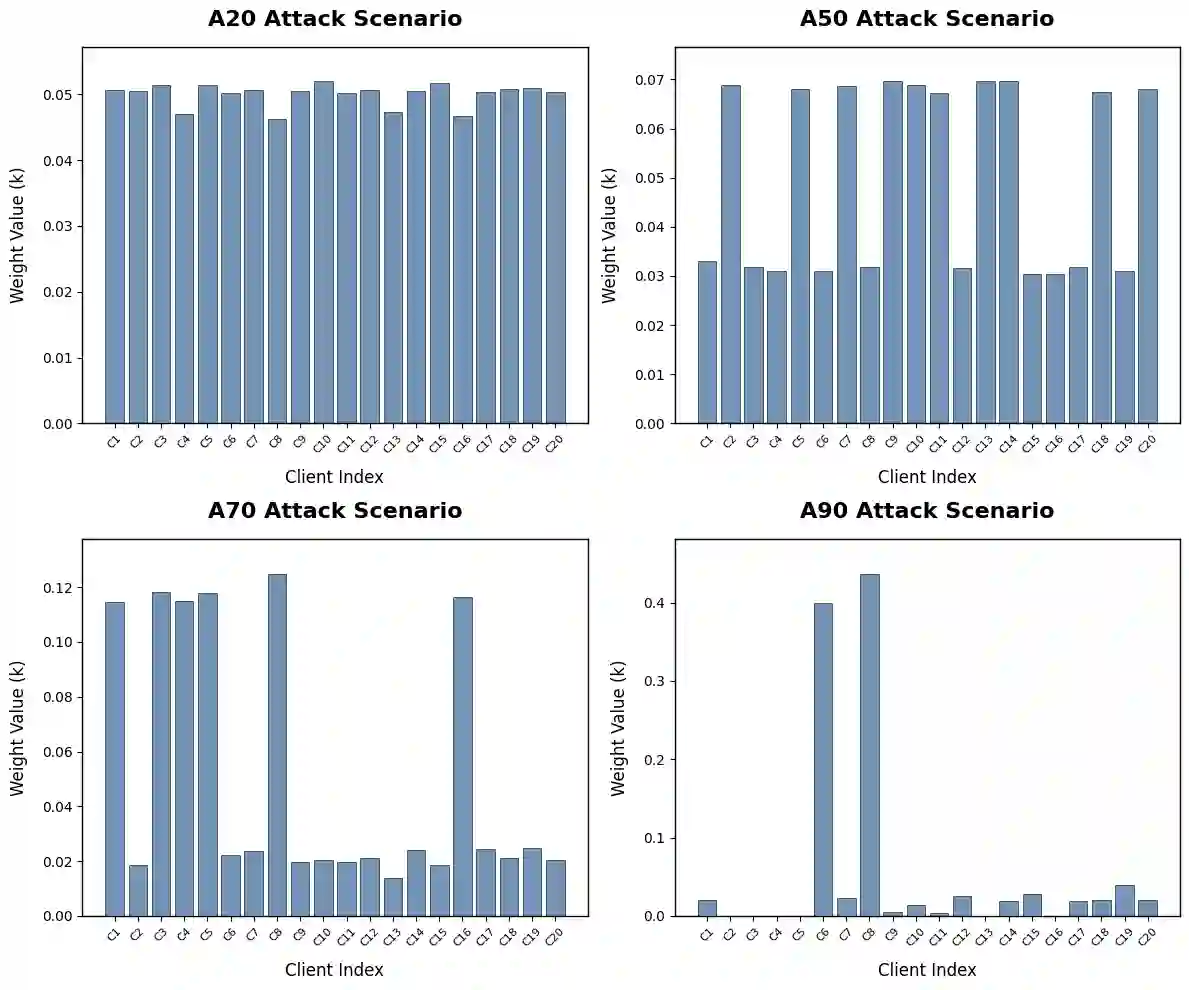

Federated Learning (FL) is increasingly applied in sectors such as healthcare, finance, and the Internet of Things (IoT), where it enables collaborative model training while safeguarding user privacy. However, FL systems are vulnerable to Byzantine adversaries that inject malicious updates, which can severely degrade global model performance. Existing defenses tend to target specific attack types and fail against untargeted strategies, such as multi-label flipping or combinations of noise and backdoor patterns. To overcome these limitations, we propose FedAOT, a novel defense mechanism that counters multi-label flipping and untargeted poisoning attacks using a meta-learning-inspired adaptive aggregation framework. FedAOT dynamically weights client updates according to their reliability, suppressing adversarial influence without relying on predefined thresholds or restrictive assumptions about the attack. Notably, FedAOT generalizes across diverse datasets and a wide range of attack types, maintaining robust performance even in previously unseen scenarios. Experimental results demonstrate that FedAOT substantially improves model accuracy and resilience while remaining computationally efficient, offering a scalable and practical solution for secure federated learning.
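The abstract says FedAOT weights client updates by their reliability instead of filtering them against a fixed threshold, but it does not give the weighting rule. The sketch below illustrates the general idea of reliability-weighted aggregation only, under stated assumptions: the coordinate-wise-median reference point, the distance-based softmax weights, and the names `reliability_weighted_aggregate` and `temperature` are hypothetical choices for this example and are not FedAOT's meta-learning-based method.

```python
import math
from statistics import median


def reliability_weighted_aggregate(updates, temperature=1.0):
    """Average client updates, down-weighting those far from a robust reference.

    Hypothetical illustration of reliability-weighted aggregation;
    NOT the FedAOT rule, which the abstract does not specify.
    `updates` is a list of equal-length lists of floats (one flattened update per client).
    """
    if not updates:
        raise ValueError("need at least one client update")
    dim = len(updates[0])
    if any(len(u) != dim for u in updates):
        raise ValueError("all updates must have the same length")

    # Robust reference point: coordinate-wise median across clients.
    ref = [median(u[j] for u in updates) for j in range(dim)]

    # Euclidean distance of each client's update from the reference.
    dists = [math.dist(u, ref) for u in updates]

    # Scale distances by their median so no fixed absolute cutoff is needed
    # (fall back to 1.0 when the median distance is zero).
    scale = median(dists) or 1.0

    # Softmax over negative scaled distances gives reliability weights summing to 1;
    # subtracting the max logit keeps math.exp numerically stable.
    logits = [-d / (scale * temperature) for d in dists]
    top = max(logits)
    exps = [math.exp(x - top) for x in logits]
    total = sum(exps)
    weights = [e / total for e in exps]

    # Weighted average of the updates, coordinate by coordinate.
    aggregated = [sum(w * u[j] for w, u in zip(weights, updates)) for j in range(dim)]
    return aggregated, weights


if __name__ == "__main__":
    # Three similar (honest-looking) updates and one outlying (poisoned-looking) update.
    clients = [[1.0, 1.0], [1.1, 0.9], [0.9, 1.1], [10.0, -10.0]]
    agg, w = reliability_weighted_aggregate(clients)
    print([round(x, 3) for x in w])  # approx. [0.422, 0.422, 0.155, 0.0]
    print([round(x, 3) for x in agg])
```

In this toy run the outlying fourth update receives an effectively zero weight, so the aggregate stays close to the three similar updates. The `temperature` parameter is still a tunable hyperparameter; a learned or meta-learned weighting, as the abstract describes for FedAOT, would replace this hand-designed rule.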

翻译:联邦学习(FL)日益广泛应用于医疗、金融和物联网等领域,它能够在保护用户隐私的同时实现协作式模型训练。然而,FL系统容易受到拜占庭攻击者的威胁,这些攻击者会注入恶意更新,从而严重损害全局模型的性能。现有的防御方法往往专注于特定攻击类型,难以应对无目标攻击策略,例如多标签翻转或噪声与后门模式的组合攻击。为了克服这些局限性,我们提出了FedAOT——一种新颖的防御机制,它利用受元学习启发的自适应聚合框架来抵御多标签翻转和无目标投毒攻击。FedAOT根据客户端更新的可靠性对其进行动态加权,从而抑制对抗性影响,且无需依赖预定义的阈值或限制性攻击假设。值得注意的是,FedAOT能够有效泛化至不同的数据集和广泛的攻击类型,即使在先前未见的场景中也能保持鲁棒性能。实验结果表明,FedAOT在保持计算效率的同时,显著提高了模型精度和抗逆性,为安全的联邦学习提供了一个可扩展且实用的解决方案。