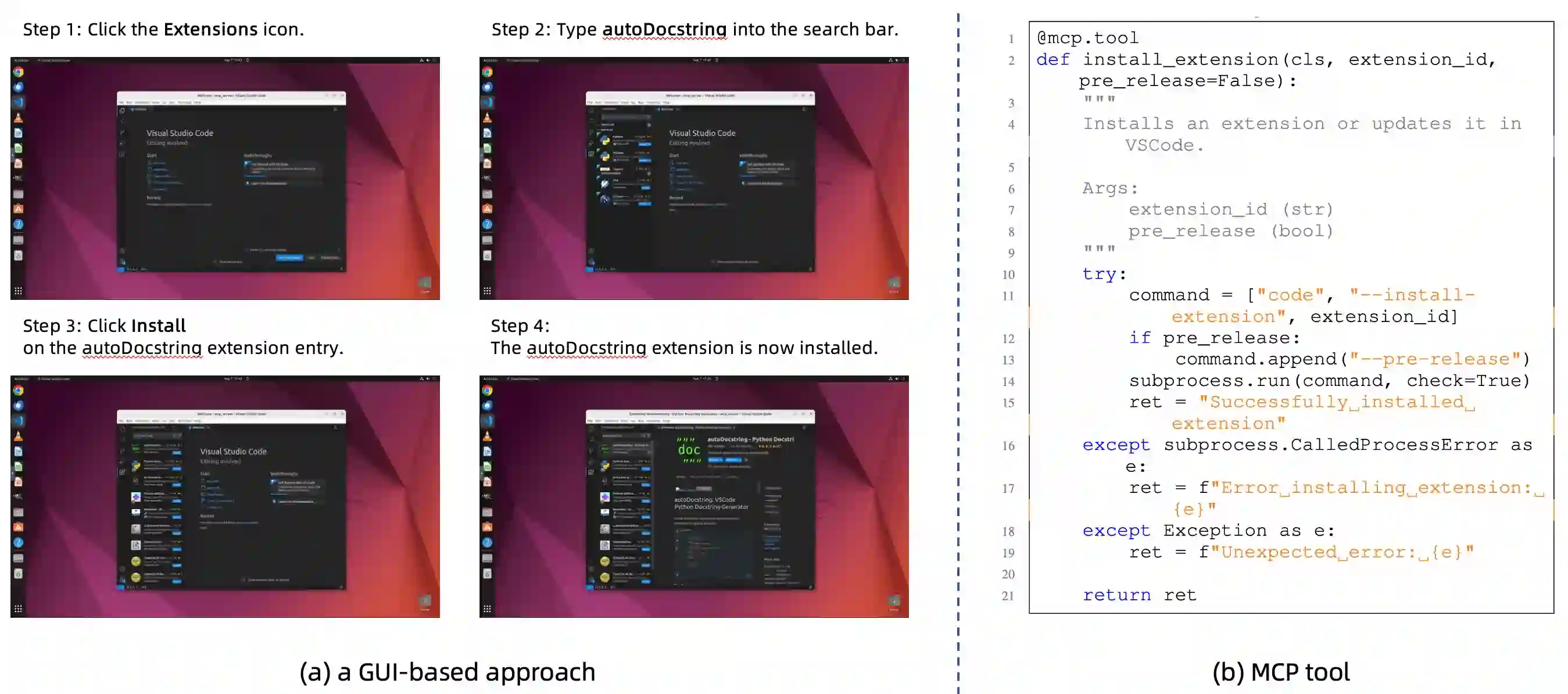

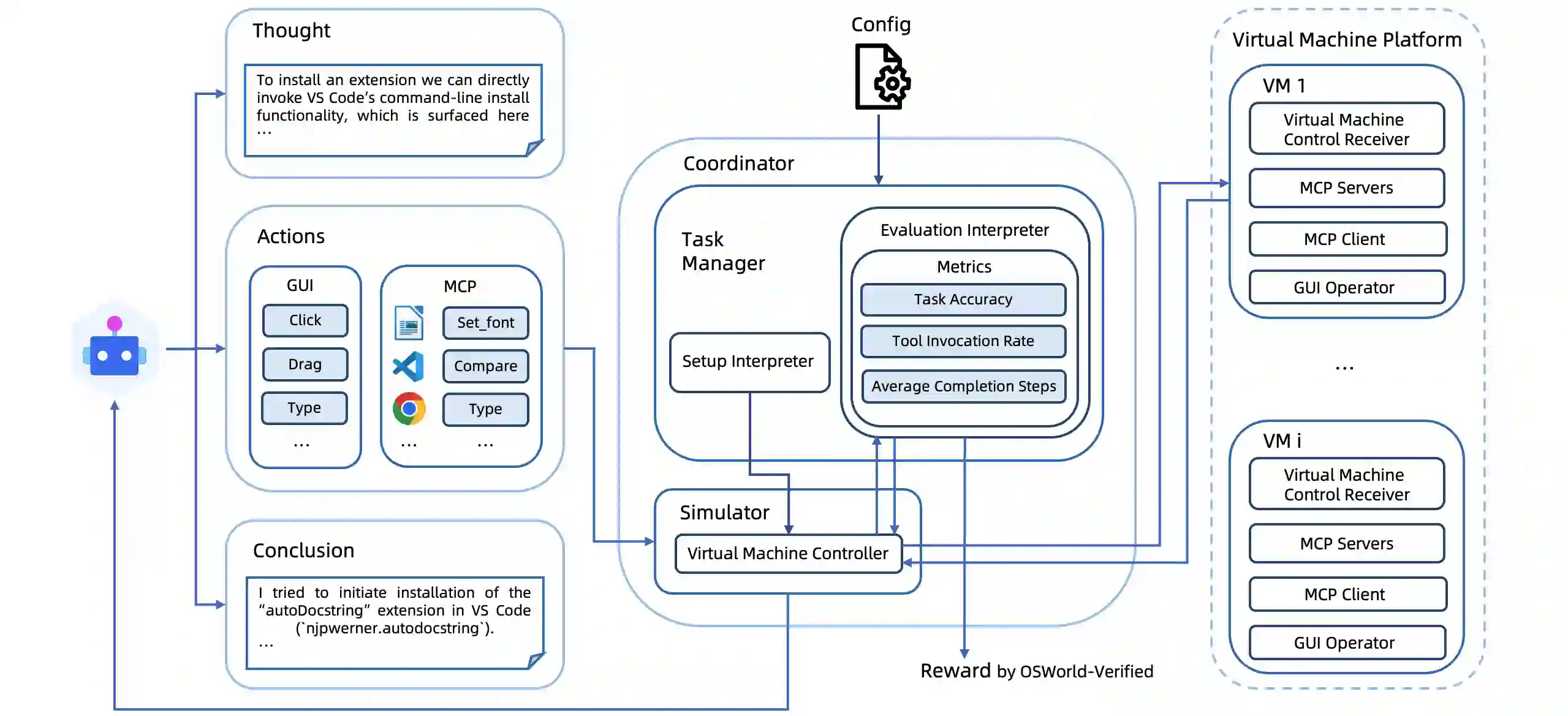

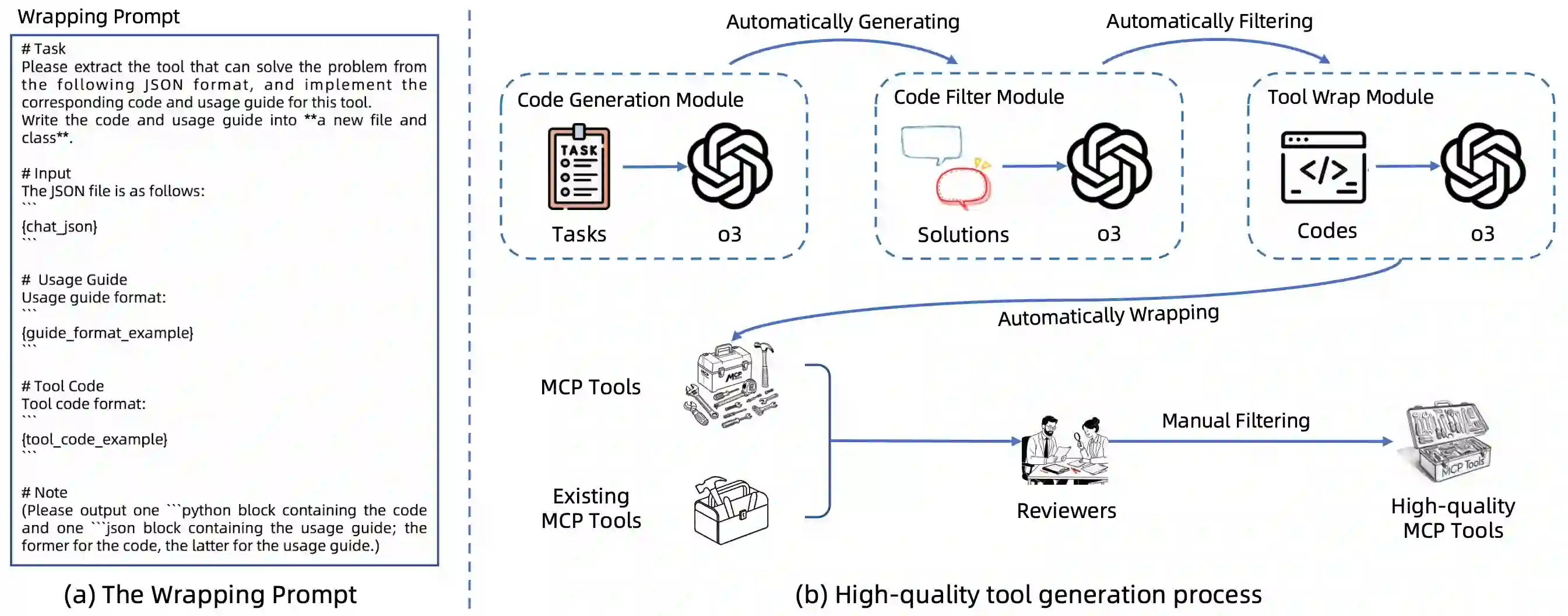

With advances in decision-making and reasoning capabilities, multimodal agents show strong potential in computer application scenarios. Prior evaluations have mainly assessed GUI interaction skills, while tool invocation abilities, such as those enabled by the Model Context Protocol (MCP), have been largely overlooked. Comparing agents that integrate tool invocation with agents evaluated only on GUI interaction is inherently unfair. We present OSWorld-MCP, the first comprehensive and fair benchmark for assessing the tool invocation, GUI operation, and decision-making abilities of computer-use agents in a real-world environment. We design a novel automated code-generation pipeline to create tools and combine them with a curated selection of existing tools. Rigorous manual validation yields 158 high-quality tools covering 7 common applications, each verified for correct functionality, practical applicability, and versatility. Extensive evaluations of state-of-the-art multimodal agents on OSWorld-MCP show that MCP tools generally improve task success rates (e.g., from 8.3% to 20.4% for OpenAI o3 at 15 steps, and from 40.1% to 43.3% for Claude 4 Sonnet at 50 steps), underscoring the importance of assessing tool invocation capabilities. However, even the strongest models invoke tools at relatively low rates (only 36.3%), which indicates room for improvement and highlights the benchmark's difficulty. By explicitly measuring MCP tool usage skills, OSWorld-MCP deepens our understanding of multimodal agents and sets a new standard for evaluating performance in complex, tool-assisted environments. Our code, environment, and data are publicly available at https://osworld-mcp.github.io.
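The abstract reports task success rates with and without MCP tools and a tool invocation rate. The following is a minimal Python sketch of how such figures might be computed from per-episode logs. It rests on several assumptions: the `Episode` record, the helper names, and the definition of tool invocation rate used here (the share of episodes with at least one MCP tool call) are hypothetical illustrations, not the benchmark's published definitions. Only the four success-rate figures are taken from the abstract.

```python
from dataclasses import dataclass


@dataclass
class Episode:
    """One agent run on one task (hypothetical record format, not OSWorld-MCP's)."""
    task_id: str
    succeeded: bool  # whether the task evaluator marked the run as solved
    tool_calls: int  # number of MCP tool calls the agent issued during the run


def success_rate(episodes: list[Episode]) -> float:
    """Percentage of episodes that solved their task."""
    return 100.0 * sum(e.succeeded for e in episodes) / len(episodes)


def tool_invocation_rate(episodes: list[Episode]) -> float:
    """Percentage of episodes with at least one MCP tool call (assumed definition)."""
    return 100.0 * sum(e.tool_calls > 0 for e in episodes) / len(episodes)


def gain(before: float, after: float) -> tuple[float, float]:
    """Absolute gain in percentage points and relative gain as a ratio."""
    return round(after - before, 1), round(after / before, 2)


# Hypothetical toy log: two of four runs succeed, one of four calls a tool.
toy = [
    Episode("t1", True, 2),
    Episode("t2", False, 0),
    Episode("t3", True, 0),
    Episode("t4", False, 0),
]
print(success_rate(toy), tool_invocation_rate(toy))  # 50.0 25.0

# Success rates reported in the abstract: (without MCP tools, with MCP tools).
reported = {
    "OpenAI o3 @ 15 steps": (8.3, 20.4),
    "Claude 4 Sonnet @ 50 steps": (40.1, 43.3),
}
for name, (before, after) in reported.items():
    pp, ratio = gain(before, after)
    print(f"{name}: +{pp} pp ({ratio}x)")
# OpenAI o3 @ 15 steps: +12.1 pp (2.46x)
# Claude 4 Sonnet @ 50 steps: +3.2 pp (1.08x)
```

Reporting both the absolute and the relative gain makes the contrast visible. The weaker-at-15-steps o3 run gains about 12 percentage points, a roughly 2.5x improvement. The Claude 4 Sonnet run at 50 steps starts from a higher baseline and gains about 3 points.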
