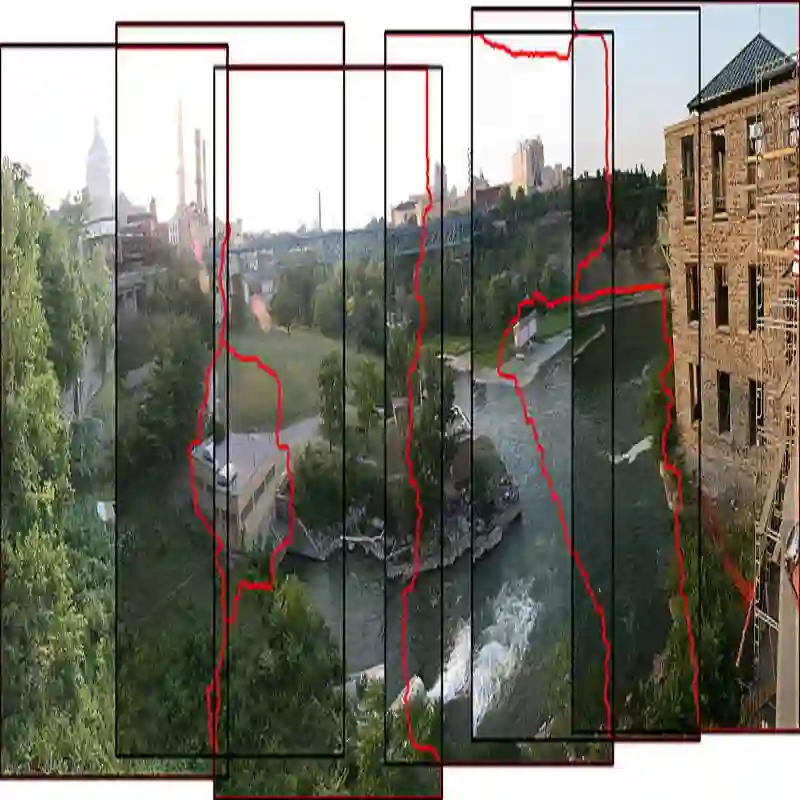

We introduce Forensim, an attention-based state-space framework for image forgery detection that jointly localizes manipulated (target) and source regions. Unlike traditional approaches that rely solely on artifact cues to detect spliced or forged areas, Forensim is designed to capture the duplication patterns that are crucial for understanding context. In scenarios such as protest imagery, detecting only the forged region (for example, a duplicated act of violence inserted into a peaceful crowd) can lead to misinterpretation, which highlights the need for joint source-target localization. Forensim outputs three-class masks (pristine, source, target) and supports detection of both splicing and copy-move forgeries within a unified architecture. We propose a visual state-space model that leverages normalized attention maps to identify internal similarities, paired with a region-based block attention module to distinguish manipulated regions. This design enables end-to-end training and precise localization. Forensim achieves state-of-the-art performance on standard benchmarks. We also release CMFD-Anything, a new dataset that addresses limitations of existing copy-move forgery datasets.
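To make two of the ideas above concrete, the following is a minimal sketch and not the Forensim architecture itself. It assumes each image patch is already described by a feature vector, uses a row-wise softmax over cosine similarities as a stand-in for a "normalized attention map", and encodes the three-class output as integer labels. The function names, the label ids, and the `temperature` parameter are all hypothetical choices made for illustration.

```python
import math

# Hypothetical label ids for the three-class mask (not taken from the paper).
PRISTINE, SOURCE, TARGET = 0, 1, 2


def normalized_self_attention(features, temperature=0.1):
    """Row-wise softmax over pairwise cosine similarities between patches.

    Self-matches are excluded so that each row highlights *other* patches
    that look similar, which is the cue a copy-move detector needs.
    """
    n = len(features)
    if n < 2:
        raise ValueError("need at least two patches to compare")
    # Guard against zero vectors by falling back to a norm of 1.0.
    norms = [math.sqrt(sum(x * x for x in f)) or 1.0 for f in features]
    attention = []
    for i in range(n):
        scores = []
        for j in range(n):
            if i == j:
                scores.append(float("-inf"))  # mask the trivial self-match
                continue
            dot = sum(a * b for a, b in zip(features[i], features[j]))
            scores.append(dot / (norms[i] * norms[j]) / temperature)
        peak = max(scores)
        exps = [math.exp(s - peak) for s in scores]  # numerically stable softmax
        total = sum(exps)
        attention.append([e / total for e in exps])
    return attention


def three_class_mask(source_mask, target_mask):
    """Merge binary source/target masks into one label map.

    Where both masks are set, the target label takes precedence
    (an assumption of this sketch).
    """
    return [
        [TARGET if t else SOURCE if s else PRISTINE for s, t in zip(s_row, t_row)]
        for s_row, t_row in zip(source_mask, target_mask)
    ]


if __name__ == "__main__":
    # Patch 2 is a near-duplicate of patch 0; patches 1 and 3 are unrelated.
    patches = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.01], [-1.0, 0.0]]
    attn = normalized_self_attention(patches)
    best = max(range(len(patches)), key=attn[0].__getitem__)
    print("patch 0 attends most strongly to patch", best)
    print(three_class_mask([[1, 0], [0, 0]], [[0, 0], [0, 1]]))
```

In this toy setting, a near-duplicate patch dominates the corresponding attention row, which illustrates why internal similarity can reveal both ends of a duplication. Deciding which end is the source and which is the target requires additional cues, which the abstract attributes to the region-based block attention module.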
