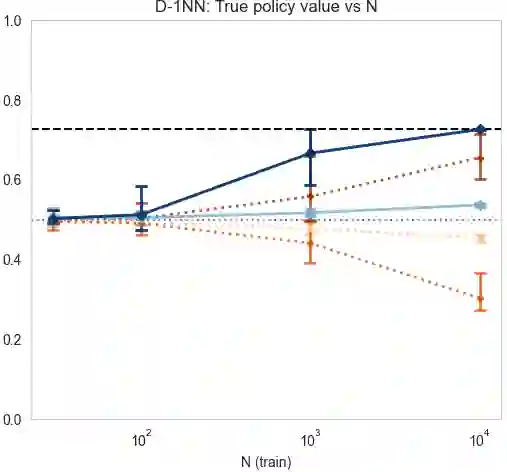

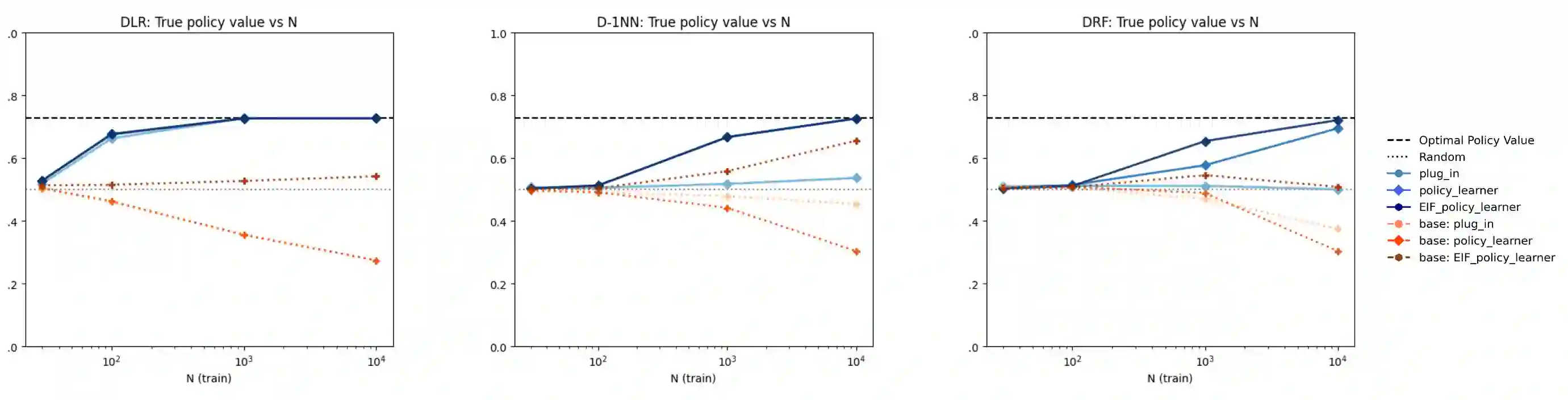

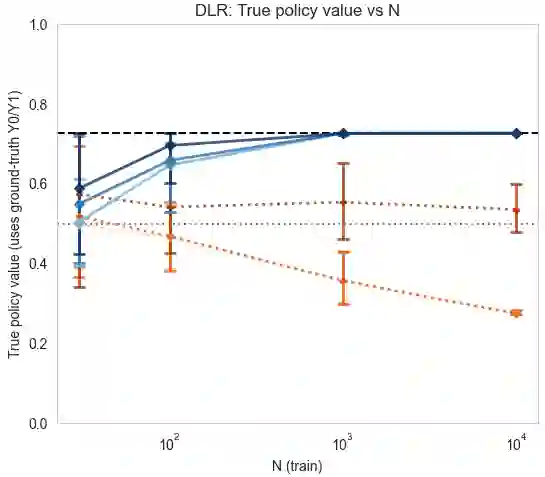

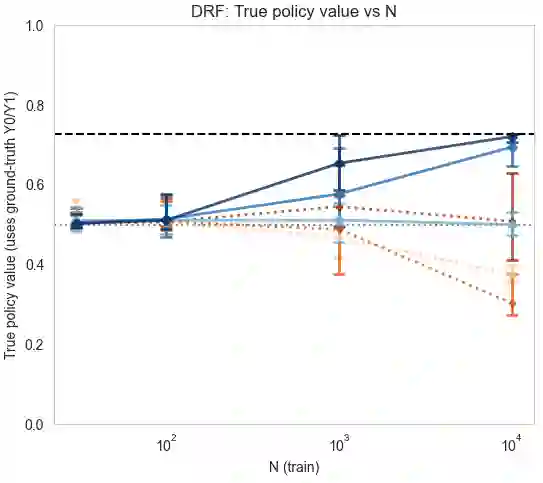

We introduce a new preference-based framework for conditional treatment effect estimation and policy learning, built on the Conditional Preference-based Treatment Effect (CPTE). CPTE requires only that outcomes be ranked under a preference rule, which allows flexible modeling of heterogeneous effects with multivariate, ordinal, or preference-driven outcomes. This unifies applications such as the conditional probability of necessity and sufficiency, the conditional Win Ratio, and Generalized Pairwise Comparisons. Despite the intrinsic non-identifiability of comparison-based estimands, CPTE provides interpretable targets and delivers new identifiability conditions for previously unidentifiable estimands. We present estimation strategies based on matching, quantile regression, and distributional regression, and further design efficient influence-function estimators that correct plug-in bias and maximize policy value. Synthetic and semi-synthetic experiments demonstrate clear performance gains and practical impact.
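To make the idea of ranking outcomes under a preference rule concrete, the following is a minimal sketch of a classical, unconditional pairwise comparison between a treated and a control group, reporting the win ratio and the net benefit. It is not the CPTE estimator itself: the lexicographic rule `prefer`, the two-component outcomes, and the toy data are all assumptions made for illustration, and the sketch ignores covariates entirely.

```python
from itertools import product


def prefer(a, b):
    """Hypothetical lexicographic preference rule on outcome tuples.

    Compares components in priority order (larger is better).
    Returns 1 if `a` is preferred, -1 if `b` is preferred, 0 for a tie.
    """
    for x, y in zip(a, b):
        if x > y:
            return 1
        if x < y:
            return -1
    return 0


def pairwise_comparison(treated, control, rule=prefer):
    """Compare every treated outcome with every control outcome."""
    if not treated or not control:
        raise ValueError("both groups must be non-empty")
    wins = losses = ties = 0
    for t, c in product(treated, control):
        r = rule(t, c)
        if r > 0:
            wins += 1
        elif r < 0:
            losses += 1
        else:
            ties += 1
    total = wins + losses + ties
    return {
        "wins": wins,
        "losses": losses,
        "ties": ties,
        # Net benefit: P(treated preferred) - P(control preferred).
        "net_benefit": (wins - losses) / total,
        # Win ratio: wins over losses; infinite if the treated group never loses.
        "win_ratio": wins / losses if losses else float("inf"),
    }


# Toy data (illustrative only): (primary outcome, secondary outcome).
treated = [(5, 2), (3, 1), (4, 4)]
control = [(3, 1), (2, 5), (4, 1)]
result = pairwise_comparison(treated, control)
print(result)
```

Because the rule only needs to say which of two outcomes is preferred, the same function works for ordinal or multivariate outcomes by swapping in a different `rule`. A conditional analogue would additionally have to account for covariates, which this sketch leaves out.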
