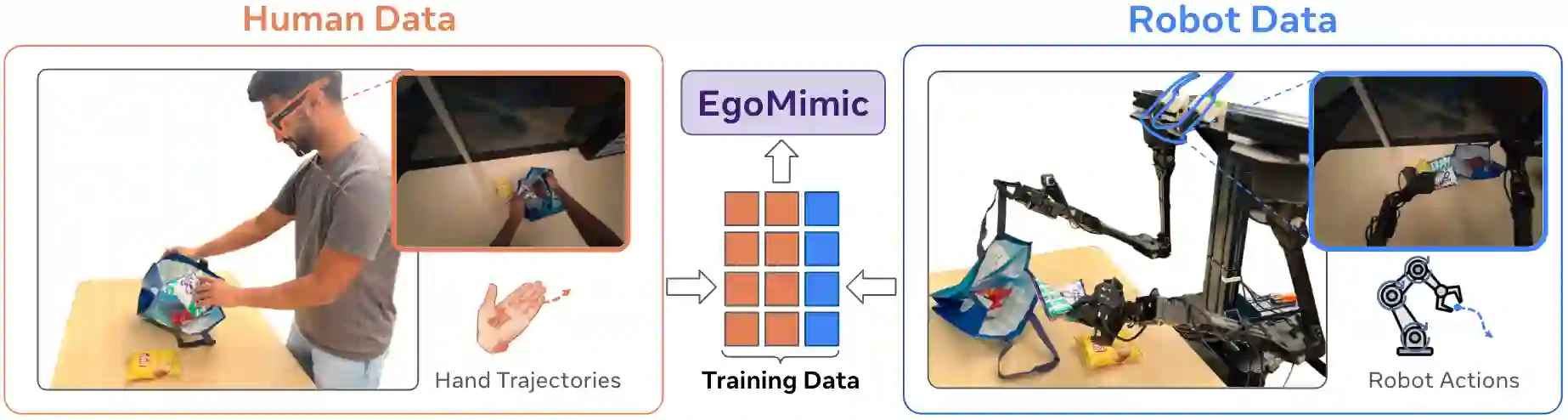

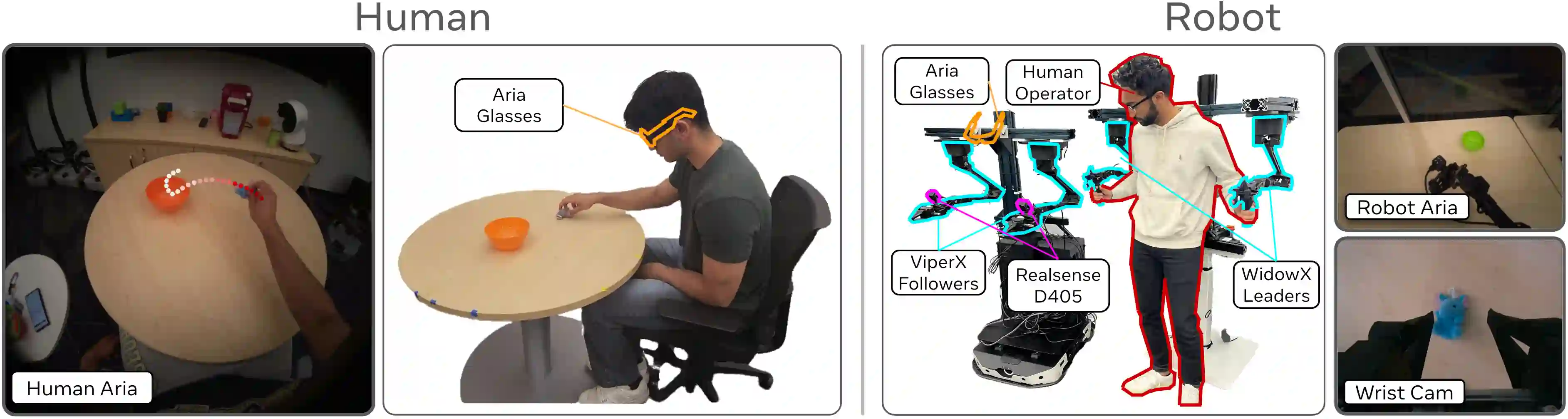

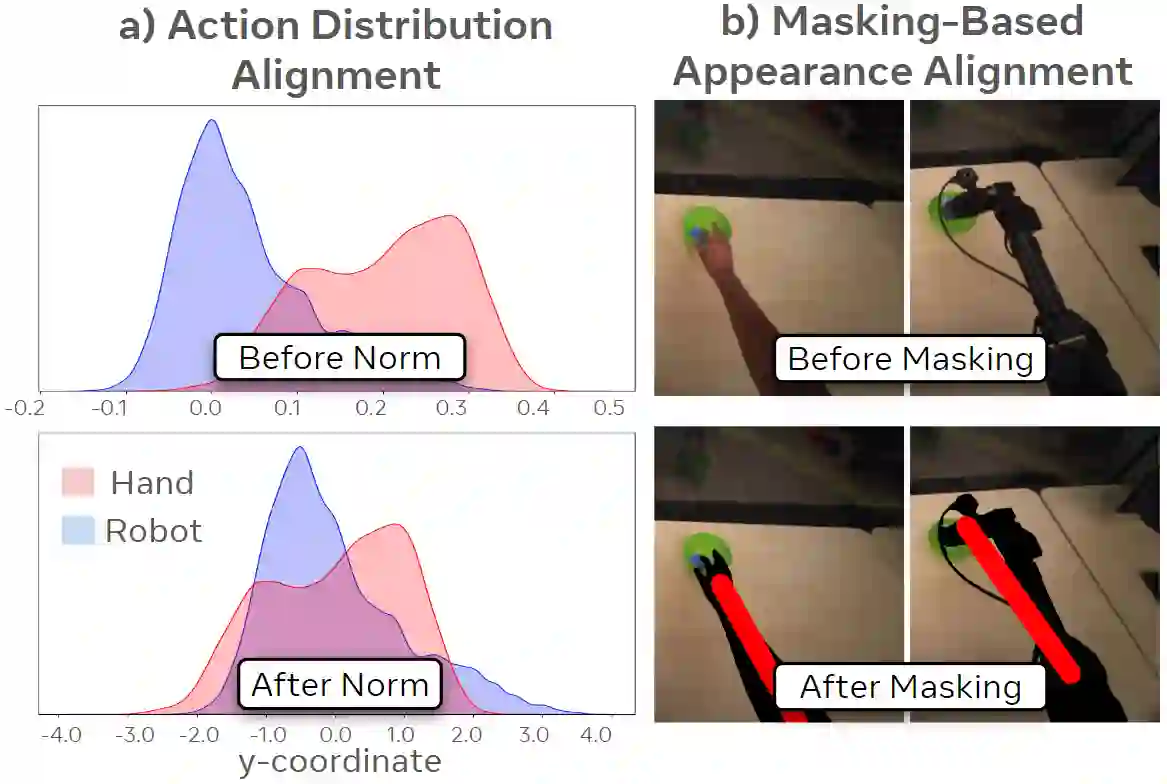

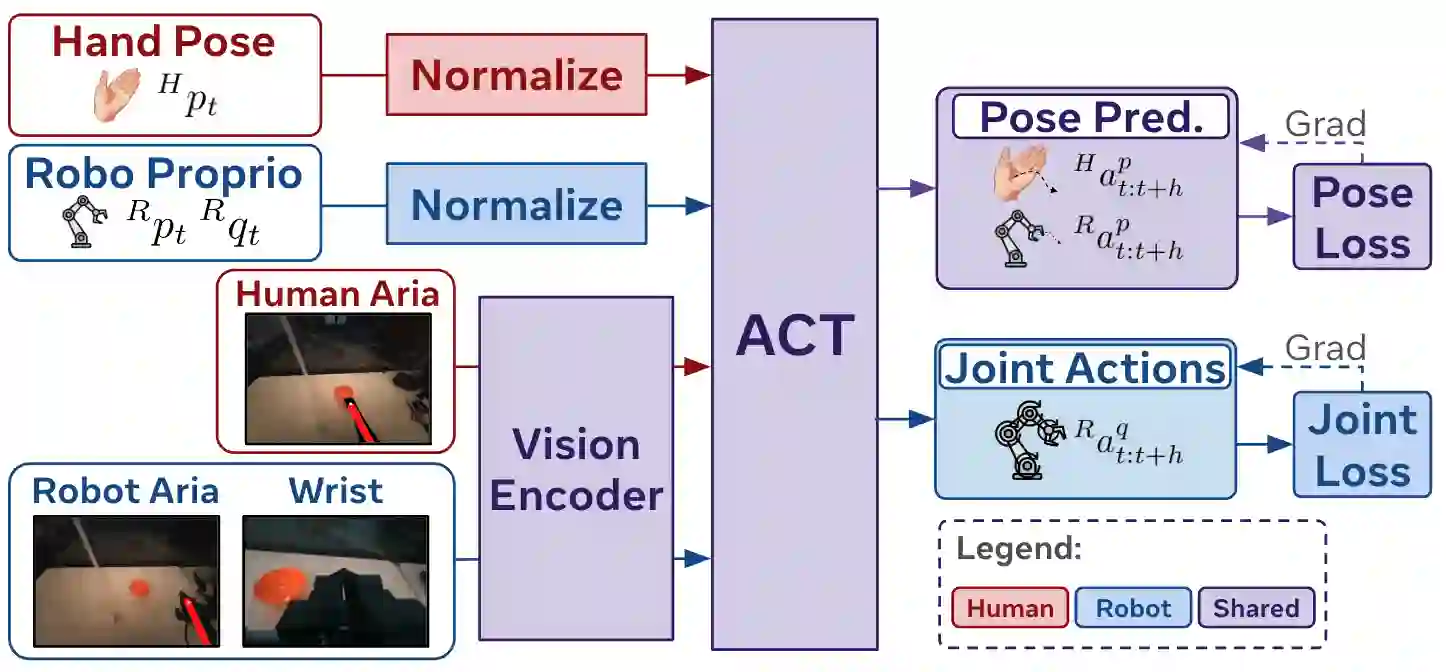

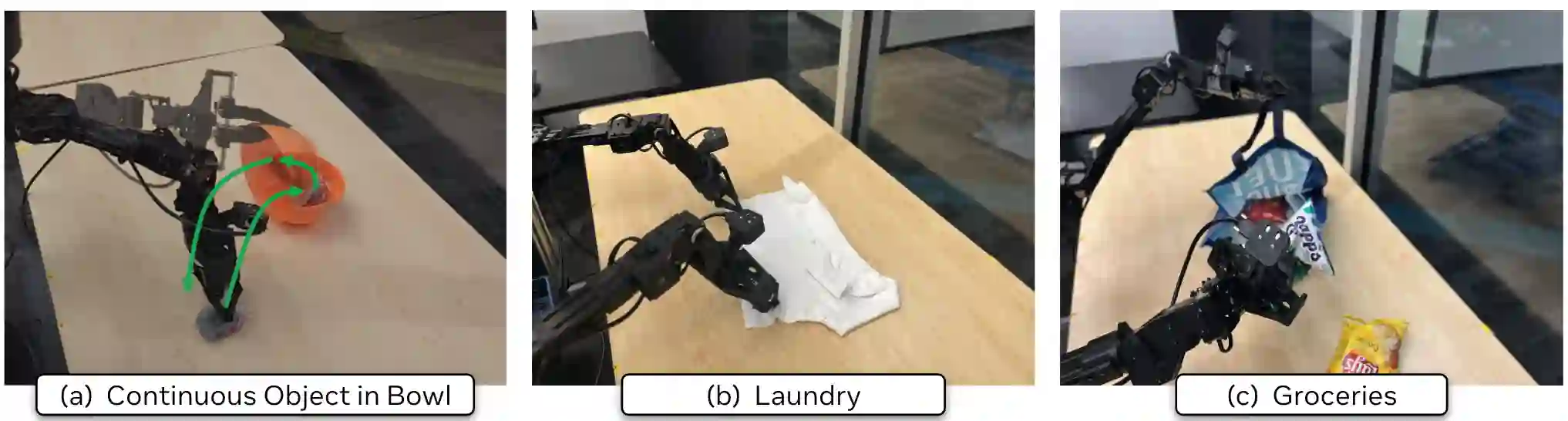

The scale and diversity of demonstration data required for imitation learning are a significant challenge. We present EgoMimic, a full-stack framework that scales manipulation via human embodiment data, specifically egocentric human videos paired with 3D hand tracking. EgoMimic achieves this through: (1) a system to capture human embodiment data using the ergonomic Project Aria glasses, (2) a low-cost bimanual manipulator that minimizes the kinematic gap to human data, (3) cross-domain data alignment techniques, and (4) an imitation learning architecture that co-trains on human and robot data. Unlike prior work that extracts only high-level intent from human videos, our approach treats human and robot data equally as embodied demonstration data and learns a unified policy from both data sources. EgoMimic achieves significant improvement over state-of-the-art imitation learning methods on a diverse set of long-horizon, single-arm and bimanual manipulation tasks, and enables generalization to entirely new scenes. Finally, we show a favorable scaling trend for EgoMimic: one additional hour of hand data is significantly more valuable than one additional hour of robot data. Videos and additional information can be found at https://egomimic.github.io/
