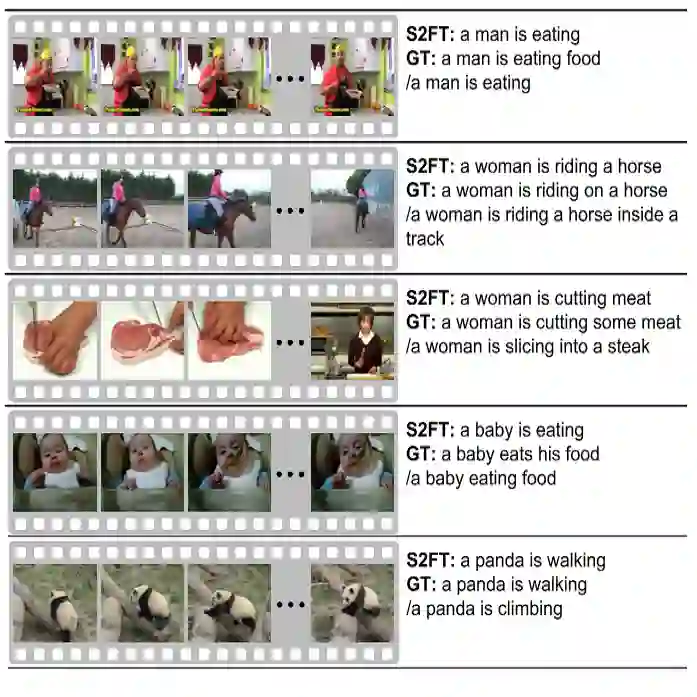

Video is an increasingly prominent and information-dense medium, yet it poses substantial challenges for language models. A typical video consists of a sequence of shorter segments, or shots, that collectively form a coherent narrative. Each shot is analogous to a word in a sentence, and within it multiple streams of information (such as visual and auditory data) must be processed simultaneously. Comprehending the entire video requires not only understanding the audio-visual information in each shot but also linking ideas across shots to build a larger, all-encompassing story. Despite significant progress in the field, current work often overlooks the more granular, shot-by-shot semantic information in videos. In this project, we propose Shotluck Holmes, a family of efficient large language vision models (LLVMs) for video summarization and captioning. By leveraging better pretraining and data collection strategies, we extend the abilities of existing small LLVMs from understanding a single image to understanding a sequence of frames. Specifically, we show that Shotluck Holmes outperforms prior state-of-the-art results on the Shot2Story video captioning and summarization tasks while using significantly smaller and more computationally efficient models.
