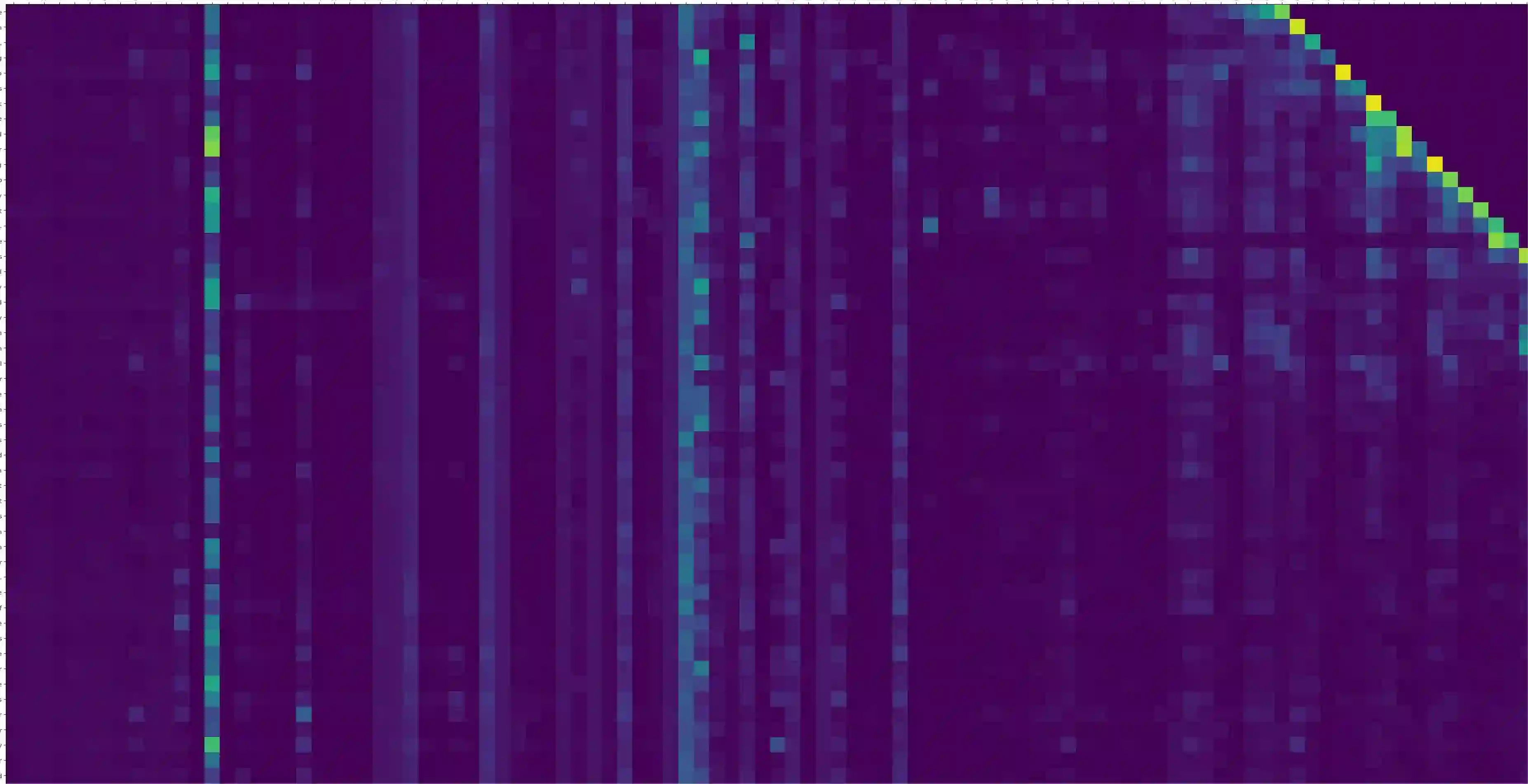

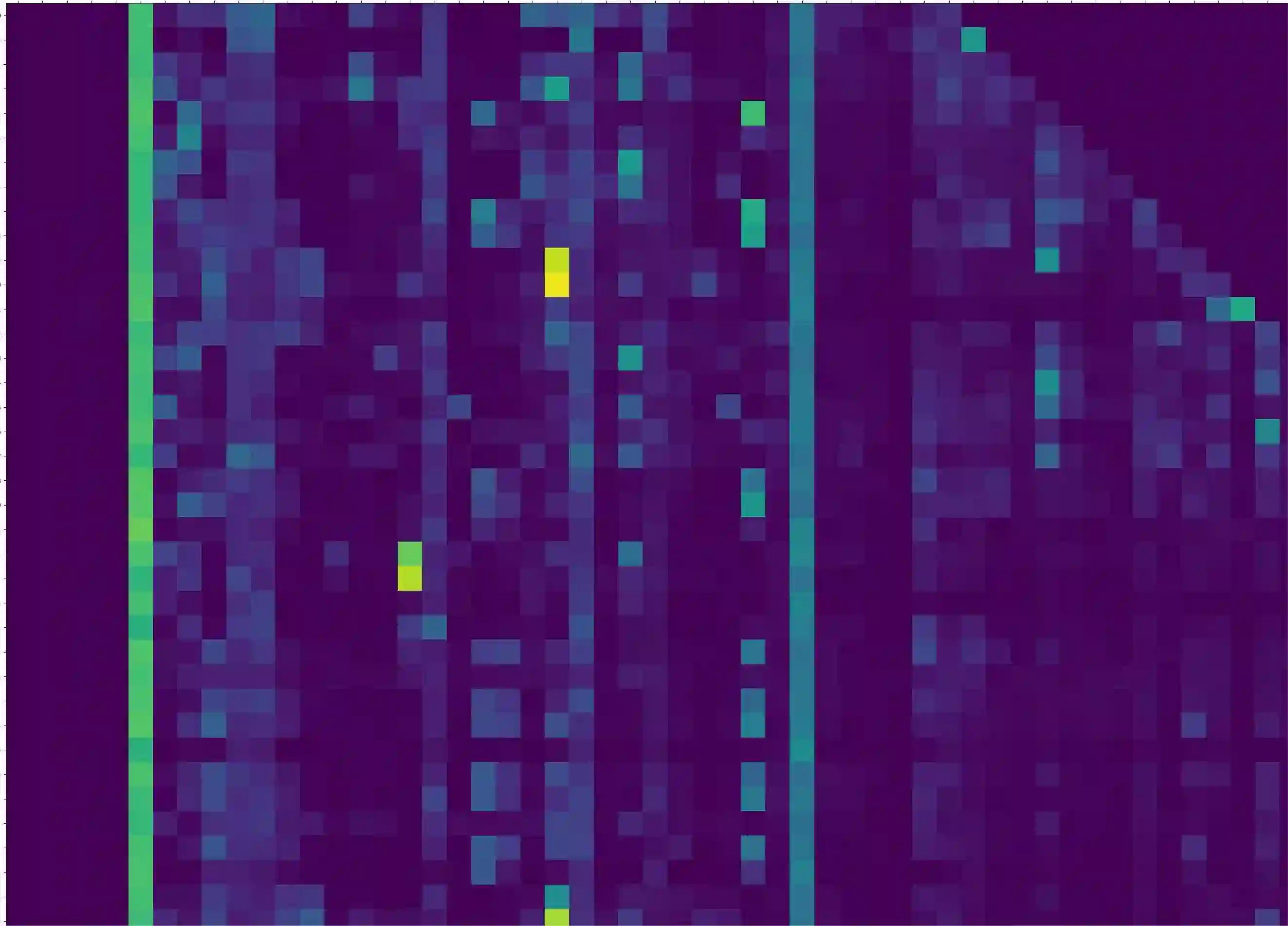

Large Vision-Language Models (LVLMs) have shown remarkable performance on many visual-language tasks. However, they still suffer from multimodal hallucination, i.e., generating objects or content that contradicts the image. Much existing work detects hallucination by directly judging whether an object exists in the image, overlooking the association between objects and semantics. To address this issue, we propose Hierarchical Feedback Learning with Vision-enhanced Penalty Decoding (HELPD). The framework incorporates hallucination feedback at both the object level and the sentence-semantic level. Remarkably, even with minimal training, this approach reduces hallucination by over 15%. In addition, HELPD penalizes the output logits according to the image attention window so that the model is not overly influenced by the text it has already generated. HELPD can be seamlessly integrated with any LVLM. Our experiments show that the framework achieves favorable results across multiple hallucination benchmarks: it effectively mitigates hallucination for different LVLMs while also improving their text generation quality.
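
The abstract does not give the exact form of the hierarchical feedback. As a rough illustration only, the sketch below scores one caption at two levels: an object-level rate (a CHAIR_i-style fraction of mentioned objects that are absent from the image's annotated objects) and a sentence-level mismatch against a reference caption. The function names, the equal weights, and the bag-of-words cosine (a crude stand-in for whatever semantic similarity model a real system would use) are all hypothetical assumptions, not HELPD's actual formulation.

```python
import math
from collections import Counter


def object_hallucination_rate(mentioned, present):
    """Object level (hypothetical): fraction of mentioned objects that are
    not among the image's annotated objects, CHAIR_i-style. Returns 0.0
    when the caption mentions no objects."""
    mentioned = set(mentioned)
    if not mentioned:
        return 0.0
    return len(mentioned - set(present)) / len(mentioned)


def bow_cosine(a, b):
    """Bag-of-words cosine similarity between two sentences.
    Assumption: a crude stand-in for a learned sentence encoder."""
    ca, cb = Counter(a.lower().split()), Counter(b.lower().split())
    dot = sum(ca[w] * cb[w] for w in ca)
    norm = math.sqrt(sum(v * v for v in ca.values())) * math.sqrt(
        sum(v * v for v in cb.values())
    )
    return dot / norm if norm else 0.0


def hierarchical_penalty(generated, reference, mentioned, present,
                         w_obj=0.5, w_sent=0.5):
    """Combine object-level and sentence-level signals into one scalar in
    [0, 1]; higher means more hallucination. Could serve as a (hypothetical)
    feedback signal during training."""
    obj = object_hallucination_rate(mentioned, present)
    sent = 1.0 - bow_cosine(generated, reference)
    return w_obj * obj + w_sent * sent


if __name__ == "__main__":
    # "frisbee" is mentioned but not annotated, so both levels are penalized.
    print(hierarchical_penalty("a dog and a frisbee on the grass",
                               "a dog on the grass",
                               ["dog", "frisbee", "grass"], ["dog", "grass"]))
```

Keeping the two levels as separate terms makes it easy to see which level drives the penalty: a caption can mention only real objects yet still drift semantically from the reference, and vice versa.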

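The abstract only states that HELPD penalizes output logits according to an image attention window. The sketch below illustrates the general idea under our own assumptions, not the paper's formula: each beam keeps the attention rows of its last few decoding steps (the "window"), we measure how much of that attention falls on image-token positions, and beams whose recent steps attend mostly to text receive a larger penalty. The function names, the linear penalty `alpha * (1 - share)`, and applying it to whole beams rather than individual tokens are hypothetical choices.

```python
def image_attention_share(attn_window, image_positions):
    """Mean fraction of attention mass that the last few decoding steps put
    on image tokens.

    attn_window: list of rows; each row is one step's attention distribution
                 over context positions (non-negative, summing to 1).
    image_positions: indices of the image tokens in the context.
    """
    if not attn_window:
        return 0.0
    per_step = [sum(row[i] for i in image_positions) for row in attn_window]
    return sum(per_step) / len(per_step)


def rescore_beams(beam_logprobs, attn_windows, image_positions, alpha=1.0):
    """Hypothetical vision-aware rescoring: subtract a penalty that grows as
    a beam's recent attention shifts away from the image toward text."""
    return [
        lp - alpha * (1.0 - image_attention_share(w, image_positions))
        for lp, w in zip(beam_logprobs, attn_windows)
    ]


if __name__ == "__main__":
    image = range(4)  # positions 0-3 are image tokens, 4-5 are text tokens
    # Beam A looks mostly at the image; beam B looks mostly at prior text.
    beam_a = [[0.2, 0.2, 0.2, 0.2, 0.1, 0.1]] * 2
    beam_b = [[0.05, 0.05, 0.05, 0.05, 0.4, 0.4]] * 2
    scores = rescore_beams([-1.2, -1.0], [beam_a, beam_b], image)
    best = max(range(len(scores)), key=scores.__getitem__)
    print(scores, "best beam:", best)
```

In this toy example beam B has the higher raw log-probability, but after the penalty beam A, which stays grounded in the image, ranks first. A real implementation would read the attention weights from the model at each step and tune `alpha`.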