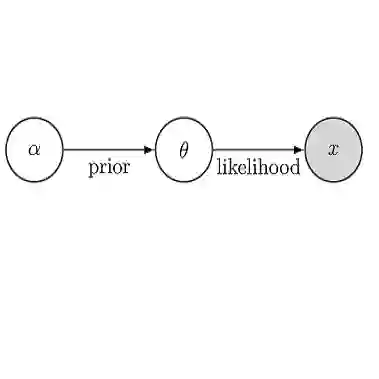

Recent studies seek to make Graph Neural Networks (GNNs) interpretable through a variety of unsupervised learning models. Because datasets are scarce, current methods are prone to learning bias. To address this, we embed a Large Language Model (LLM) as a source of knowledge in the GNN explanation network, where it acts as a Bayesian Inference (BI) module that mitigates learning bias. The efficacy of the BI module is established both theoretically and experimentally, with experiments on both synthetic and real-world datasets. Our contribution is twofold: 1. We offer a novel view of an LLM functioning as a Bayesian inference module that improves the performance of existing algorithms; 2. We are the first to discuss the learning bias issue in the GNN explanation problem.
