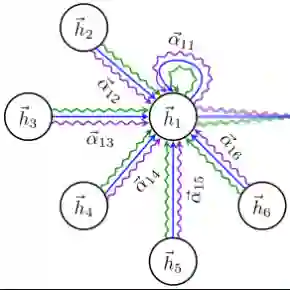

Many datasets have been designed to advance fake audio detection, such as those of the ASVspoof and ADD challenges. However, these datasets do not consider the situation in which the emotion of the audio has been changed from one state to another while other information (e.g., speaker identity and content) remains the same. Changing the emotion of an utterance can change its meaning, and speech with tampered semantics may pose threats to people's lives. This paper therefore reports our progress in developing EmoFake, a dataset for emotion fake audio detection in which the emotional state of the original audio has been changed. The fake audio in EmoFake is generated by open-source emotional voice conversion models. Furthermore, we propose a method named Graph Attention networks using Deep Emotion embedding (GADE) for detecting emotion fake audio. We conduct benchmark experiments on this dataset. The results show that EmoFake poses a challenge to fake audio detection models trained on the LA dataset of ASVspoof 2019, and that the proposed GADE performs well on emotion fake audio.
