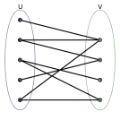

Tool-Integrated Reasoning (TIR) empowers large language models (LLMs) to tackle complex tasks by interleaving reasoning steps with external tool interactions. However, existing reinforcement learning methods typically rely on outcome- or trajectory-level rewards, which assign the same advantage to every step within a trajectory. This coarse-grained credit assignment fails to distinguish effective tool calls from redundant or erroneous ones, particularly in long-horizon multi-turn scenarios. To address this, we propose MatchTIR, a framework that introduces fine-grained supervision via bipartite matching-based turn-level reward assignment and dual-level advantage estimation. Specifically, we formulate credit assignment as a bipartite matching problem between predicted and ground-truth traces, using two assignment strategies to derive dense turn-level rewards. Furthermore, to balance local step precision with global task success, we introduce a dual-level advantage estimation scheme that integrates turn-level and trajectory-level signals, assigning distinct advantage values to individual interaction turns. Extensive experiments on three benchmarks demonstrate the superiority of MatchTIR. Notably, our 4B model surpasses the majority of 8B competitors, particularly in long-horizon and multi-turn tasks. Our code is available at https://github.com/quchangle1/MatchTIR.
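
To make the two ideas in the abstract concrete, the following is a minimal illustrative sketch, not the MatchTIR implementation. It assumes a hypothetical similarity score between tool calls (`call_similarity`), a one-to-one maximum-weight matching found by brute force (a real system would more likely use a Hungarian-style solver such as `scipy.optimize.linear_sum_assignment`), and a simple additive combination of group-normalized trajectory-level and turn-level advantages. The abstract does not specify the scoring function, the two assignment strategies, or how the two signal levels are combined, so all of these are assumptions made for illustration.

```python
from itertools import permutations
from statistics import mean, pstdev


def call_similarity(pred, gold):
    """Hypothetical score in [0, 1] between a predicted and a ground-truth call.

    Assumption: 0 if the tool names differ, otherwise 0.5 plus 0.5 times the
    fraction of ground-truth arguments reproduced exactly.
    """
    if pred["name"] != gold["name"]:
        return 0.0
    gold_args = gold["args"]
    if not gold_args:
        return 1.0
    hits = sum(1 for k, v in gold_args.items() if pred["args"].get(k) == v)
    return 0.5 + 0.5 * hits / len(gold_args)


def match_rewards(pred_calls, gold_calls):
    """Turn-level rewards from a one-to-one maximum-weight bipartite matching.

    Each predicted call is matched to at most one ground-truth call; unmatched
    predictions (e.g. redundant calls) receive reward 0. Brute force is used
    here for clarity and is only practical for short traces.
    """
    n_pred, n_gold = len(pred_calls), len(gold_calls)
    sim = [[call_similarity(p, g) for g in gold_calls] for p in pred_calls]
    m = max(n_pred, n_gold)  # columns >= n_gold act as "unmatched" dummies
    best_total, best_rewards = -1.0, [0.0] * n_pred
    for cols in permutations(range(m), n_pred):
        rewards = [sim[i][c] if c < n_gold else 0.0 for i, c in enumerate(cols)]
        total = sum(rewards)
        if total > best_total:
            best_total, best_rewards = total, rewards
    return best_rewards


def dual_level_advantages(turn_rewards, outcomes, eps=1e-6):
    """Assumed combination: trajectory-level plus turn-level normalized advantage.

    turn_rewards: one list of per-turn rewards per rollout in the group.
    outcomes: one scalar outcome reward per rollout.
    """
    o_mu, o_sd = mean(outcomes), pstdev(outcomes)
    all_turns = [r for traj in turn_rewards for r in traj]
    t_mu, t_sd = mean(all_turns), pstdev(all_turns)
    advantages = []
    for traj, outcome in zip(turn_rewards, outcomes):
        traj_adv = (outcome - o_mu) / (o_sd + eps)
        advantages.append([traj_adv + (r - t_mu) / (t_sd + eps) for r in traj])
    return advantages


gold = [
    {"name": "search", "args": {"q": "a"}},
    {"name": "calc", "args": {"expr": "1+1"}},
]
pred = [
    {"name": "search", "args": {"q": "a"}},     # correct call
    {"name": "search", "args": {"q": "a"}},     # redundant repeat
    {"name": "calc", "args": {"expr": "2+2"}},  # right tool, wrong argument
]
rewards = match_rewards(pred, gold)
print(rewards)  # [1.0, 0.0, 0.5]
print(dual_level_advantages([rewards, [0.0]], outcomes=[1.0, 0.0]))
```

In this toy trace the redundant second `search` call receives zero reward because its ground-truth counterpart is already matched, whereas an outcome-only reward would credit all three turns equally. The dual-level step then gives each turn of the successful rollout a different advantage while still ranking that rollout above the failed one.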
