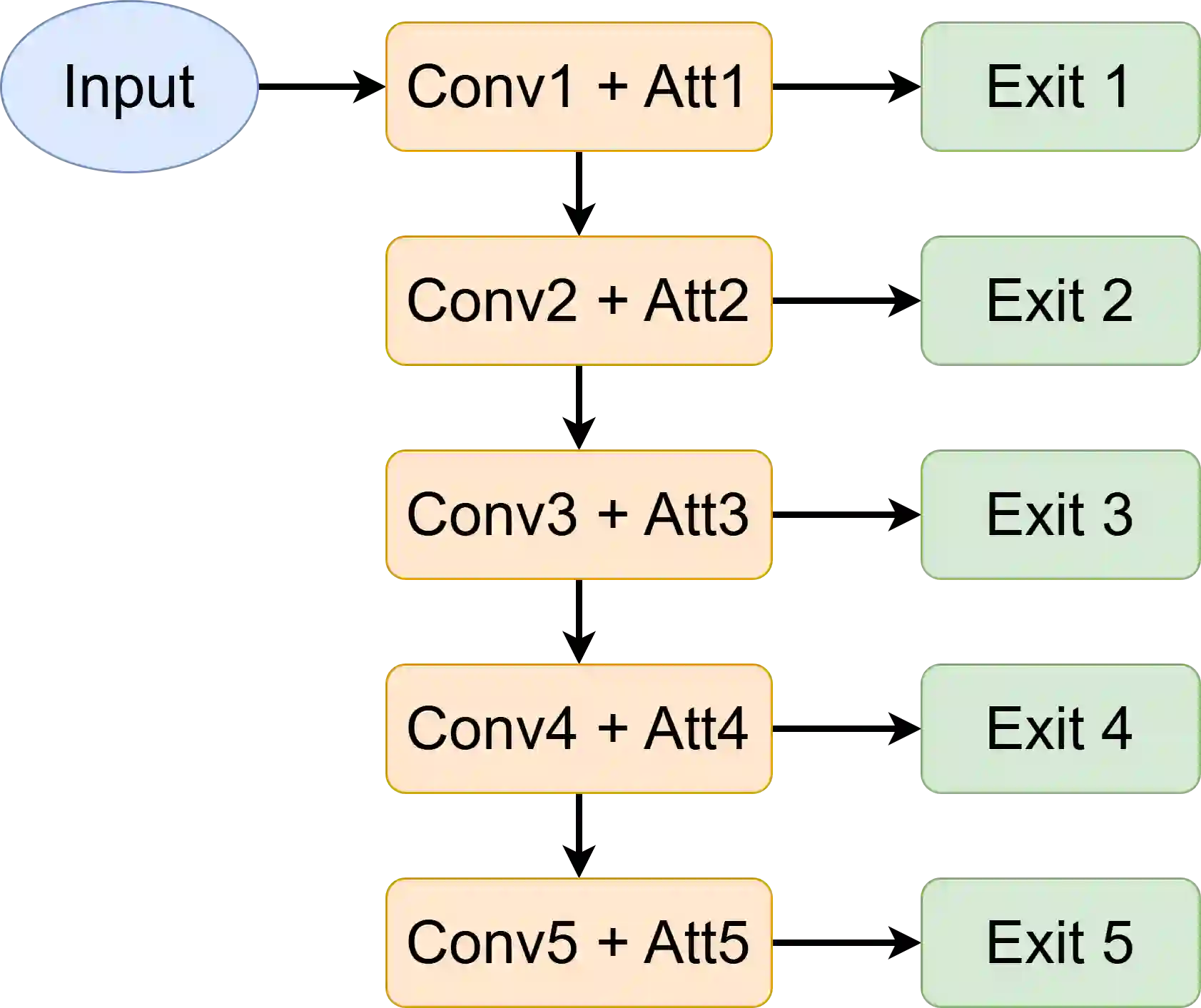

Early-exit neural networks enable adaptive inference by allowing predictions at intermediate layers, reducing computational cost. However, early exits often lack interpretability and may focus on different features than deeper layers, limiting trust and explainability. This paper presents Explanation-Guided Training (EGT), a multi-objective framework that improves interpretability and consistency in early-exit networks through attention-based regularization. EGT introduces an attention consistency loss that aligns early-exit attention maps with the final exit. The framework jointly optimizes classification accuracy and attention consistency through a weighted combination of losses. Experiments on a real-world image classification dataset demonstrate that EGT achieves up to 98.97% overall accuracy (matching baseline performance) with a 1.97x inference speedup through early exits, while improving attention consistency by up to 18.5% compared to baseline models. The proposed method provides more interpretable and consistent explanations across all exit points, making early-exit networks more suitable for explainable AI applications in resource-constrained environments.
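The weighted combination of classification and attention consistency losses described above can be sketched as follows. This is a minimal illustration only: the abstract does not give the exact form of the consistency term, so the cosine-distance formulation, the weight `lam`, and all function names (`attention_consistency`, `egt_style_loss`) are assumptions rather than the authors' implementation. Attention maps are represented as flattened lists of floats, with the last exit acting as the reference.

```python
import math

def softmax(logits):
    # Numerically stable softmax over a list of floats.
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(logits, label):
    # Standard cross-entropy for a single example.
    return -math.log(softmax(logits)[label])

def attention_consistency(early_map, final_map, eps=1e-12):
    # ASSUMPTION: consistency is measured as 1 - cosine similarity between
    # the flattened early-exit and final-exit attention maps.
    dot = sum(a * b for a, b in zip(early_map, final_map))
    norm_early = math.sqrt(sum(a * a for a in early_map))
    norm_final = math.sqrt(sum(b * b for b in final_map))
    return 1.0 - dot / (norm_early * norm_final + eps)

def egt_style_loss(exit_logits, exit_maps, label, lam=0.5):
    # exit_logits: one logit list per exit, final exit last.
    # exit_maps:   one flattened attention map per exit, final exit last.
    # lam:         hypothetical weight on the consistency term.
    ce = sum(cross_entropy(logits, label) for logits in exit_logits)
    final_map = exit_maps[-1]
    cons = sum(attention_consistency(m, final_map) for m in exit_maps[:-1])
    return ce + lam * cons

# Two exits, two classes, true class 0.
loss = egt_style_loss(
    exit_logits=[[2.0, 0.5], [3.0, 0.1]],
    exit_maps=[[0.9, 0.1, 0.0, 0.0], [0.8, 0.2, 0.0, 0.0]],
    label=0,
)
print(f"combined loss: {loss:.4f}")
```

In an autograd framework, the final-exit map would plausibly be treated as a fixed target so that gradients from the consistency term only update the early exits; whether EGT does this is not stated in the abstract.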
