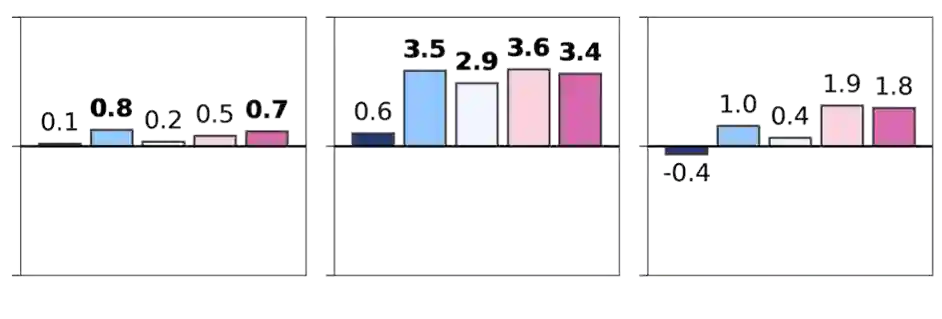

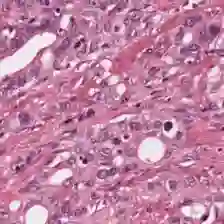

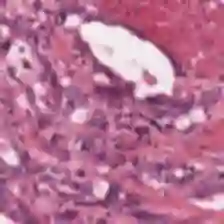

Foundation models are rapidly being developed for computational pathology applications. However, it remains an open question which factors matter most for downstream performance, with data scale and diversity, model size, and training algorithm all playing a role. In this work, we propose algorithmic modifications tailored for pathology and present the results of scaling both data and model size, surpassing previous studies in both dimensions. We introduce three new models: Virchow2, a 632 million parameter vision transformer; Virchow2G, a 1.9 billion parameter vision transformer; and Virchow2G Mini, a 22 million parameter distillation of Virchow2G. Each is trained on 3.1 million histopathology whole slide images spanning diverse tissues, originating institutions, and stains. We achieve state-of-the-art performance on 12 tile-level tasks compared with the top-performing competing models. Our results suggest that data diversity and domain-specific methods can outperform models that scale only in the number of parameters, but that, on average, performance benefits from the combination of domain-specific methods, data scale, and model scale.
