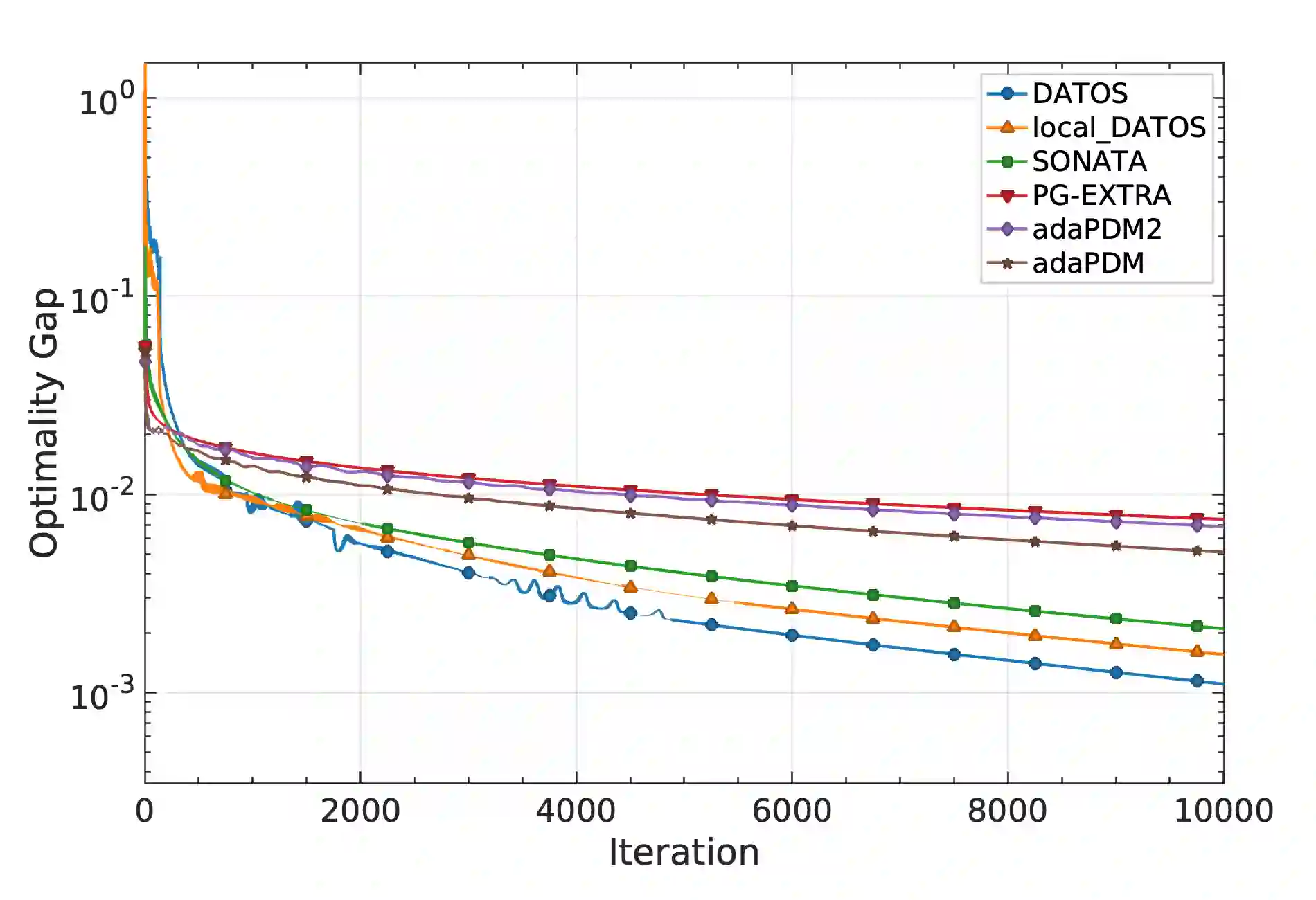

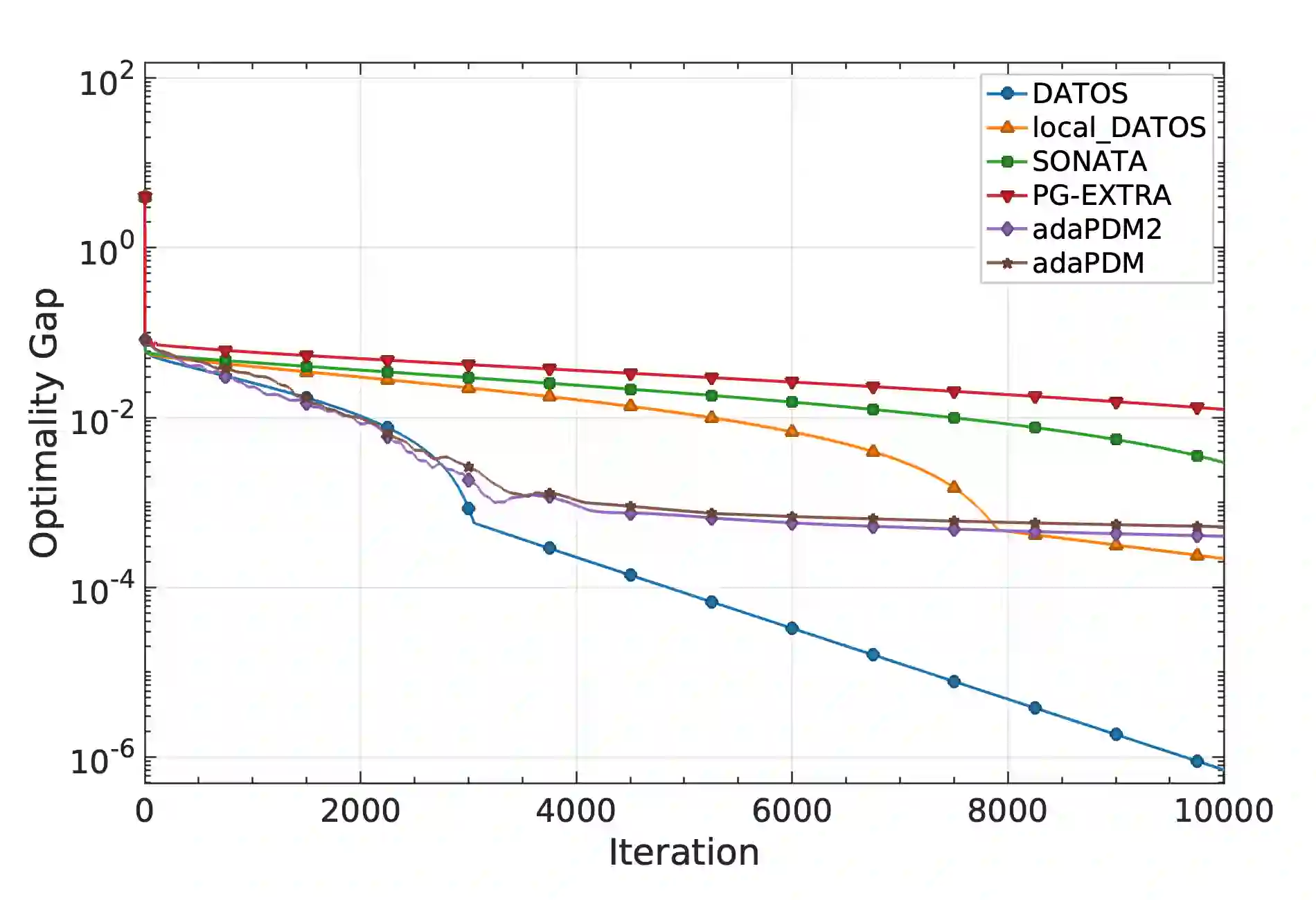

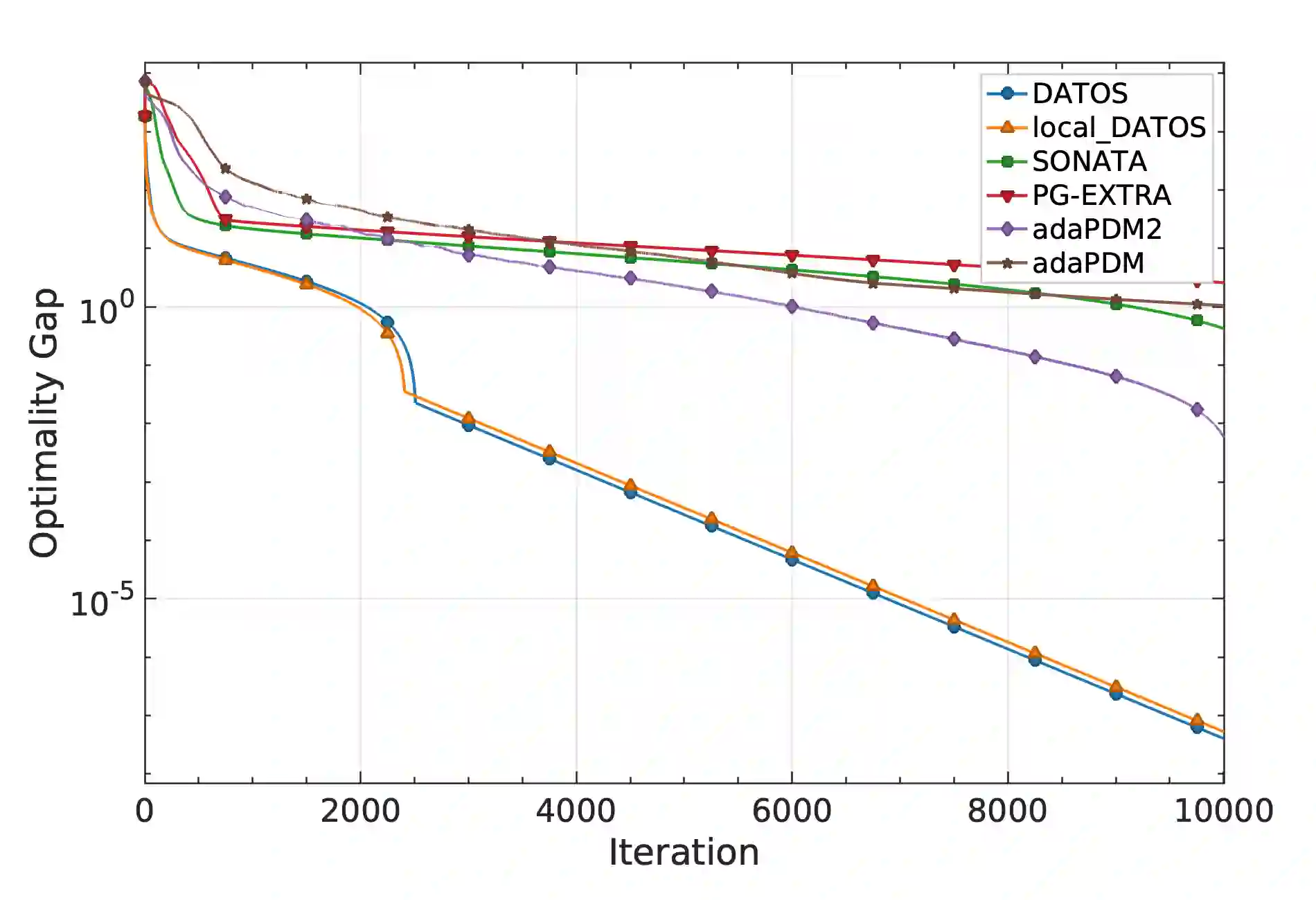

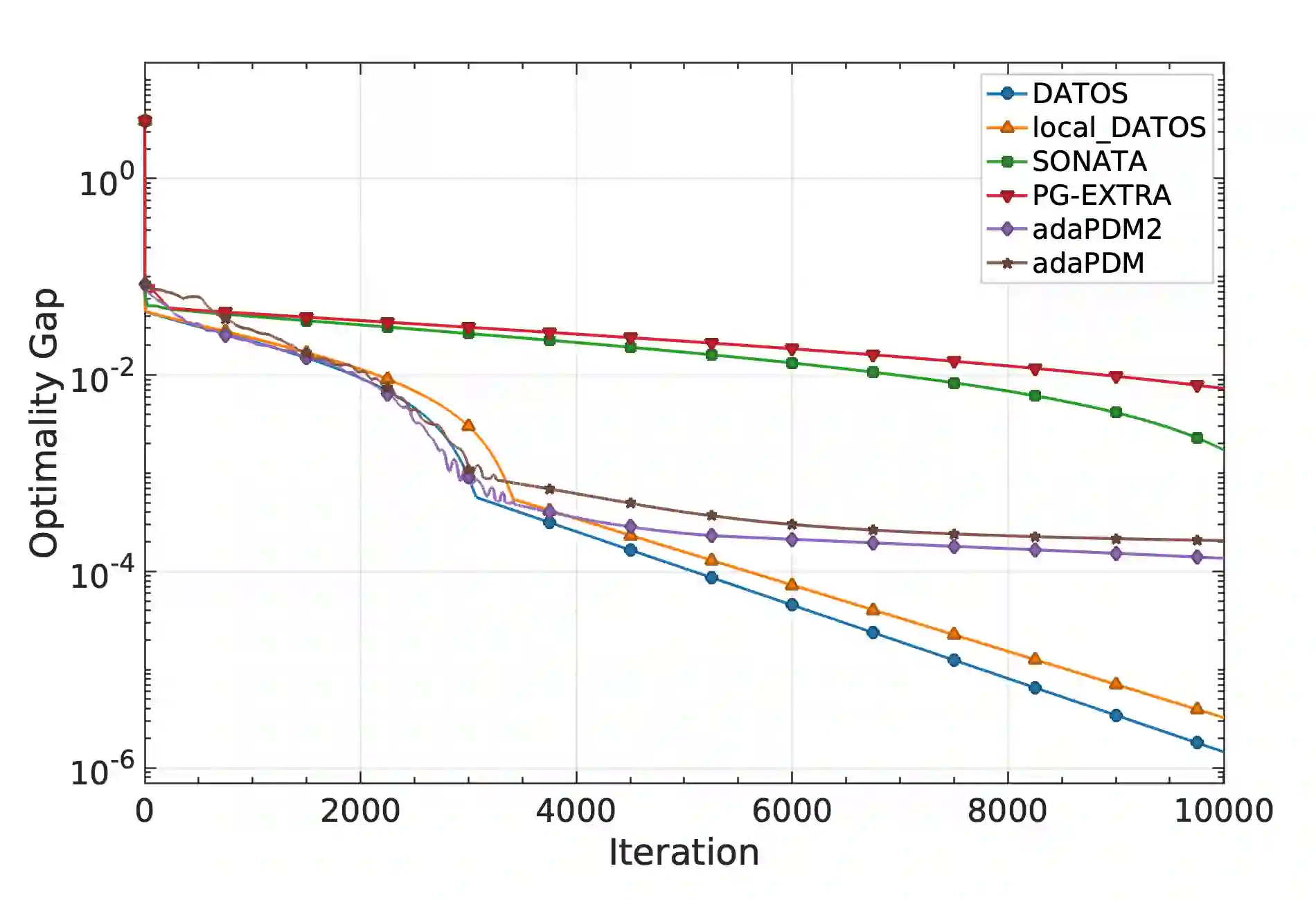

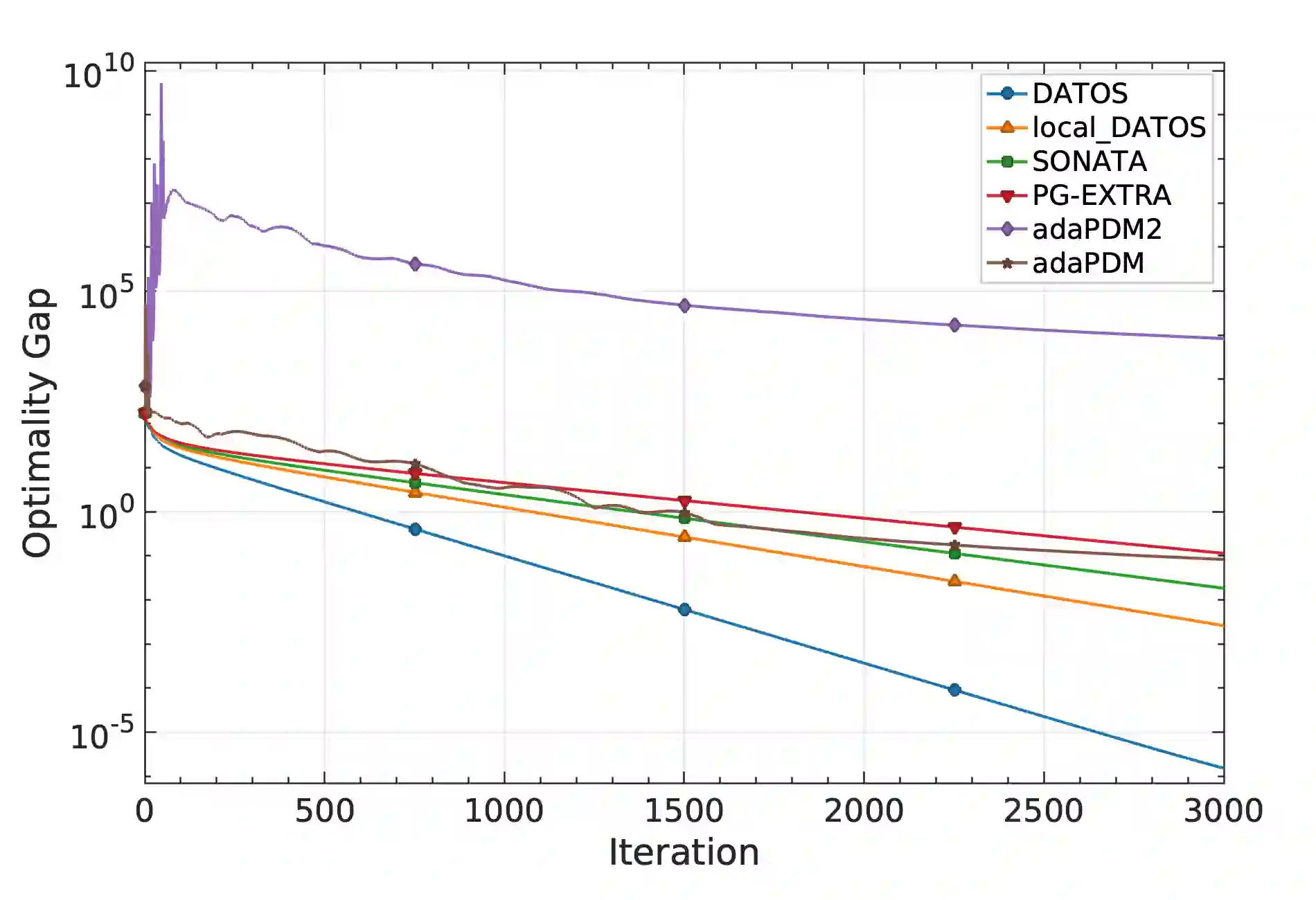

The paper studies decentralized optimization over networks, where agents minimize the sum of *locally* smooth (strongly) convex losses plus a nonsmooth convex extended-value term. We propose decentralized methods wherein agents *adaptively* adjust their stepsizes via local backtracking procedures coupled with lightweight min-consensus protocols. Our design stems from a three-operator splitting factorization applied to an equivalent reformulation of the problem. The reformulation is endowed with a new BCV (Bertsekas-O'Connor-Vandenberghe) preconditioning metric, which enables efficient decentralized implementation and local stepsize adjustments. We establish robust convergence guarantees. Under mere convexity, the proposed methods converge at a sublinear rate. Under strong convexity of the sum-function, and assuming the nonsmooth component is partly smooth, we further prove linear convergence. Numerical experiments corroborate the theory and highlight the effectiveness of the proposed adaptive stepsize strategy.
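To make the idea of local backtracking coupled with min-consensus concrete, the following is a minimal sketch and not the paper's algorithm. It assumes a generic sufficient-decrease test for the local line search, hypothetical quadratic local losses, and a fixed undirected path graph; the names `local_backtracking` and `min_consensus` are illustrative. Each agent first picks a trial stepsize from its own loss, and then the agents repeatedly replace their value with the minimum over their neighborhood. On a connected graph, this reaches the network-wide minimum after a number of rounds equal to the graph diameter.

```python
import numpy as np


def local_backtracking(f, grad, x, step0=1.0, shrink=0.5, max_iter=50):
    """Shrink a trial stepsize until a sufficient-decrease test holds.

    Assumption: a standard descent-lemma style test; the paper's local
    backtracking condition may differ.
    """
    g = grad(x)
    step = step0
    for _ in range(max_iter):
        if f(x - step * g) <= f(x) - 0.5 * step * np.dot(g, g):
            break
        step *= shrink
    return step


def min_consensus(values, neighbors, rounds):
    """Each agent keeps the minimum of its own and its neighbors' values."""
    vals = list(values)
    for _ in range(rounds):
        vals = [
            min([vals[i]] + [vals[j] for j in neighbors[i]])
            for i in range(len(vals))
        ]
    return vals


# Hypothetical local losses f_i(x) = 0.5 * c_i * ||x||^2 with different curvatures.
curvatures = [1.0, 4.0, 2.0, 8.0]
x0 = np.ones(2)

local_steps = [
    local_backtracking(lambda x, c=c: 0.5 * c * np.dot(x, x),
                       lambda x, c=c: c * x,
                       x0)
    for c in curvatures
]

# Path graph 0 - 1 - 2 - 3 (diameter 3), so 3 rounds suffice for agreement.
path_neighbors = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2]}
agreed_steps = min_consensus(local_steps, path_neighbors, rounds=3)

print(local_steps)
print(agreed_steps)
```

In this toy setup the agent with the largest curvature ends up with the smallest local stepsize, and the min-consensus rounds propagate that value to every agent using only neighbor-to-neighbor exchanges.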
