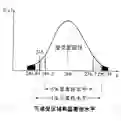

Large Language Models (LLMs) have rapidly gained enormous popularity in recent years. However, the training of LLMs has raised significant privacy and legal concerns, particularly regarding the distillation and inclusion of copyrighted materials in their training data without proper attribution or licensing, an issue that falls under the broader concern of data misappropriation. In this article, we focus on a specific problem in data misappropriation detection, namely, determining whether a given LLM has incorporated data generated by another LLM. We propose embedding watermarks into the copyrighted training data and formulating the detection of data misappropriation as a hypothesis testing problem. We develop a general statistical testing framework, construct test statistics, determine optimal rejection thresholds, and explicitly control type I and type II errors. Furthermore, we establish the asymptotic optimality properties of the proposed tests and demonstrate their empirical effectiveness through intensive numerical experiments.

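To make the hypothesis-testing framing concrete, here is a minimal sketch of what a watermark detection test can look like. It is not the test proposed in the paper: the abstract does not specify the watermarking scheme or the test statistics, so the sketch assumes a simple "green-token" style watermark, in which a fraction `gamma` of the vocabulary is marked and unwatermarked text hits marked tokens at rate `gamma`. All function names and parameters (`gamma`, `green_rate_h1`, and so on) are hypothetical and chosen only for illustration.

```python
import math
from statistics import NormalDist

# Illustrative assumption (not from the source): under H0 (no misappropriation),
# each of n_tokens tokens is "green" independently with probability gamma.
# Under H1, the green rate is higher because watermarked data was used.


def rejection_threshold(alpha: float) -> float:
    """One-sided critical value z_alpha controlling the type I error at level alpha."""
    return NormalDist().inv_cdf(1.0 - alpha)


def z_statistic(green_count: int, n_tokens: int, gamma: float) -> float:
    """Standardized count of green tokens under the null hypothesis."""
    expected = gamma * n_tokens
    std = math.sqrt(n_tokens * gamma * (1.0 - gamma))
    return (green_count - expected) / std


def detect(green_count: int, n_tokens: int, gamma: float = 0.5, alpha: float = 0.01) -> bool:
    """Reject H0 (i.e. flag possible misappropriation) when z exceeds the threshold."""
    return z_statistic(green_count, n_tokens, gamma) > rejection_threshold(alpha)


def approximate_power(n_tokens: int, gamma: float, green_rate_h1: float, alpha: float) -> float:
    """Normal approximation to the power, 1 - (type II error), at a given H1 green rate."""
    z_alpha = rejection_threshold(alpha)
    sd0 = math.sqrt(n_tokens * gamma * (1.0 - gamma))
    sd1 = math.sqrt(n_tokens * green_rate_h1 * (1.0 - green_rate_h1))
    mean_shift = (green_rate_h1 - gamma) * n_tokens
    # Reject when the green count exceeds gamma * n + z_alpha * sd0.
    return 1.0 - NormalDist().cdf((z_alpha * sd0 - mean_shift) / sd1)


if __name__ == "__main__":
    print(detect(green_count=70, n_tokens=100))   # strong excess of green tokens
    print(detect(green_count=52, n_tokens=100))   # consistent with chance
    print(approximate_power(n_tokens=400, gamma=0.5, green_rate_h1=0.6, alpha=0.01))
```

The threshold fixes the type I error at `alpha`, and `approximate_power` shows how the type II error shrinks as the number of tokens or the gap between the two green rates grows. A full treatment along the lines of the abstract would replace the assumed binomial model with the framework's own test statistics and optimal thresholds.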